Perception as Safety, Not Just Performance

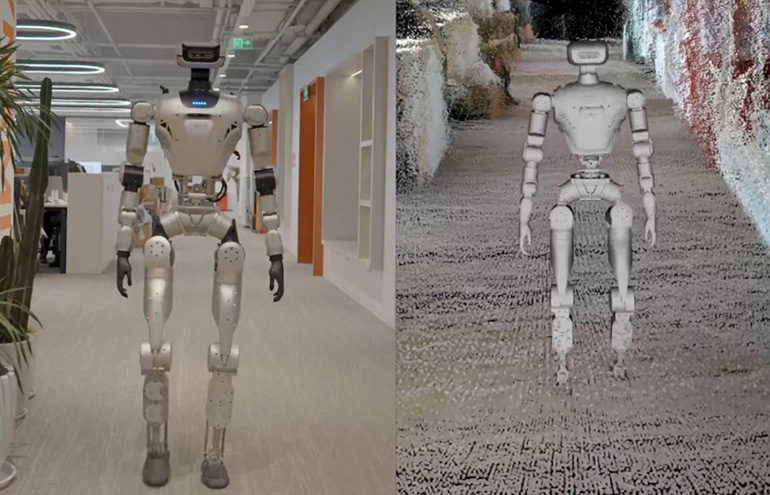

As humanoid robots transition from research settings to commercial and industrial deployment, the technical frameworks for how they perceive and navigate the world are becoming as important as their physical capabilities. At NVIDIA GTC 2026, RealSense demonstrated humanoid robot navigation with LimX Dynamics, showcasing a perceptual architecture that treats safety as a primary design constraint rather than an afterthought.

Nadav Orbach, CEO of RealSense, framed the challenge directly: humanoids operate in three dimensions, alongside people, in environments that are constantly changing. If robots are going to work safely beside humans, perception has to deliver more than raw sensor data. It must function as the robot's visual cortex, enabling accurate localization, collision avoidance, terrain understanding, and stable, predictable motion in unstructured environments.

The Technical Foundation: cuVSLAM and Depth Sensing

The RealSense demonstration used depth cameras and NVIDIA's cuVSLAM, a GPU-accelerated visual simultaneous localization and mapping (SLAM) library, to enable real-time 3D mapping and localization on the LimX humanoid platform. SLAM is a foundational capability for autonomous navigation: it allows a robot to build a map of its environment while simultaneously tracking its own position within that map, without relying on external infrastructure like GPS or pre-installed beacons.
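To make the "map while tracking position" idea concrete, here is a minimal 2D sketch in Python. It is an illustration only: it is not cuVSLAM's API or algorithm, and the names `compose`, `to_map_frame`, and the sample motions are hypothetical. It shows just the bookkeeping: each estimated motion updates the robot's pose, and each observation made from that pose is placed into the shared map frame. A real SLAM system additionally estimates those motions from camera data and corrects accumulated drift.

```python
import math
from typing import List, Tuple

Pose2D = Tuple[float, float, float]  # (x, y, heading in radians) in the map frame
Point2D = Tuple[float, float]


def wrap_angle(a: float) -> float:
    """Wrap an angle to the range [-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def compose(pose: Pose2D, delta: Pose2D) -> Pose2D:
    """Apply a motion (dx, dy, dtheta), expressed in the robot's own frame, to a pose."""
    x, y, th = pose
    dx, dy, dth = delta
    c, s = math.cos(th), math.sin(th)
    return (x + c * dx - s * dy, y + s * dx + c * dy, wrap_angle(th + dth))


def to_map_frame(pose: Pose2D, obs: Point2D) -> Point2D:
    """Convert a point observed in the robot frame into map coordinates."""
    x, y, th = pose
    ox, oy = obs
    c, s = math.cos(th), math.sin(th)
    return (x + c * ox - s * oy, y + s * ox + c * oy)


# Hypothetical data: (estimated motion, landmark seen after that motion).
steps = [
    ((1.0, 0.0, math.pi / 2), (2.0, 0.0)),  # move 1 m forward, then turn left 90 degrees
    ((1.0, 0.0, 0.0), (0.5, 0.0)),          # move 1 m forward along the new heading
]

pose: Pose2D = (0.0, 0.0, 0.0)
landmarks: List[Point2D] = []
for delta, obs in steps:
    pose = compose(pose, delta)                # localization: update the pose estimate
    landmarks.append(to_map_frame(pose, obs))  # mapping: place the observation in the map
```

Because every landmark is positioned using the current pose estimate, any error in the pose propagates into the map, which is why production systems refine both together rather than simply accumulating motions as this sketch does.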

Depth cameras provide the range data needed to construct accurate 3D representations of the environment, detecting obstacles at various distances and heights. For a bipedal humanoid robot navigating terrain designed for humans — including steps, ramps, narrow passages, and cluttered floors — accurate 3D terrain understanding is essential for safe locomotion. A robot that only sees in 2D or has limited depth perception is more likely to misjudge obstacles and fall or collide with people and objects.
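As a rough illustration of how range data becomes a 3D representation, the sketch below back-projects depth pixels into camera-frame points using the standard pinhole camera model. The function names and the intrinsic values (`fx`, `fy`, `cx`, `cy`) are assumptions chosen for the example, not parameters of any specific RealSense camera or of the LimX system.

```python
from typing import List, Optional, Sequence, Tuple

Point3D = Tuple[float, float, float]


def deproject_pixel(u: float, v: float, depth_m: float,
                    fx: float, fy: float, cx: float, cy: float) -> Optional[Point3D]:
    """Back-project pixel (u, v) with a depth in meters to a 3D point in the camera frame.

    Uses the pinhole model: x = (u - cx) * z / fx, y = (v - cy) * z / fy, z = depth.
    Returns None for non-positive depth, which this sketch treats as "no reading".
    """
    if depth_m <= 0.0:
        return None
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def depth_image_to_points(depth: Sequence[Sequence[float]],
                          fx: float, fy: float, cx: float, cy: float) -> List[Point3D]:
    """Convert a row-major depth image (meters) into a list of valid 3D points."""
    points: List[Point3D] = []
    for v, row in enumerate(depth):
        for u, d in enumerate(row):
            p = deproject_pixel(u, v, d, fx, fy, cx, cy)
            if p is not None:
                points.append(p)
    return points


# Hypothetical intrinsics for a 640x480 image.
FX, FY, CX, CY = 600.0, 600.0, 320.0, 240.0
center = deproject_pixel(320, 240, 2.0, FX, FY, CX, CY)  # pixel at the optical center
right = deproject_pixel(620, 240, 2.0, FX, FY, CX, CY)   # pixel 300 columns to the right
cloud = depth_image_to_points([[0.0, 1.0], [1.0, 0.0]], FX, FY, CX, CY)
```

Points like these are what downstream steps would use to reason about step heights, ramps, and obstacles; pixels with no valid depth (for example on reflective surfaces) simply drop out of the cloud, which is one reason such surfaces are hazardous.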

NVIDIA's cuVSLAM runs on the company's Jetson edge computing modules, which are increasingly embedded in robotics platforms to provide the computational power needed for real-time visual processing. By accelerating SLAM on GPU hardware, cuVSLAM can process camera data at rates fast enough for dynamic environments where the positions of people and obstacles change continuously.

The Safety Imperative in Human-Robot Collaboration

The emphasis on safety at RealSense's GTC presentation reflects a broader shift in how the robotics industry is approaching deployment of capable mobile robots in shared human spaces. Industrial robots have historically operated in caged environments to prevent human-robot collisions. Collaborative robots introduced force-limited arms that can operate in closer proximity to people. Humanoid robots represent the next step: platforms designed to navigate freely through human environments and potentially interact directly with people.

This creates a different safety regime. A humanoid robot moving through a warehouse, factory floor, or retail environment must contend with unpredictable human movement, varied lighting, reflective surfaces that confuse depth sensors, and terrain that may shift unexpectedly. Falls are particularly dangerous — a humanoid robot of any meaningful size can injure a person it falls onto.

The LimX platform in the RealSense demonstration appears designed to address these scenarios through redundant perception — using multiple camera modalities and GPU-accelerated processing to maintain a reliable environmental model even under conditions that might defeat a less capable system.

The GTC Context: NVIDIA's Robotics Push

NVIDIA GTC 2026 has featured an unusually dense cluster of robotics announcements, reflecting Jensen Huang's stated commitment to making robotics a major business pillar alongside data center AI. NVIDIA's Jetson platform and Isaac robotics stack are positioning the company as the dominant compute provider for autonomous systems, a role analogous to the one its GPUs now play in AI training and inference.

The RealSense-LimX demonstration is one example of how NVIDIA is enabling an ecosystem of robotics companies to build capable systems on its hardware and software stack. By providing cuVSLAM as a pre-built library optimized for NVIDIA hardware, the company reduces the development burden on robotics firms and standardizes the computing layer, a strategy that has worked well in the AI data center market.

The broader implication is that humanoid robot navigation is approaching a level of maturity where commercial deployment in constrained industrial environments is becoming feasible, with safety-focused perceptual architectures like the RealSense approach providing the enabling infrastructure. The question is no longer whether robots can navigate human spaces, but whether they can do so reliably and safely enough to operate without constant human supervision.

This article is based on reporting by The Robot Report.

Originally published on therobotreport.com