The Physical World Gets an AI Upgrade

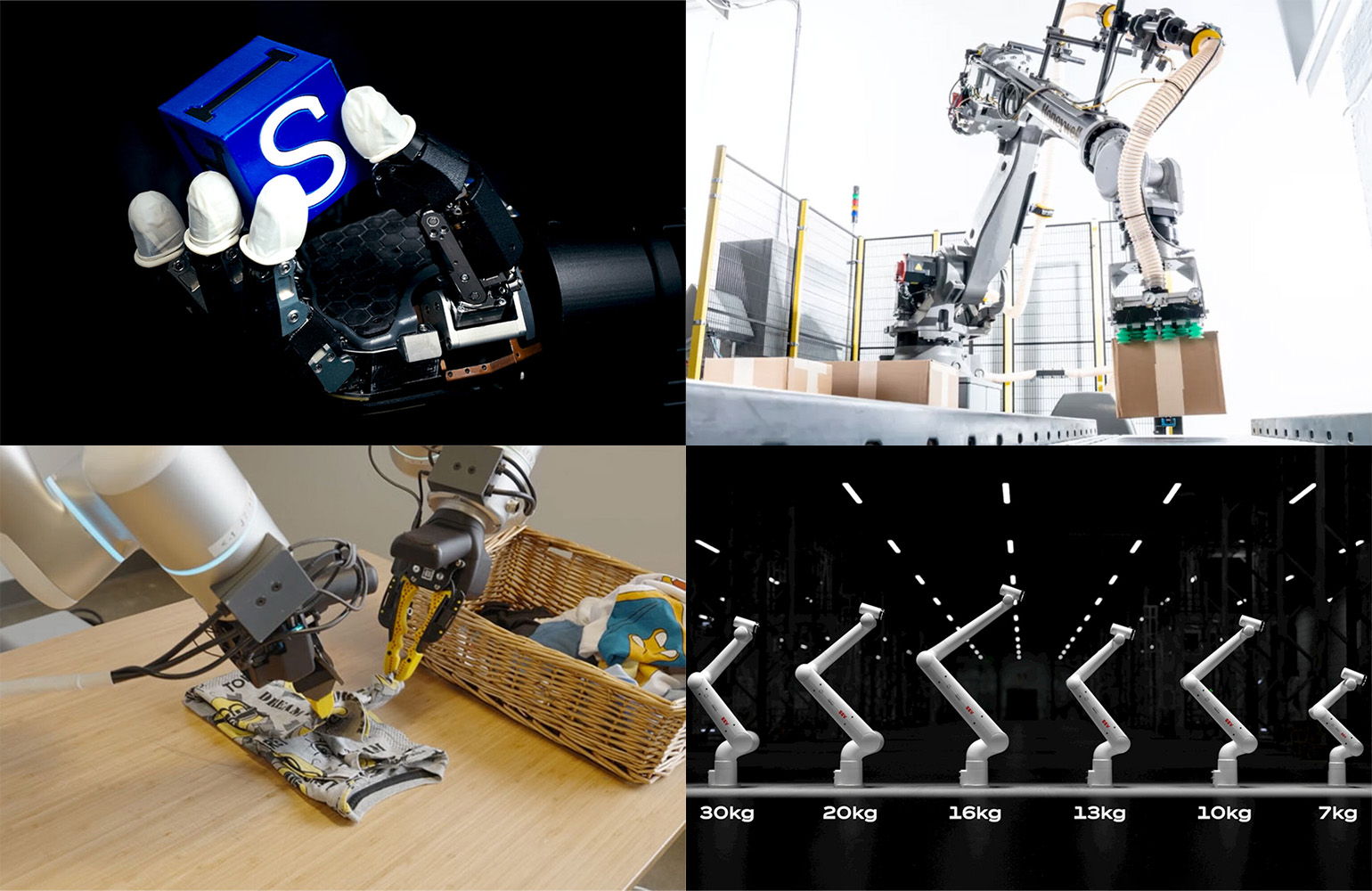

Nvidia's annual GTC developer conference has become one of the most closely watched events on the AI industry calendar, and the 2026 edition was no exception. While previous years established Nvidia's dominance in data center AI computing, GTC 2026 marked a decisive pivot toward what CEO Jensen Huang described as physical AI: the deployment of AI in systems that interact with the physical world rather than only processing digital data. Announcements spanning autonomous vehicles, industrial robotics, and humanoid robot platforms represent a strategic expansion that could reshape several industries at once.

The unifying thread is Nvidia's ambition to become the computational substrate of the physical AI era, just as it became the substrate of the data center AI era. If the company succeeds, its AI chips, software platforms, and simulation tools will be as central to the next generation of industrial robots and self-driving cars as its GPU clusters are to today's large language models.

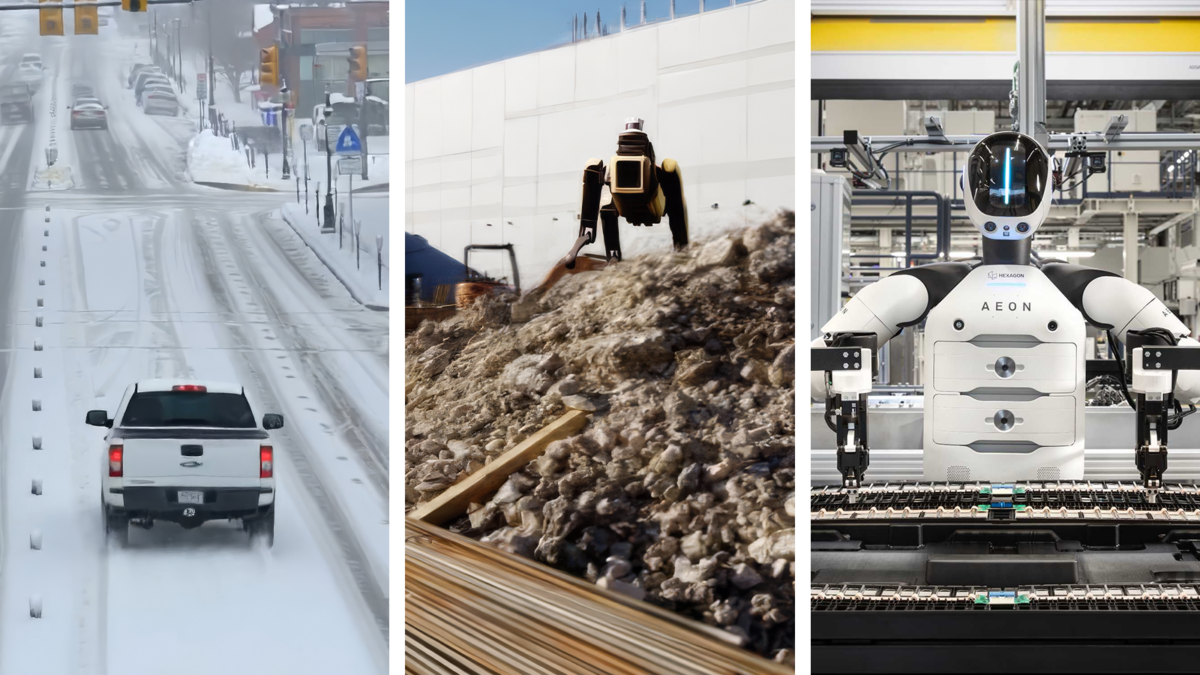

Autonomous Vehicles Hit Los Angeles Streets

Perhaps the most consumer-visible announcement was a partnership with Uber to deploy autonomous vehicles in Los Angeles beginning in 2027. The vehicles will use Nvidia's Drive Orin platform for perception and decision-making, running neural networks that are trained and tested in Nvidia's Omniverse simulation environment before deployment on public roads. The partnership positions Nvidia as an infrastructure provider to the AV industry rather than an operator: the company supplies the computing hardware and software, while partners like Uber handle fleet management, mapping, and regulatory relationships.

Los Angeles is a particularly challenging environment for autonomous vehicles, with complex intersections, aggressive driving, frequent construction, and dense pedestrian traffic in commercial districts. Nvidia's decision to showcase its platform in LA rather than in a more controlled setting suggests confidence in the robustness of its current generation of AV hardware and software.