Safety Moves Up the Stack for Connected Machines

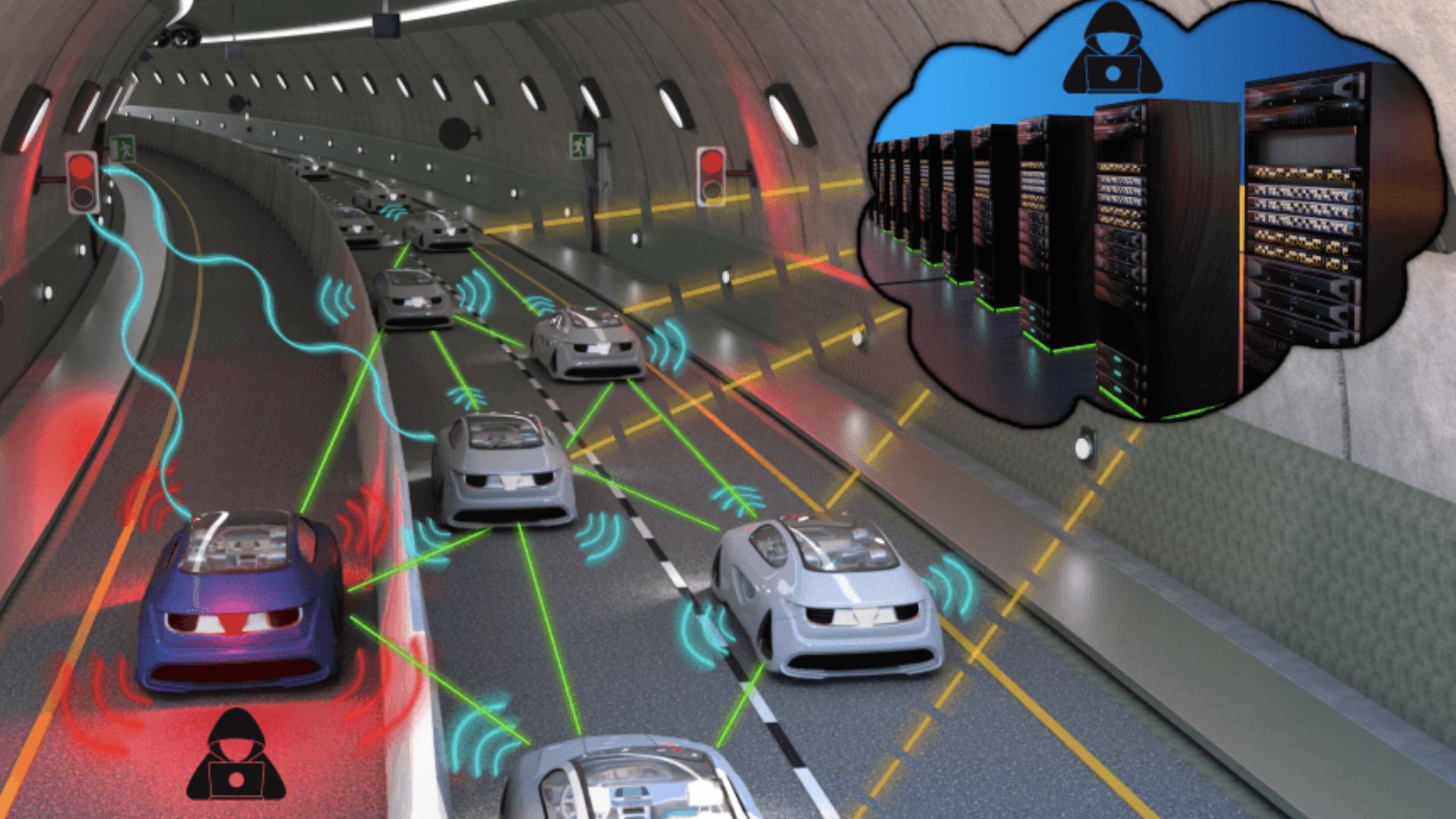

As autonomous systems spread from controlled industrial settings into streets, campuses, warehouses, and neighborhoods, one of the most pressing questions is no longer whether a single machine can perceive its environment, but whether many machines can coordinate safely when they are linked together. A report from Interesting Engineering says a US-led team has introduced a framework intended to make networks of robots, self-driving cars, and related connected systems safer.

Even from the limited details available in the report, the focus is clear: safety in machine networks rather than safety in one isolated device. That is an important distinction. The safety challenge for an autonomous vehicle or delivery robot does not end with onboard sensing and control. Once multiple agents begin sharing data, acting on common information, or depending on the behavior of other machines, the safety problem becomes system-wide.

A framework designed for that environment matters because networked autonomy creates new failure modes. A robot can make a correct local decision and still contribute to an unsafe overall outcome if shared information is delayed, inconsistent, or misunderstood by other machines in the network.
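A minimal Python sketch can make that failure mode concrete. It is a toy model, not the framework from the report, whose technical details were not described. Two hypothetical robots share one intersection cell. Each follows a locally sensible rule: enter only if no other robot has visibly claimed the cell. The `SharedBoard` class, the `delay` parameter (how many ticks a claim takes to reach other agents), and the agent names are all invented for illustration.

```python
class SharedBoard:
    """Hypothetical shared state: claims on a single intersection cell.

    A claim written at tick t becomes visible to readers only at tick t + delay,
    a crude stand-in for network latency.
    """

    def __init__(self, delay: int) -> None:
        self.delay = delay
        self._writes: list[tuple[int, str]] = []  # (tick written, agent id)

    def claim(self, tick: int, agent_id: str) -> None:
        self._writes.append((tick, agent_id))

    def visible_claims(self, tick: int) -> list[str]:
        return [agent for (t, agent) in self._writes if tick - t >= self.delay]


def simulate(delay: int, arrivals: dict[str, int]) -> list[str]:
    """Return the agents that enter the cell, in the order they enter.

    Each agent's rule is locally correct: it enters only if it sees no rival claim.
    The cell is never released in this toy model.
    """
    board = SharedBoard(delay=delay)
    entered: list[str] = []
    for tick in range(max(arrivals.values()) + 1):
        # Agents arriving on the same tick act in name order.
        for agent in sorted(a for a, t in arrivals.items() if t == tick):
            rivals = [a for a in board.visible_claims(tick) if a != agent]
            if not rivals:
                board.claim(tick, agent)
                entered.append(agent)
            # Otherwise the agent waits; waiting is not modeled further here.
    return entered


if __name__ == "__main__":
    arrivals = {"robot_a": 0, "robot_b": 1}
    print(simulate(delay=0, arrivals=arrivals))  # ['robot_a']
    print(simulate(delay=2, arrivals=arrivals))  # ['robot_a', 'robot_b']
```

With `delay=0`, the second robot sees the first robot's claim and holds back. With `delay=2`, that claim has not yet arrived when the second robot checks, so both robots enter the same cell even though neither broke its own rule.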

Why Network Safety Is Different

Traditional safety engineering often begins by asking how one machine fails and how those failures can be contained. Connected autonomy requires a broader lens. In a networked system, a problem can propagate. A bad signal, incorrect state estimate, or mistimed instruction can influence multiple agents at once. The result may be congestion, collision risk, or cascading confusion across the network.
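The propagation point can be sketched as a reachability question on a communication graph. Assume, purely for illustration, that each agent relays what it receives to the agents it links to. Under that assumption, a breadth-first search from one faulty source bounds which agents a corrupted message could reach within a given number of relays. The agent names and the `links` topology below are hypothetical. This is a generic graph technique, not the method of the reported framework.

```python
from collections import deque


def affected_agents(links: dict[str, list[str]], faulty: str, max_hops: int) -> set[str]:
    """Return agents reachable from `faulty` within `max_hops` relays (breadth-first search)."""
    hops = {faulty: 0}
    queue = deque([faulty])
    while queue:
        node = queue.popleft()
        if hops[node] == max_hops:
            continue  # do not expand past the hop limit
        for neighbor in links.get(node, []):
            if neighbor not in hops:
                hops[neighbor] = hops[node] + 1
                queue.append(neighbor)
    return set(hops) - {faulty}


# Hypothetical topology: which agent forwards shared state to which.
links = {
    "roadside_sensor": ["shuttle_1", "robot_a"],
    "shuttle_1": ["shuttle_2"],
    "robot_a": ["robot_b"],
}

print(sorted(affected_agents(links, "roadside_sensor", max_hops=1)))
# ['robot_a', 'shuttle_1']
print(sorted(affected_agents(links, "roadside_sensor", max_hops=2)))
# ['robot_a', 'robot_b', 'shuttle_1', 'shuttle_2']
```

In this toy network, one bad reading from the roadside sensor reaches two agents after one relay and four after two. A failure analysis that looks at each machine alone would not capture this kind of fan-out.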

That is why a formal framework is potentially more important than an incremental software patch. Frameworks help define how systems should be modeled, what assumptions are safe to make, and how interactions should be evaluated before deployment. In emerging sectors such as robot delivery, cooperative autonomy, and connected vehicle ecosystems, those questions are central to scaling from pilots to real infrastructure.

The report’s mention of self-driving cars and delivery robots is especially relevant. These are systems expected to operate around people, in dynamic environments, and often under incomplete information. Their performance depends not only on sensing and planning but also on the reliability of communication, coordination, and shared rules.

From Standalone Autonomy to Cooperative Autonomy

The development also reflects a broader shift in advanced mobility and robotics. For years, much of the engineering effort in autonomy centered on making individual systems more capable. Increasingly, the field is moving toward cooperative autonomy, where value comes from many machines working together. That can improve efficiency, coverage, and responsiveness, but it also raises the stakes for safety governance.

Consider a city where delivery robots, autonomous shuttles, roadside sensors, and logistics platforms all interact. The safety question is no longer just whether each component passes its own test. It is whether the full network behaves predictably under stress, degradation, or mixed traffic conditions. A framework meant to address that problem could therefore be relevant across transportation, logistics, municipal technology, and industrial automation.
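A small check can illustrate the gap between passing component tests and safe network-level behavior. In this sketch, each planned trajectory passes a per-component bounds test, yet a pairwise separation check across agents still flags a conflict. Several details are assumptions chosen for illustration:

- the trajectory format (one (x, y) point per shared time step, in meters);
- the `bounds` and `min_sep` thresholds;
- the agent names.

Nothing here reflects the reported framework's actual method.

```python
import math

# Hypothetical format: one (x, y) position in meters per shared time step.
Trajectory = list[tuple[float, float]]


def component_ok(traj: Trajectory, bounds: float) -> bool:
    """Per-component test: every waypoint stays inside a square operating area."""
    return all(abs(x) <= bounds and abs(y) <= bounds for x, y in traj)


def network_violations(trajs: dict[str, Trajectory], min_sep: float) -> list[tuple[str, str, int]]:
    """System-level test: (agent, agent, step) wherever two agents come closer than min_sep."""
    names = sorted(trajs)
    found = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            # Compare positions at the same time step only.
            for step, (pa, pb) in enumerate(zip(trajs[a], trajs[b])):
                if math.dist(pa, pb) < min_sep:
                    found.append((a, b, step))
    return found


plans = {
    "robot": [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)],
    "shuttle": [(2.0, 2.0), (2.0, 1.0), (2.0, 0.5)],
}
print(all(component_ok(t, bounds=5.0) for t in plans.values()))  # True
print(network_violations(plans, min_sep=1.0))  # [('robot', 'shuttle', 2)]
```

Both plans pass their own test. Only the cross-agent check finds that the robot and shuttle are 0.5 m apart at step 2.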

It could also matter for regulation. Policymakers tend to move more slowly than technology deployment, especially when systems are novel and technically complex. Safety frameworks can give regulators and operators a common language. They can help clarify what should be measured, what should be audited, and what kinds of evidence ought to be required before systems are trusted at scale.

What to Watch Next

The report does not describe the framework's technical details, so the most defensible conclusion is also the most important one: researchers are treating network safety as a first-order engineering problem. That is a meaningful development in its own right.

The next question will be whether the framework proves useful outside academic or laboratory settings. For any approach in this area, real impact depends on adoption by developers, mobility operators, manufacturers, and eventually standards bodies. A good framework must be concrete enough to guide design decisions and testing, yet flexible enough to apply across different machine types and operating environments.

If that balance can be achieved, the payoff could be substantial. Networked robots and connected autonomous vehicles are likely to become more common, not less. As they do, public acceptance will hinge on whether safety keeps pace with capability. A framework that helps organizations reason about system-wide risk could become part of the invisible infrastructure that makes autonomy dependable.

That is why this announcement deserves attention. It points to a maturing field, one that is beginning to recognize that the hardest safety problems in autonomy may emerge not from a single machine going wrong, but from many machines trying to work together at once.

This article is based on reporting by Interesting Engineering.

Originally published on interestingengineering.com