The Bin Picking Problem

In the landscape of industrial automation challenges, deep bin picking occupies a special place: it is difficult, economically significant, and stubbornly resistant to the kinds of programmatic solutions that have made robotic automation successful in more structured applications. The task sounds simple — pick randomly oriented parts from a bin and place them correctly for the next step of a manufacturing process — but it combines several distinct technical challenges that, together, have made reliable automated solutions elusive for decades.

Parts in a deep bin are randomly oriented in three dimensions. They may be entangled, stacked, or partially obscured by other parts. The bin's walls create geometric constraints that limit robot arm approaches. Part surfaces vary in reflectivity, translucence, and texture in ways that complicate machine vision. And the physical act of grasping and extracting a part from a jumbled pile requires adaptive force control: applying enough force to grip reliably without damaging the part, while navigating the mechanical interactions with surrounding parts that shift as items are removed.

For manufacturers running multi-shift facilities with high part volumes, this challenge represents a significant bottleneck and labor cost. Human operators handle bin picking intuitively, drawing on visual perception and tactile feedback that they apply without explicit programming. But the labor cost and variability of manual bin picking, particularly in high-mix production environments where the part portfolio is large and constantly changing, make automation compelling if the reliability bar can be met.

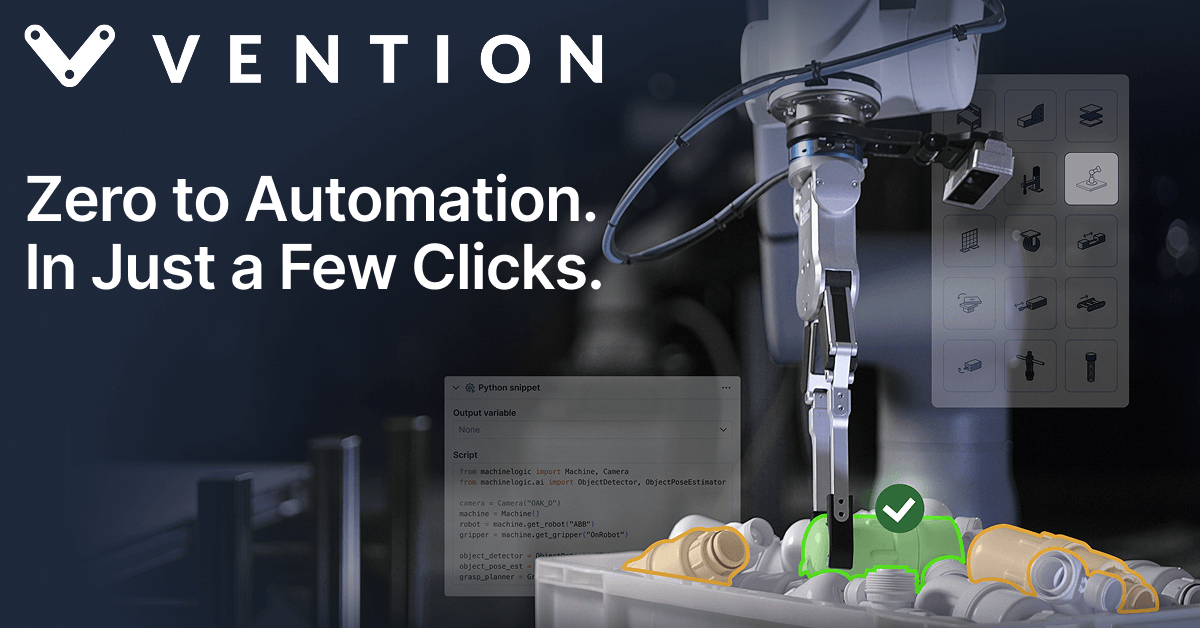

What Rapid Operator AI Does

Vention's Rapid Operator AI addresses the bin picking challenge through a combination of adaptive machine vision, learned grasping policies, and real-time force-feedback control. The system uses depth cameras and structured light to build a three-dimensional representation of the bin contents, identifying individual parts and their orientations within the jumbled pile. Grasp pose estimation (computing the optimal approach angle, gripper orientation, and contact points for a successful grasp) is handled by neural network models trained on large datasets of part images and successful grasp attempts.
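To make the idea of a three-dimensional representation concrete, the sketch below shows the standard pinhole-camera back-projection that turns a depth image into 3D points. It is not Vention's implementation: the function names, the intrinsics (`fx`, `fy`, `cx`, `cy`), and the "pick the topmost point" heuristic are assumptions chosen for illustration, and a production system would use learned grasp models rather than this simple rule.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def depth_to_points(depth: List[List[float]],
                    fx: float, fy: float,
                    cx: float, cy: float) -> List[Point]:
    """Back-project a depth image (metres) into camera-frame 3D points.

    Uses the pinhole model: x = (u - cx) * z / fx, y = (v - cy) * z / fy.
    The intrinsics are assumed to come from camera calibration.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0.0:  # non-positive depth is treated as a missing reading
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


def topmost_point(points: List[Point]) -> Point:
    """Hypothetical heuristic: the point nearest a downward-looking camera.

    Parts on top of the pile are usually the least obstructed, so the
    smallest z is a plausible first grasp candidate.
    """
    return min(points, key=lambda p: p[2])


if __name__ == "__main__":
    depth_image = [[1.0, 0.0],
                   [2.0, 0.5]]
    cloud = depth_to_points(depth_image, fx=1.0, fy=1.0, cx=0.0, cy=0.0)
    print(topmost_point(cloud))
```

In practice the resulting point cloud would be segmented into individual parts before any grasp pose is scored; the heuristic here only stands in for that later stage.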

The machine learning component is critical to the system's adaptability. Unlike template-based machine vision systems that require precise CAD models and break down when parts deviate from expected orientations, Rapid Operator AI's neural models can generalize from training data to handle novel presentations and new part geometries with relatively limited retraining. For high-mix manufacturers running dozens or hundreds of different part numbers, this generalization capability is the difference between a system that is useful across the production portfolio and one that works for a specific part family but requires significant engineering effort to extend to others.

The force-feedback integration addresses the mechanical challenge of extracting parts from a bin without damage. The system monitors gripper forces in real time, detecting when a part is entangled or when the extraction path is obstructed, and adjusting the robot's trajectory accordingly. This feedback loop allows the system to handle the stochastic mechanics of a bin pile — the cascading movements of parts as items are removed — without the brittle failure modes that plague open-loop bin picking systems when the real world deviates from the expected configuration.
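The closed-loop behavior described above can be sketched as a simple lift routine that checks gripper force at every step and backs off when the reading exceeds a limit. This is a minimal illustration under stated assumptions, not the product's controller: `read_force_n` is a hypothetical callback standing in for a real force sensor, and the step size, force limit, and retry count are arbitrary example values. A real system would also adjust the lateral trajectory rather than only retreating and retrying.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ExtractionResult:
    success: bool
    height_mm: float
    retries: int


def extract_part(read_force_n: Callable[[float], float],
                 target_height_mm: float = 100.0,
                 step_mm: float = 5.0,
                 force_limit_n: float = 15.0,
                 max_retries: int = 3) -> ExtractionResult:
    """Lift a gripped part in small steps, reacting to measured force.

    read_force_n(height) is a hypothetical sensor callback returning the
    force (newtons) that would be felt at the candidate height.
    """
    height = 0.0
    retries = 0
    while height < target_height_mm:
        candidate = min(height + step_mm, target_height_mm)
        force = read_force_n(candidate)
        if force > force_limit_n:
            # Excess force suggests entanglement or an obstructed path.
            if retries >= max_retries:
                return ExtractionResult(False, height, retries)
            retries += 1
            height = max(0.0, height - step_mm)  # back off one step
            continue
        height = candidate  # path is clear, commit the move
    return ExtractionResult(True, height, retries)


if __name__ == "__main__":
    # Simulated snag: force spikes once the part reaches 50 mm.
    print(extract_part(lambda h: 20.0 if h >= 50.0 else 5.0))
```

An open-loop system would execute the full lift regardless of the force reading. Here the routine stops after a bounded number of retries and reports the failure, so a higher-level planner can choose a different part or approach.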