From World Models to Robot Control

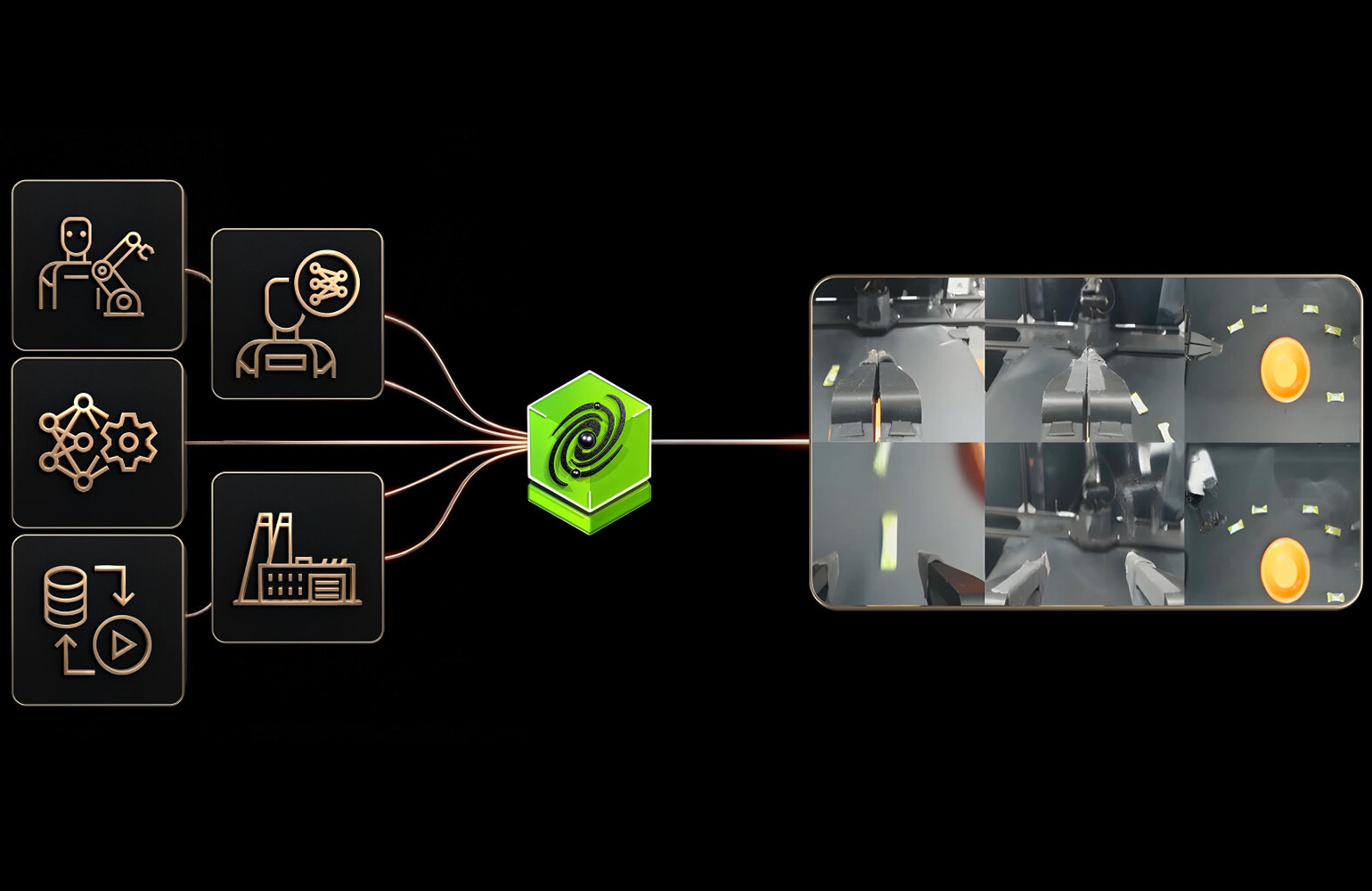

NVIDIA has announced Cosmos Policy, a new addition to its growing family of world foundation models that bridges the gap between environmental understanding and physical robot control. The model is built on top of Cosmos Predict-2, NVIDIA's existing world foundation model that generates predictions about how physical environments will change over time. Cosmos Policy takes those predictions and translates them into actionable control signals that robots can use to perform complex manipulation tasks.

The announcement represents a significant evolution in NVIDIA's approach to robotics AI. Rather than depending on task-specific training through extensive demonstrations or reward engineering, Cosmos Policy draws on a generalized understanding of physical dynamics to enable more flexible and adaptive robot behavior. In principle, a robot equipped with Cosmos Policy should be able to approach novel manipulation tasks with a foundational understanding of how objects interact with each other and with the robot's own body.

How Cosmos Policy Works

At its core, Cosmos Policy is produced by post-training the Cosmos Predict-2 world foundation model. Cosmos Predict-2 is trained on vast quantities of video showing real-world physical interactions and learns to predict what will happen next in a given scene. Given an image of a table with objects on it, for example, the model can predict how those objects will move if they are pushed, lifted, or dropped.
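To make "predicting what happens next" concrete, here is a deliberately tiny stand-in for a world model: a hand-written, one-dimensional physics step for an object pushed along a table. It is not Cosmos Predict-2, which learns its dynamics from video rather than from equations; the state representation, the constants `DT`, `FRICTION` and `TABLE_EDGE`, and the functions `predict_next`, `rollout` and `falls_off_table` are all hypothetical, chosen only to illustrate the predict-the-next-state idea.

```python
from dataclasses import dataclass

# Hypothetical constants for a toy 1-D tabletop scene (not taken from Cosmos).
DT = 0.1          # prediction step, seconds
FRICTION = 2.0    # friction deceleration, metres per second squared
TABLE_EDGE = 1.0  # position of the table edge, metres


@dataclass(frozen=True)
class ObjectState:
    x: float  # position along the table, metres
    v: float  # velocity, metres per second


def predict_next(state: ObjectState, push: float = 0.0) -> ObjectState:
    """Predict the object's state one step ahead; `push` is an instant velocity change."""
    v = state.v + push
    # Friction slows the object toward rest but never reverses its direction.
    if v > 0:
        v = max(0.0, v - FRICTION * DT)
    elif v < 0:
        v = min(0.0, v + FRICTION * DT)
    return ObjectState(x=state.x + v * DT, v=v)


def rollout(state: ObjectState, push: float, steps: int) -> list[ObjectState]:
    """Apply the push on the first step only, then let the object coast."""
    states = [state]
    for t in range(steps):
        state = predict_next(state, push if t == 0 else 0.0)
        states.append(state)
    return states


def falls_off_table(state: ObjectState, push: float, steps: int = 20) -> bool:
    """Answer a 'what if' question by imagining the outcome instead of trying it."""
    return any(s.x > TABLE_EDGE for s in rollout(state, push, steps))


if __name__ == "__main__":
    start = ObjectState(x=0.5, v=0.0)
    print(rollout(start, push=1.0, steps=10)[-1])  # object slides, then stops
    print(falls_off_table(start, push=1.0))        # gentle push
    print(falls_off_table(start, push=3.0))        # hard push
```

A learned world model replaces `predict_next` with a network conditioned on camera images, but the interface is the same in spirit: current state plus a candidate action in, predicted future out.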

Cosmos Policy builds on this predictive capability by adding a control policy that determines what actions the robot should take to achieve a desired outcome. The system works through the following process:

- Scene understanding: The robot uses its cameras and sensors to capture the current state of its environment, and Cosmos Predict-2 builds an internal representation of the scene's physical dynamics.

- Goal specification: The operator or a higher-level planning system specifies what the robot should accomplish, such as picking up an object, placing it in a specific location, or assembling components.

- Action generation: Cosmos Policy uses the world model's understanding of physics to generate a sequence of motor commands that will move the robot's arms and grippers to accomplish the goal.

- Real-time adaptation: As the robot executes the task, the system continuously updates its predictions based on new sensor data, allowing it to adjust its actions if the environment changes unexpectedly.
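The four steps above form a closed perception, prediction and action loop. The sketch below shows one generic way such a loop can be built on top of a predictive model: receding-horizon planning (a simple form of model predictive control) over a small set of candidate actions. The article does not say that Cosmos Policy works this way; the one-dimensional gripper, the functions `world_model`, `plan` and `run`, the action set, and the `drift` disturbance are all hypothetical.

```python
# Hypothetical constants for a 1-D gripper that moves along a rail.
DT = 0.1                                              # control period, seconds
HORIZON = 5                                           # steps the planner looks ahead
CANDIDATE_ACTIONS = [i / 10 for i in range(-10, 11)]  # velocity commands, -1.0 to 1.0 m/s


def world_model(x: float, action: float) -> float:
    """Stand-in for a learned predictor: gripper position after one control period."""
    return x + action * DT


def plan(x: float, goal: float) -> float:
    """Action generation: pick the command whose imagined rollout ends nearest the goal."""
    def predicted_error(action: float) -> float:
        pred = x
        for _ in range(HORIZON):
            pred = world_model(pred, action)
        return abs(pred - goal)

    return min(CANDIDATE_ACTIONS, key=predicted_error)


def run(x0: float, goals: list[float], drift: float = 0.0) -> float:
    """Closed loop: observe, plan, act once, then re-plan from the new observation.

    `goals` holds one goal per step, so the target can change mid-task;
    `drift` is a disturbance that the world model does not know about.
    """
    x = x0
    for goal in goals:
        action = plan(x, goal)        # plan from the latest observed state
        x = x + action * DT + drift   # the real environment, including the drift
    return x


if __name__ == "__main__":
    print(run(0.0, [0.5] * 30))                # reach a fixed target
    print(run(0.0, [0.5] * 30, drift=0.01))    # same target, unmodelled drift
    print(run(0.0, [0.5] * 20 + [0.2] * 20))   # target moves mid-task
```

Because the candidate set is coarse (0.1 m/s increments), this loop settles within a few centimetres of the goal rather than exactly on it. In the drifting case it still holds position near the target, because every step re-plans from a fresh observation; that is the "real-time adaptation" step in miniature.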

This approach differs fundamentally from both traditional robot programming, where engineers manually specify every motion, and pure reinforcement learning, where the robot must learn entirely through trial and error. By starting with a pre-trained understanding of physical dynamics, Cosmos Policy gives robots a significant head start on new tasks.

Why World Foundation Models Matter for Robotics

The concept of world foundation models has been gaining traction in the robotics and AI research communities for several years, but NVIDIA's Cosmos family represents one of the most commercially ambitious implementations of the idea. The core insight is that robots operating in the physical world need more than pattern recognition or language understanding. They need an intuitive grasp of physics, the kind of understanding that allows a human to predict that a glass placed on the edge of a table will fall, or that a heavy object requires more force to lift than a light one.

Traditional approaches to robot learning have struggled with this. Reinforcement learning can produce impressive results for specific tasks, but the knowledge often does not transfer well to new situations. Imitation learning requires extensive demonstration data for each new task. And manual programming is too inflexible for environments that change frequently.

World foundation models offer a potential path around these limitations. Training a single model on massive quantities of real-world video data yields a system with a general understanding of physical dynamics that can be applied across many different tasks and environments. Cosmos Policy is NVIDIA's attempt to turn that general understanding into practical robot control.

Integration with NVIDIA's Robotics Ecosystem

Cosmos Policy does not exist in isolation. It is designed to integrate with NVIDIA's broader robotics software stack, including Isaac Sim for simulation, Isaac ROS for robot operating system integration, and the Jetson hardware platform for edge computing. This ecosystem approach is a key part of NVIDIA's strategy, because a control policy is only useful if it runs efficiently on the hardware robots actually carry and can communicate with the software systems that manage robot fleets.

NVIDIA says Cosmos Policy has been validated in both simulated and real-world manipulation tasks, including pick-and-place operations, object handoff between robot arms, and assembly tasks that require precise alignment of components. The company is making the model available to developers through its NVIDIA AI platform, with the goal of enabling rapid experimentation and deployment across a wide range of robotic applications.

Competitive Implications

The introduction of Cosmos Policy positions NVIDIA more aggressively in the robot control software market, which has traditionally been dominated by specialized robotics companies and research institutions. By offering a pre-trained world model with built-in control capabilities, NVIDIA is lowering the barrier to entry for companies that want to deploy sophisticated manipulation robots but lack the in-house AI expertise to build these capabilities from scratch.

Competitors in this space include Google DeepMind, which has its own line of robotics foundation models, and several startups working on generalizable robot learning. NVIDIA's advantage lies in its integrated hardware-software ecosystem and its massive installed base of GPU computing infrastructure, which provides the computational foundation needed to train and run models of this complexity.

For the robotics industry as a whole, the arrival of Cosmos Policy suggests that the era of general-purpose robot manipulation, where a single robot can handle a wide variety of physical tasks without task-specific programming, is moving from research aspiration toward commercial reality. How quickly that transition happens will depend on the reliability and performance of systems like Cosmos Policy in real-world deployments, a question that the industry will be answering over the coming months and years.

This article is based on reporting by The Robot Report.

Originally published on therobotreport.com