The AI debate is no longer centered on the same fears

Stanford’s latest AI Index is sharpening a divide that has been visible for months but is now becoming harder to dismiss: experts and everyday users are not talking about the same technology in the same way. As summarized by MIT Technology Review, the report shows a wide gap between expert optimism and public unease, particularly around AI’s impact on jobs, medical care, and the economy.

The numbers are stark. On jobs, 73% of U.S. experts are positive about AI’s impact, compared with only 23% of the public, a gap of 50 percentage points. That is not a minor disagreement about pace or regulation. It suggests two very different lived experiences of the current AI boom.

Why the disconnect is widening

One explanation raised in the Technology Review analysis is that experts and non-experts encounter AI in fundamentally different contexts. Heavy users, especially those using AI to code or accelerate professional work, are more likely to experience the technology as leverage. They see tasks completed faster, ideas prototyped more easily, and productivity gains that feel concrete. For them, AI can look like a powerful tool whose shortcomings are tolerable because the upside is immediate.

The broader public often sees something else. People worry about their paychecks, about whether automation will compress wages, about how AI will alter medical care, and even about whether data-center growth will raise utility costs. Those concerns are not speculative in the way that long-run debates over artificial general intelligence are. They are grounded in ordinary economic insecurity and in the visible restructuring of work and infrastructure happening around the technology.

The industry’s whiplash problem

The Technology Review analysis also points to a second source of tension: AI produces contradictory signals. Models can achieve extraordinary results on some benchmarked tasks while still failing at apparently simpler ones. The article cites Stanford’s note that Google DeepMind’s Gemini Deep Think earned a gold medal at the International Mathematical Olympiad yet cannot read analog clocks half the time. Whether one reads that as a limitation of current systems or as evidence of rapid but uneven progress, it contributes to the sense that AI is both overhyped and transformative at once.

That contradiction helps explain why public opinion is so unstable. People are being told that AI will change the economy, medicine, and employment, while also seeing repeated examples of brittle performance. The result is not confidence. It is confusion. And confusion tends to harden into mistrust when companies continue to push deployment at speed.

What the AI Index suggests about the next phase

- Public skepticism is becoming a central political and market variable, not a temporary PR issue.

- Expert enthusiasm appears closely tied to direct, high-frequency use of AI tools.

- Economic concerns are more salient to the public than abstract AGI scenarios.

- AI’s uneven capabilities are reinforcing both excitement and backlash at the same time.

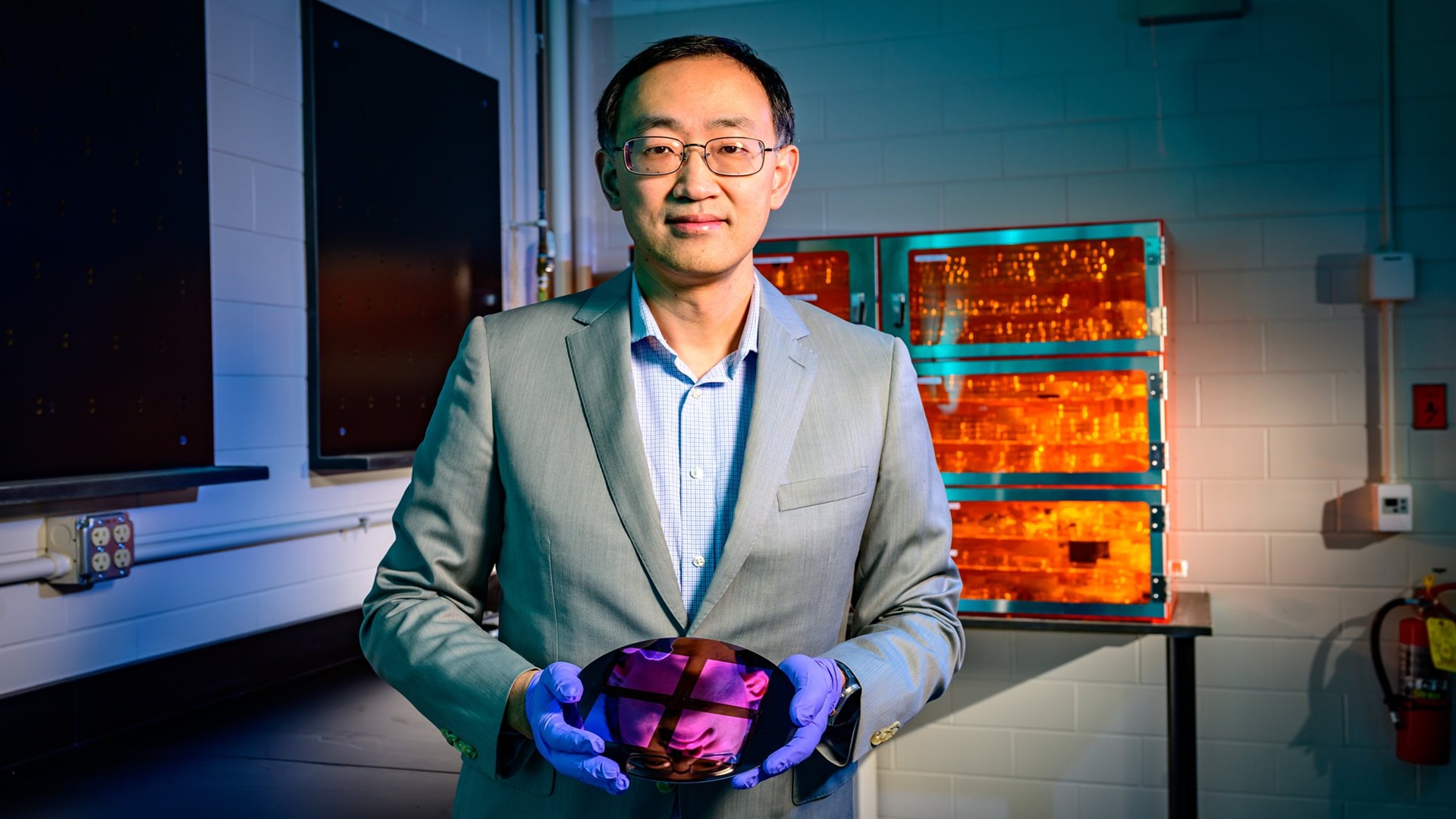

The Technology Review article also notes major structural facts behind the boom, including the United States’ vast data-center footprint and a global chip supply chain heavily dependent on TSMC in Taiwan. Those details matter because they show how AI is already reshaping real infrastructure, capital allocation, and geopolitical exposure. Public concern is therefore not disconnected from reality. It is responding to a technology wave that is materially changing systems people depend on.

For companies building AI products, the implication is uncomfortable but clear. Adoption cannot be treated as the only metric that matters. If the public increasingly believes AI is being built for insiders while costs and risks are socialized outward, the backlash will deepen regardless of technical progress. Better models alone will not close a trust gap rooted in employment anxiety, healthcare fears, and economic uncertainty.

Stanford’s AI Index does not resolve the argument over where AI is headed. It does something more important. It shows that the argument itself has split into separate realities. One is defined by power-user gains and frontier-model momentum. The other is defined by fragility, inequality, and the fear that the benefits will not be shared. Any serious conversation about AI policy or deployment now has to start there.

This article is based on reporting by MIT Technology Review.

Originally published on technologyreview.com