Voice AI feels natural only when the network disappears

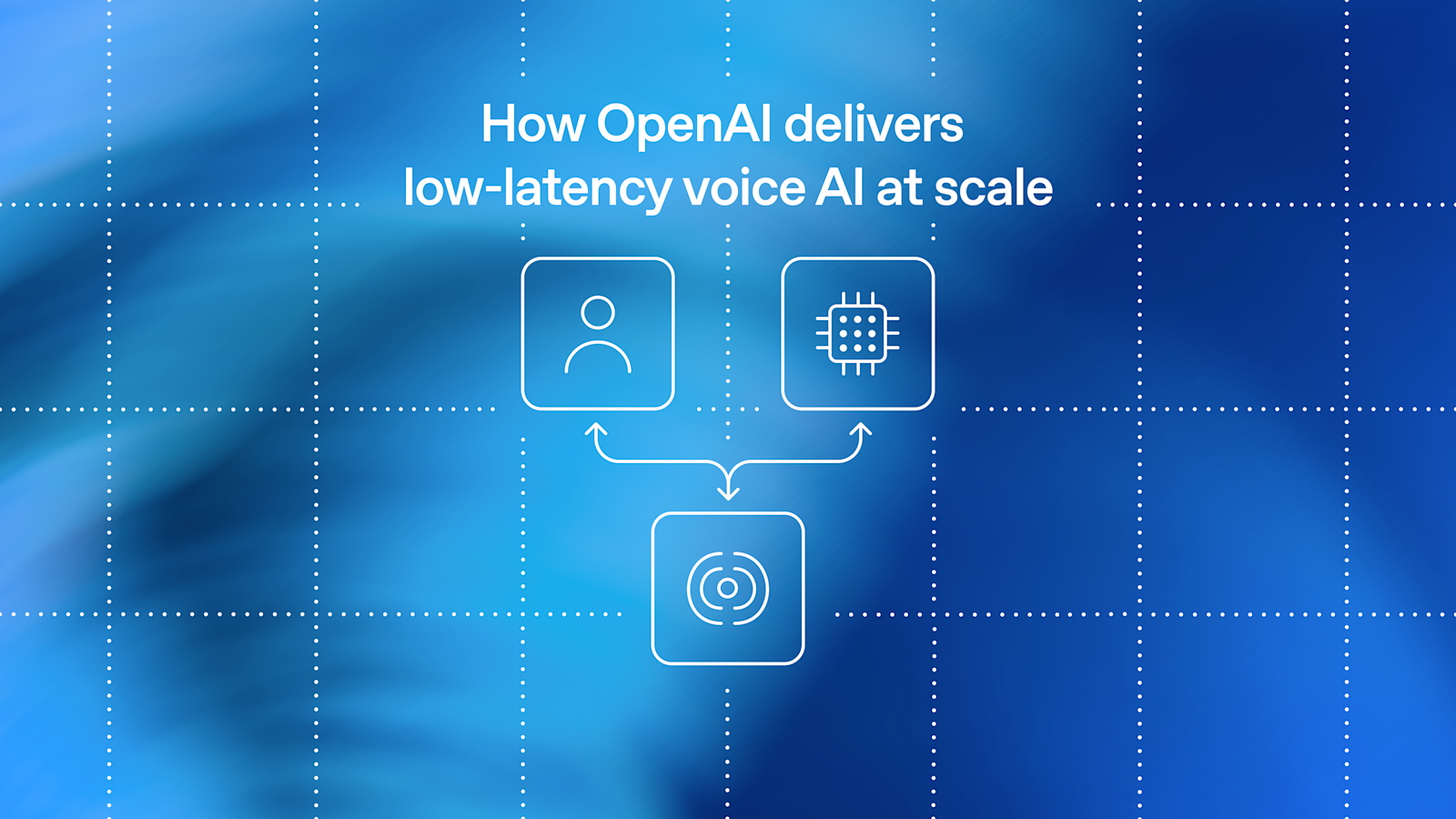

OpenAI has published a rare infrastructure-level look at how it is delivering low-latency voice AI at global scale, outlining a redesign of its WebRTC stack to support real-time speech interactions across products including ChatGPT voice, the Realtime API, and agent workflows that need to process audio while a user is still talking.

The engineering problem is straightforward to describe and difficult to solve. Spoken conversation has a much lower tolerance for delay than many other forms of software interaction. When a system hesitates, clips a user, or responds too slowly to interruption, people notice immediately. OpenAI frames the challenge around three concrete requirements: global reach for more than 900 million weekly active users, fast connection setup so users can begin speaking as soon as a session starts, and low, stable media round-trip time with minimal jitter and packet loss so turn-taking remains crisp.

Those goals help explain why the company’s latest work is focused less on model behavior alone and more on the transport systems that make speech feel immediate. In voice products, the intelligence of the model is only part of the experience. The rest depends on how fast and reliably packets move.

Why WebRTC matters for AI products

OpenAI’s post emphasizes that WebRTC remains a practical foundation for client-to-server voice AI because it standardizes difficult pieces of interactive media delivery. That includes connectivity establishment and NAT traversal through ICE, encrypted transport through DTLS and SRTP, codec negotiation, quality control via RTCP, and client-side capabilities such as echo cancellation and jitter buffering.
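To make the protocol list above concrete, here is a minimal sketch of how a media server can tell STUN (used by ICE), DTLS, and RTP/RTCP packets apart when they arrive on the same UDP socket. It uses the first-byte ranges defined in RFC 7983. The names `PacketKind` and `classify_packet` are illustrative assumptions and do not come from OpenAI's post.

```python
from enum import Enum


class PacketKind(Enum):
    STUN = "stun"                # ICE connectivity checks
    DTLS = "dtls"                # handshake that keys SRTP
    RTP_OR_RTCP = "rtp_or_rtcp"  # media and its control reports
    UNKNOWN = "unknown"


def classify_packet(datagram: bytes) -> PacketKind:
    """Classify a UDP datagram by its first byte (RFC 7983 ranges)."""
    if not datagram:
        return PacketKind.UNKNOWN
    first = datagram[0]
    if 0 <= first <= 3:
        return PacketKind.STUN
    if 20 <= first <= 63:
        return PacketKind.DTLS
    if 128 <= first <= 191:
        return PacketKind.RTP_OR_RTCP
    # Other ranges (for example ZRTP or TURN channel data) are not handled here.
    return PacketKind.UNKNOWN
```

A real stack would go on to parse each packet fully; this sketch only shows the first dispatch step.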

For a company operating across browsers, mobile apps, and server infrastructure, that standardization reduces fragmentation. Without it, each client environment would need separate solutions for connectivity, encryption, codec support, and network adaptation. By relying on a mature standard and the wider open-source WebRTC ecosystem, OpenAI says it can focus its engineering effort on the infrastructure linking real-time media streams to models rather than rebuilding the entire communications stack from scratch.

That is a practical message for the broader AI industry. Real-time AI is not just about generating audio quickly. It is about integrating established communications protocols with model-serving systems in a way that preserves familiar client behavior while changing what happens deeper in the network.

The scaling constraints that forced a redesign

According to OpenAI, its real-time AI team rearchitected the system because three constraints were beginning to collide at scale. First, one-port-per-session media termination did not fit well with OpenAI’s infrastructure. Second, stateful ICE and DTLS sessions required stable ownership. Third, global routing had to keep first-hop latency low.

Those are deeply operational concerns, but they point to a larger architectural transition. Early or smaller-scale real-time systems can often tolerate designs that become brittle when traffic volumes grow. What works for a modest number of sessions does not necessarily work for millions of concurrent interactions distributed across regions and network conditions.

OpenAI’s answer was what it describes as a split relay plus transceiver architecture. The key idea is to preserve standards-compliant WebRTC behavior from the client’s perspective while changing packet routing inside the company’s infrastructure. In effect, the external interface remains familiar, but the internal path becomes more adaptable to OpenAI’s scale, ownership, and routing needs.
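The article does not say how a relay decides which backend owns a given session. As a purely illustrative sketch, the snippet below uses rendezvous (highest-random-weight) hashing, a generic technique swapped in here and not confirmed as OpenAI's method, to show one way a stateless relay tier could send every packet for a session to the same transceiver. The function name `owner_for_session`, the session key, and the node names are all hypothetical.

```python
import hashlib


def owner_for_session(session_key: str, transceivers: list[str]) -> str:
    """Pick a stable owner for a session using rendezvous hashing.

    session_key is a hypothetical per-session identifier (an assumption,
    for example something derived from ICE credentials).
    """
    if not transceivers:
        raise ValueError("at least one transceiver is required")

    def score(node: str) -> int:
        # Hash the (node, session) pair; the highest score wins.
        digest = hashlib.sha256(f"{node}|{session_key}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return max(transceivers, key=score)
```

Because each relay computes the same scores independently, any relay reaches the same answer without shared state, and removing a node that does not own the session leaves the session's owner unchanged.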

That design choice reflects a pattern common in large infrastructure systems: avoid breaking clients if you can move complexity inward. For developers building on top of voice APIs, the appeal is obvious. Standard behavior at the edge lowers integration friction, while the service provider carries the harder burden of global media orchestration.

Latency is now a product feature

The post also underlines a shift in how voice AI should be evaluated. Latency, jitter, and packet loss are no longer background metrics reserved for network engineers. They are directly tied to product quality. Users perceive them as awkward pauses, delayed interruptions, and broken conversational rhythm.
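For readers who want to see what "jitter" means as a number, the sketch below implements the running interarrival jitter estimate defined in RFC 3550, the RTP specification. The function name and the use of millisecond timestamps are assumptions made for illustration; real RTP stacks compute this in RTP timestamp units.

```python
def interarrival_jitter(send_times_ms, recv_times_ms):
    """Running jitter estimate per RFC 3550: J += (|D| - J) / 16.

    D is the change in transit time between consecutive packets.
    """
    if len(send_times_ms) != len(recv_times_ms):
        raise ValueError("send and receive lists must have equal length")
    jitter = 0.0
    for i in range(1, len(send_times_ms)):
        recv_gap = recv_times_ms[i] - recv_times_ms[i - 1]
        send_gap = send_times_ms[i] - send_times_ms[i - 1]
        d = recv_gap - send_gap
        jitter += (abs(d) - jitter) / 16.0
    return jitter
```

With packets sent every 20 ms and received at a constant delay, the estimate stays at zero. A single packet arriving 10 ms late nudges it to 10/16 = 0.625 ms, which shows how the smoothing damps isolated spikes.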

That matters for several emerging use cases. Consumer voice assistants need to feel responsive enough to sustain natural dialogue. Developers using the Realtime API need audio sessions that start quickly and remain stable under imperfect network conditions. Interactive agents need to listen while a user is speaking, manage barge-in behavior, and respond without feeling detached from the flow of conversation.

OpenAI’s framing suggests that speech interfaces are moving into a phase where infrastructure performance becomes a differentiator. If a model is capable but the transport layer adds instability, the experience still feels poor. The consequence is that systems-level work on routing, session ownership, and media handling is becoming central to AI product design, not secondary to it.

What the disclosure signals

OpenAI’s decision to publish this architecture work is significant in itself. It signals that real-time voice is no longer a niche feature bolted onto text systems. It is now important enough, and large enough, to justify specialized transport engineering and public explanation. The company is effectively saying that conversational AI at global scale requires a networking stack built for speech-first interaction, not merely a powerful model behind an API.

The scale figure in the post, more than 900 million weekly active users, also provides context for why these changes matter. At that level, even small gains in connection setup or media round-trip time can affect enormous numbers of sessions. Reliability is no longer a matter of isolated user frustration; it becomes a platform-wide operating requirement.

For developers and infrastructure teams, the broader lesson is that the next stage of voice AI will be shaped by the convergence of model serving and communications engineering. Better speech interaction depends on both. OpenAI’s redesign does not just describe a faster pipeline. It outlines the growing reality that low-latency voice AI is a systems problem end to end.

If voice interfaces are to feel as immediate as human conversation, the AI industry will have to solve more than inference speed. It will have to solve the network path too. OpenAI’s WebRTC overhaul is an example of that deeper shift from demo-quality voice to production-grade conversational infrastructure.

This article is based on reporting by OpenAI.

Originally published on openai.com