From one image to a navigable 3D world

Nvidia researchers have unveiled Lyra 2.0, a system designed to generate large, coherent 3D environments from a single photograph. The company says the resulting scenes can be explored in real time and exported to simulation platforms such as Isaac Sim, where they can be used for robotics training.

The pitch is ambitious but well aligned with a central problem in modern AI for robotics: training agents in simulation is far easier, cheaper, and safer than training exclusively in the physical world, but useful simulation still depends on building environments that are large, stable, and realistic enough to matter. If a single image can seed a coherent scene extending tens of meters, that could materially reduce the cost of simulation content creation.

According to the report, Lyra 2.0 can generate scenes that span about 90 meters. More important than raw size, though, is the claim that the model addresses two common weaknesses in prior methods: forgetting what it has already generated and accumulating small visual errors that compound into larger distortions over time.

Why long-path 3D generation is hard

Existing AI systems for 3D scene generation often degrade as the virtual camera moves farther from its starting point. Colors drift, geometry changes, and the environment loses consistency. If the camera later returns to an area it has already seen, the model may effectively invent that location again instead of preserving continuity with the earlier view.

For robotics, those failures are not cosmetic. A simulation environment that subtly reshapes itself during exploration is a weak foundation for training embodied systems that depend on stable spatial structure. Navigation, manipulation, and planning all become less trustworthy if the world itself is not persistent.

That is why scene coherence matters more than novelty. A usable training world needs enough consistency that an agent can move through it as though it were a place, not just a stream of plausible images.

How Lyra 2.0 tries to fix the problem

The report says Lyra 2.0 stores 3D geometry for every generated frame. When the virtual camera returns toward a previously visited area, the system retrieves those earlier frames and uses their spatial information as reference material. The image synthesis is still handled by the video model, but the stored geometry is meant to preserve orientation and help maintain continuity.

This design targets the first major weakness of earlier systems: forgetting. If previously seen regions can be recalled and re-grounded through stored geometry, the generated environment has a better chance of remaining coherent over longer trajectories.
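The recall step can be illustrated with a small sketch. This is not Nvidia's implementation: the report does not say how Lyra 2.0 selects which stored frames to retrieve, so the nearest-camera lookup below, along with the names `StoredFrame`, `GeometryMemory`, and the `max_dist` threshold, are assumptions made purely for illustration.

```python
import math
from dataclasses import dataclass, field


@dataclass
class StoredFrame:
    frame_id: int
    camera_pos: tuple  # (x, y, z) camera position in meters
    points: list       # 3D points kept for this frame (hypothetical format)


@dataclass
class GeometryMemory:
    """Toy per-frame geometry store, keyed by where the camera was."""
    frames: list = field(default_factory=list)

    def store(self, frame_id, camera_pos, points):
        self.frames.append(StoredFrame(frame_id, camera_pos, points))

    def retrieve(self, camera_pos, k=2, max_dist=5.0):
        # Assumption: frames captured near the current camera position are
        # the most useful references. Keep those within max_dist meters and
        # return the k closest.
        scored = [(math.dist(camera_pos, f.camera_pos), f) for f in self.frames]
        nearby = [pair for pair in scored if pair[0] <= max_dist]
        nearby.sort(key=lambda pair: pair[0])
        return [f for _, f in nearby[:k]]


memory = GeometryMemory()

# The camera moves away from its starting point, 10 m per generated frame.
for i in range(5):
    memory.store(i, (10.0 * i, 0.0, 0.0), points=[(10.0 * i, 0.0, 2.0)])

# Later the camera returns close to where it started.
refs = memory.retrieve((1.0, 0.0, 0.0))
print([f.frame_id for f in refs])
```

In this toy setup only the first frame lies within range of the returning camera, so it alone is handed back as reference geometry. A real system would presumably pass such references to the video model as conditioning rather than print them.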

The second problem is drift, where small generation errors compound step by step. Nvidia’s answer, according to the report, is to train the model against its own flawed outputs so it learns to recognize and correct degradation instead of simply inheriting it. That is a practical strategy. Rather than pretending generation will be clean, the training process exposes the model to the noise it is likely to create.
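A toy calculation shows why this matters over long trajectories. It models only the effect of drift, not Nvidia's training procedure: the per-step error of 0.01 and the `correction` factor are invented numbers, and `rollout_error` is a hypothetical helper.

```python
def rollout_error(steps, per_step_error, correction):
    """Accumulated error after `steps` autoregressive generation steps.

    correction = 0.0 means each step inherits all of the previous error.
    correction = 0.5 means each step removes half of the inherited error
    before adding its own.
    """
    error = 0.0
    for _ in range(steps):
        error = (1.0 - correction) * error + per_step_error
    return error


# A model that simply inherits its own mistakes.
naive = rollout_error(steps=100, per_step_error=0.01, correction=0.0)

# A model that has learned to partially undo degraded inputs.
aware = rollout_error(steps=100, per_step_error=0.01, correction=0.5)

print(f"no correction:   {naive:.3f}")
print(f"with correction: {aware:.3f}")
```

Without correction the error grows linearly with the number of steps. With even partial correction it levels off near `per_step_error / correction`, which is the behavior a drift-aware model is meant to approximate.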

Benchmark claims and competitive framing

Nvidia says Lyra 2.0 outperformed six competing approaches, including GEN3C, Yume-1.5, and CaM, across benchmark tests on two datasets. The report does not provide the full details of those evaluations, so the competitive claim should be read as a summary rather than a complete technical comparison. Even so, the significance is clear enough: Nvidia is presenting Lyra 2.0 not as a lab curiosity but as a state-of-the-art contender in long-range scene generation.

That framing matters because this is a crowded field. Many groups are working on image-to-3D, video world models, and simulation-friendly generative systems. To stand out, a method has to show not just appealing demos but persistent scene quality under movement.

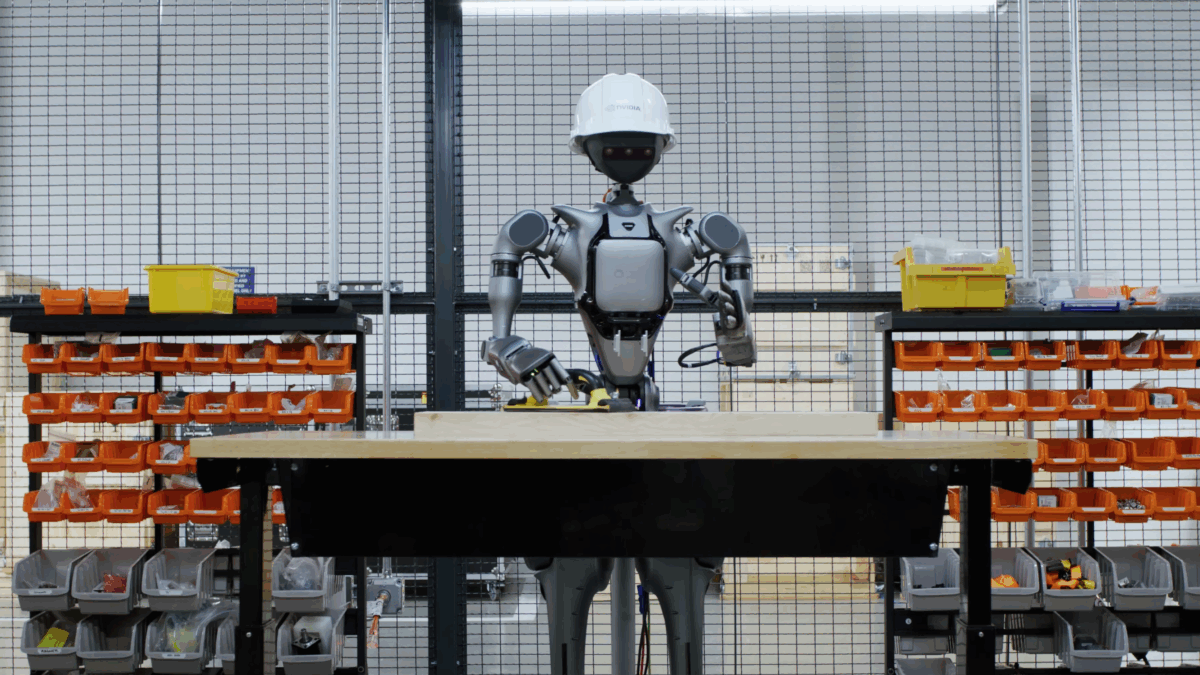

Why robotics is the immediate use case

The direct export path into simulation platforms such as Isaac Sim is one of the most important details in the report. It suggests Nvidia is not merely interested in content generation for visualization or virtual tours. The target is embodied AI.

Robot training often suffers from a data bottleneck. Real-world collection is expensive, and hand-building simulated environments takes time. A system that can generate plausible, explorable 3D spaces from a single photo could help scale training data faster, especially for navigation or interaction tasks where environmental diversity matters.

In practical terms, that could let developers start with sparse visual references and rapidly expand them into usable simulation scenes. The result would not replace real-world validation, but it could broaden the pretraining and testing pipeline.

What this does and does not solve

Lyra 2.0 addresses a real technical obstacle, but it should not be confused with complete physical realism. Generating a coherent scene is one thing. Generating a scene whose geometry, materials, dynamics, and object affordances are accurate enough for robust transfer to real robots is another.

That distinction matters because simulation is only valuable to the extent that behaviors learned there survive contact with reality. Even excellent visual coherence does not automatically guarantee useful physics or correct object interaction. The report acknowledges this indirectly by emphasizing export to simulation platforms, which suggests Lyra 2.0's output is part of a broader simulation stack rather than a full solution by itself.

A step toward scalable world generation

Still, the work is notable because it moves the field toward a more scalable way of building robot training worlds. The combination of long-path coherence, explicit geometry recall, and drift-aware training addresses exactly the issues that have limited earlier systems. If those gains hold up in wider use, Lyra 2.0 could help reduce one of the hidden costs in robotics development: constructing enough worlds for robots to learn in.

That is the deeper significance. Robotics progress is not only about better policies and larger models. It is also about better environments. A robot can only learn from the worlds it sees, and generating those worlds well is becoming an increasingly important AI problem in its own right.

This article is based on reporting by The Decoder.

Originally published on the-decoder.com