A Wearable AI Product Finds a Less-Than-Ideal Use

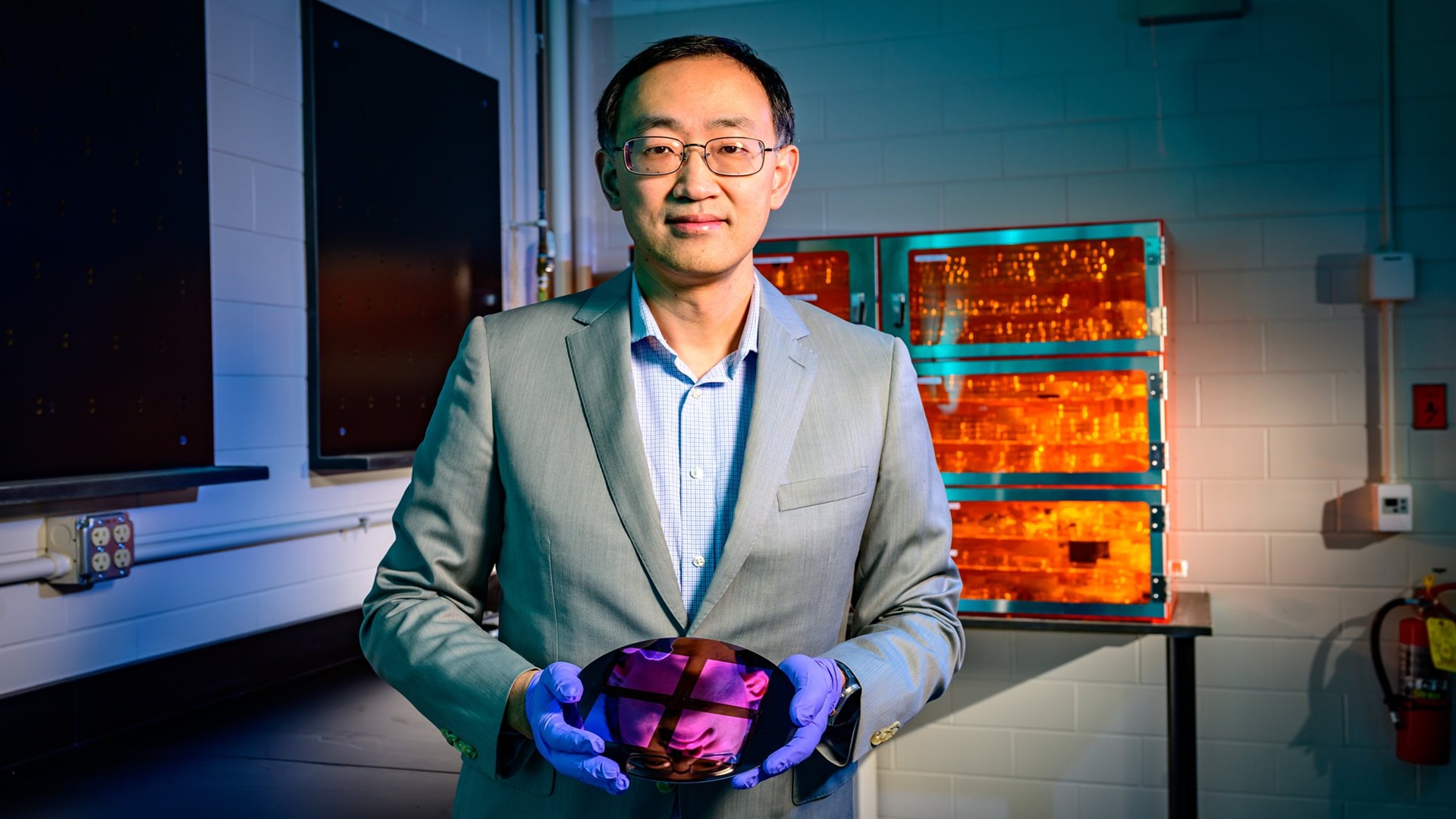

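Smart glasses are increasingly marketed as convenient everyday assistants, but reports from China suggest they are also becoming tools for cheating on exams. According to the Futurism report, a university student identified as Vivian used Rokid AI glasses to scan questions and display the answers on the built-in screen, and later began renting the device out to classmates as a side business.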

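That detail captures the shift well. What might once have been dismissed as isolated misuse now looks more like a small market. On secondhand platforms such as Xianyu, smart glasses can reportedly be rented by the day for the equivalent of roughly $6 to $12, depending on the model. That lowers the barrier for students who do not want to buy the hardware outright but do want to use it during high-stakes exams.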

Why smart glasses change the cheating problem

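Cheating technology is nothing new. What smart glasses change is concealment and speed. According to the report, students can operate the device discreetly with a ring-shaped controller, which helps them answer English and math questions. Because current products can look very much like ordinary glasses, teachers and proctors are unlikely to spot them unless they are specifically looking.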

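The appeal of the hardware is obvious. Smart glasses promise hands-free access to information while looking almost indistinguishable from ordinary eyewear. Add AI, translation, image analysis, or prompt-response capabilities, and such devices are enough to undermine traditional exam supervision. A product category designed around convenience and assistance can quickly become, in an exam setting, a machine for manufacturing unfair advantage.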

Institutions are starting to respond

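China's secondary education system has reportedly begun banning such devices from the national college entrance exam (the gaokao) and from civil service exams. That suggests administrators recognize that the risk arises at the most critical point in the testing process. However, the report also notes that many teachers have not yet caught up with the trend, creating a familiar lag between consumer technology adoption and institutional response.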

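It is in that lag that misuse begins to spread. When a device is visually unobtrusive, easy to obtain, and even rentable, it does not need mass adoption to cause disruption. It only needs enough students to show that the method works. Once that happens, schools shift from routine anti-cheating enforcement to a harder problem: distinguishing, in real time, ordinary wearables from connected or AI-assisted ones.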

The experiment that heightened the concern

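The report mentions an experiment in which researchers at the Hong Kong University of Science and Technology connected OpenAI's GPT-5.2 model to a pair of Rokid glasses and had a student wear them during a high-pressure final exam week. The student reportedly earned a final score of 92.5 in an undergraduate computer communication networks course with more than 100 students.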

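That example alone does not prove the technology is equally effective across all subjects or settings. But it does show why the issue is quickly moving from novelty to policy challenge. If wearable AI can provide substantial help on real exam performance while remaining hard to detect, this is no longer just a hypothetical problem.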

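The broader lesson is uncomfortable but clear. As AI devices keep shrinking and blending into everyday objects, integrity safeguards designed for phones and laptops are no longer enough. Smart glasses are useful for many legitimate tasks. But the same features that make them convenient in daily life also make them extremely powerful in settings where hidden assistance breaks the rules. In the classroom, that means educators now face a new kind of cheat sheet: one that can be worn on the face.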

This article is based on reporting by Futurism.

Originally published on futurism.com