From Circle to Commerce

When Google introduced Circle to Search in January 2024, it arrived as an elegant solution to a persistent problem: the friction of searching for something you see on your phone screen. Instead of taking a screenshot, switching to the browser, opening Google Lens, and uploading the screenshot, users could long-press the home button and circle whatever they wanted to search for (a text snippet, an image, an object, a face) without leaving the app they were in. The feature has since expanded to a wide range of Android devices and has been described as one of the most successful AI-powered features Google has released in the past two years.

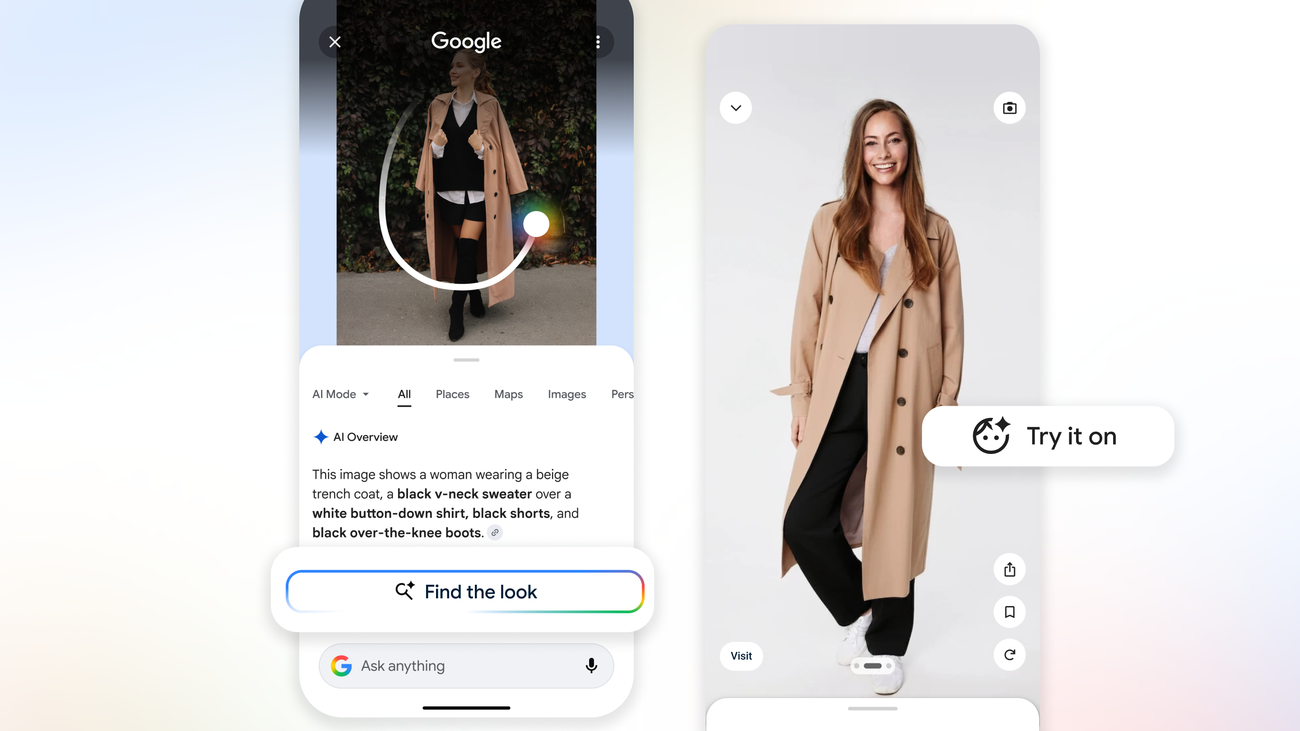

Google is now deepening Circle to Search's capabilities in a direction that significantly expands both its usefulness and its commercial potential. The new set of updates adds what Google calls visual intelligence features: the ability to recognize and search for specific clothing items, home decor items, and consumer products within images, and to surface shopping results that show users where those items are sold, at what price, and in what configuration. Combined with visual search that takes in the whole image, understanding the spatial and contextual relationships between objects in a scene, the updates represent a notable expansion of what Circle to Search can do.

Clothing search: the leading use case

The ability to identify clothing items is the most immediately user-facing of the new features. With it, a user can circle a piece of clothing in an Instagram post, a Pinterest pin, a website image, or a photo taken with their own camera, and get back three kinds of results: an identification of the specific item (when it is a recognizable product), visually similar items from multiple retailers, and current price and availability information. The system uses Google's visual embedding models, the same technology that underlies product search in Google Lens, but integrated natively into the Circle to Search interface and extended to handle partial views, varying lighting conditions, and partially obscured items.
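To make the idea of embedding-based matching concrete, here is a minimal sketch of how "visually similar items" can be ranked once images have been turned into vectors. It is not Google's implementation: the four-dimensional vectors, the `CATALOG` contents, and the function names are all hypothetical, and a real system would use learned embeddings with hundreds of dimensions and an approximate nearest-neighbor index.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def top_matches(query, catalog, k=3):
    """Return the k catalog entries most similar to the query, best first."""
    scored = [(cosine_similarity(query, vec), name) for name, vec in catalog.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(name, score) for score, name in scored[:k]]


# Hypothetical toy embeddings; real ones would come from a vision model.
CATALOG = {
    "denim jacket": [0.9, 0.1, 0.0, 0.2],
    "leather jacket": [0.7, 0.3, 0.1, 0.4],
    "floor lamp": [0.0, 0.1, 0.9, 0.1],
    "wool rug": [0.1, 0.8, 0.3, 0.0],
}

# Hypothetical embedding of the region the user circled.
query = [0.85, 0.15, 0.05, 0.25]

for name, score in top_matches(query, CATALOG, k=2):
    print(f"{name}: {score:.3f}")
```

In this toy catalog the two jackets rank above the lamp and the rug, because their vectors point in nearly the same direction as the query. Cosine similarity ignores vector length, which is one common reason it is chosen for comparing embeddings.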

Anyone who thinks about clothing will recognize the practical use immediately: you see something someone is wearing, want to find it or something similar, and face the laborious process of describing it in a text query. Circle to Search for clothing removes most of that friction. Identification accuracy varies with how distinctive the item is: a designer piece with recognizable branding or details is identified far more easily than a generic solid-color T-shirt. Still, Google's extensive training data, which spans images of billions of products, gives the system a broad recognition base.

Home decor and product recognition

The same visual recognition capabilities extend to home decor and consumer electronics, categories where users frequently encounter products in images (editorial content, social media posts, real estate listings) and want to find them for purchase. Identifying a specific lamp, a particular rug pattern, or a television model from a room photo has historically been hard for image search systems, because these objects often appear at odd angles, under varying lighting conditions, and in partial views that make exact identification difficult.

Google's updated models handle these scenarios more gracefully by reasoning about the object within its visual context, rather than trying to match it as an isolated product image. The system can infer that an object in the background of a room photo is likely to be furniture or decor, bring that prior into the recognition process, and surface results that account for viewing angle and lighting, rather than requiring a clean catalog-style image for exact identification.
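One simple way to picture "bringing a prior into the recognition process" is to reweight appearance-only scores by how plausible each label is in the detected scene, then renormalize. The sketch below illustrates that general idea only. The labels, the numbers, and the `apply_scene_prior` function are hypothetical, and the source does not describe how Google's models actually combine context with appearance.

```python
def apply_scene_prior(appearance_scores, scene_prior):
    """Reweight per-label appearance scores by a scene-level prior.

    Labels missing from the prior get weight 0.0 in this sketch, which
    removes them; a real system would more likely use a small floor value.
    """
    weighted = {
        label: score * scene_prior.get(label, 0.0)
        for label, score in appearance_scores.items()
    }
    total = sum(weighted.values())
    if total == 0.0:
        # The prior ruled out everything; fall back to appearance alone.
        return dict(appearance_scores)
    return {label: value / total for label, value in weighted.items()}


# Hypothetical scores for a tall, thin object seen at an awkward angle.
appearance = {"floor lamp": 0.40, "microphone stand": 0.45, "coat rack": 0.15}

# Hypothetical prior: how likely each label is in a living-room photo.
living_room_prior = {"floor lamp": 0.60, "microphone stand": 0.05, "coat rack": 0.35}

posterior = apply_scene_prior(appearance, living_room_prior)
best = max(posterior, key=posterior.get)
print(best, round(posterior[best], 3))
```

On appearance alone the top label here is the microphone stand. Once the living-room prior is applied, the floor lamp becomes the most likely label, which is the kind of correction the paragraph above describes.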

The commercial dimension

It would be naive to analyze these updates without acknowledging their commercial dimension. Google's core advertising business depends on connecting user intent with commercial opportunities, and visual search represents a large untapped surface for that connection. When a user circles a product in an image, that is an expression of purchase intent, and it is far more specific and actionable than most text searches. Being able to surface shopping results immediately from that intent, inside the apps users are already in rather than requiring them to navigate to Google, is extremely valuable from an advertising and commerce perspective.

Google Shopping has been a significant revenue contributor for years, and integrating Circle to Search with shopping results essentially turns any image on an Android device into a potential commercial touchpoint. The company frames this as a user benefit (finding what you want more easily), and for most uses that framing is accurate. But the alignment between user convenience and Google's commercial interests is not accidental. It is worth noting that the visual AI improvements most capable of driving commerce at scale are also the ones given the most prominent place in Google's product announcements.

Looking ahead

The Circle to Search updates are part of a broader evolution in Google's on-device AI capabilities. As Gemini Nano and related models become powerful enough to run directly on mobile hardware, tasks that previously required sending data to Google's servers can be processed locally, with implications for latency and privacy. Google has indicated that some parts of Circle to Search's visual processing will move toward on-device execution as model capability improves, which could let the feature work offline and reduce the data transfer associated with visual searches. For now, the combination of cloud and on-device processing gives Circle to Search a capability profile that would be difficult to match without access to training data and infrastructure at Google's scale.

This article is based on reporting from the Google AI Blog.

Originally published on blog.google