A long-running cosmology problem remains unresolved

One of modern cosmology’s most persistent tensions has survived another major test. According to a new report highlighted by Live Science, researchers have combined decades of data into what the article describes as the most thorough dataset yet, and the result still does not reconcile competing measurements of how fast the universe is expanding.

The issue centers on the Hubble constant, the value that describes how fast the universe is expanding today. One route to it is the cosmic distance ladder, built from measurements of relatively nearby objects. Another infers it from early-universe observations, such as the cosmic microwave background, interpreted through the standard cosmological model. In principle, these strategies should converge on the same answer. In practice, the ladder-based values have consistently come out higher, and the disagreement has become one of the field’s defining puzzles.

The new study does not appear to resolve that tension. Instead, the report says it reinforces the idea that something is missing from the current picture. That conclusion is important because it pushes the discussion beyond the possibility that the discrepancy is merely a statistical fluke or an artifact of limited data.

Why this mismatch matters

At stake is more than a single number. If the universe’s expansion rate cannot be consistently derived from different observational routes, then either one or more of the measurements contain an unrecognized problem, or the standard model of cosmology is incomplete in a meaningful way.

The Live Science report describes this as a “central crisis in cosmology,” and that framing captures why the issue has drawn so much attention. Cosmology depends on connecting early-universe physics, large-scale structure, and nearby observations into one coherent account. When those pieces stop lining up, the stress falls on the whole framework, not just on one niche subfield.

A persistent mismatch can point to new physics, overlooked systematic errors, or both. The new report strengthens the case that researchers are not simply dealing with a temporary measurement nuisance.

The role of the distance ladder

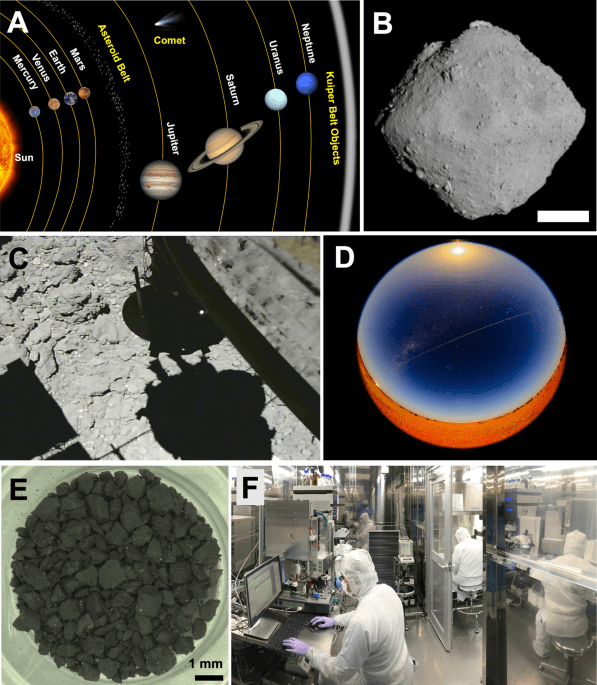

The original article includes an illustration of the cosmic distance ladder, a foundational method for estimating the expansion rate. The ladder links different classes of astronomical objects across increasing distances, forming a chain from nearby calibrators to far more distant markers. Geometric parallax, for instance, calibrates Cepheid variable stars, and Cepheids in turn calibrate Type Ia supernovae that can be seen much farther away. It is one of the classic tools of observational cosmology.

Because the distance ladder involves several steps, critics have long asked whether the tension could emerge from calibration issues or hidden biases accumulating along the way. But the significance of a comprehensive new synthesis is that it can test whether the mismatch persists even after many datasets and refinements are brought together.

According to the report, it does. That does not automatically prove the standard model is wrong, but it does make the “something’s missing” interpretation harder to dismiss.

From anomaly to research agenda

Scientific anomalies are common; not all of them lead to new theory. What turns an anomaly into a serious research agenda is persistence. By that standard, the expansion-rate tension has earned its status. Repeated efforts to reduce uncertainties and cross-check methods have not erased the discrepancy.

The newly reported dataset appears to continue that trend rather than reverse it. That is why the result matters even though it is, in one sense, a non-resolution. Science advances not only when a mystery is solved but also when the set of plausible easy explanations narrows. A stronger dataset that still refuses to fit the model is a form of progress because it sharpens the problem.

Researchers can then focus on a more targeted set of possibilities, whether those involve hidden observational biases, refinements to cosmic evolution, or genuinely new ingredients in the theory.

Why caution is still warranted

The report supports a broad conclusion but not every detail of the underlying study. It indicates that a comprehensive analysis combining decades of work still finds that the standard model cannot fully account for the observed expansion-rate discrepancy. As summarized, however, it does not specify the exact datasets, numerical results, or favored explanation.

That means the safest reading is not that cosmology has been overturned, but that the pressure on the standard picture remains real and possibly growing. In frontier science, that distinction matters. Strong evidence of incompleteness is not the same as a definitive replacement theory.

The significance of an enduring mismatch

Even with that caution, the takeaway is substantial. A mature field has spent years improving measurements, comparing techniques, and testing whether the disagreement could be explained away. The fact that a more comprehensive dataset still leaves the problem standing suggests that the universe is not yet done surprising us.

For cosmologists, this is both frustrating and productive. Frustrating, because one of the discipline’s central parameters remains unsettled. Productive, because unresolved tensions are often where the next major advances begin. If there is an essential component missing from the current model, then the expansion problem may be one of the clearest places to look for it.

That is why this result resonates beyond astronomy headlines. It suggests that even after decades of precision cosmology, the deepest large-scale story about how the universe evolves may still be incomplete.

This article is based on reporting by Live Science.

Originally published on livescience.com