Recall’s Security Story Just Got More Complicated Again

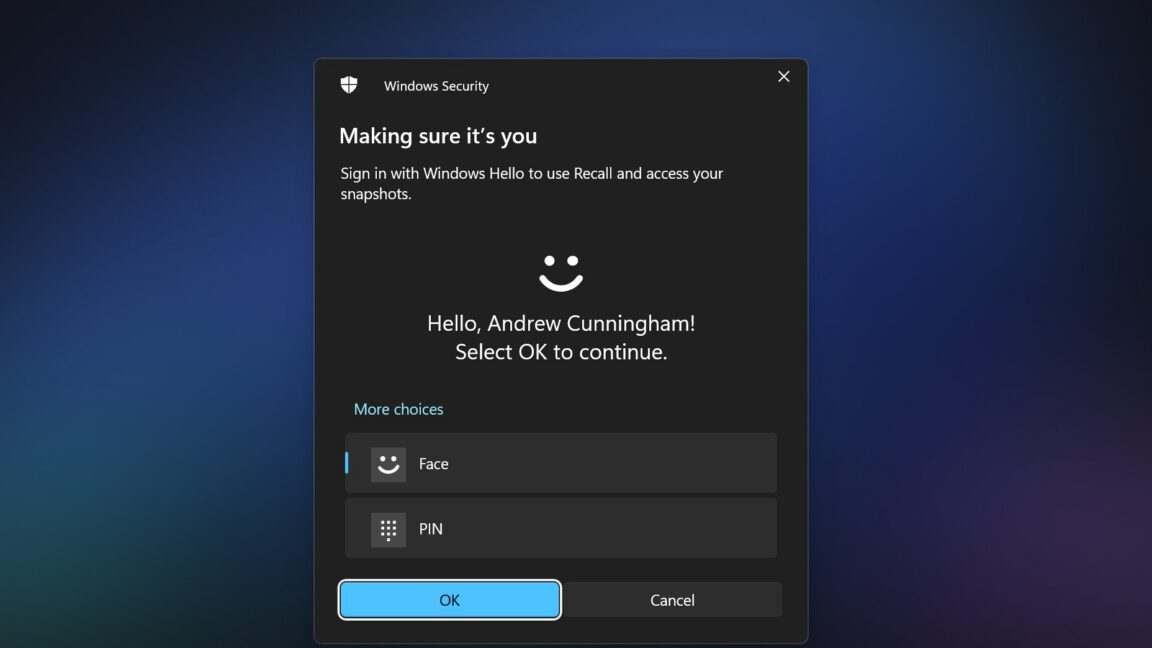

Microsoft’s Recall feature was already one of the most controversial ideas in the early Copilot+ PC era. It promised an AI-assisted memory layer that could track a user’s activity through screenshots and searchable history, but its first implementation stored highly sensitive material in unencrypted local files. After heavy criticism from journalists and security researchers, Microsoft delayed the rollout, rebuilt key protections, encrypted the stored data, improved filtering for sensitive content, and made the feature opt-in rather than universally enabled.

That overhaul appeared to answer the most immediate criticism. But a newly updated tool, TotalRecall Reloaded, now points to a different class of risk. According to the report, the tool does not break the protected Recall database directly. The problem appears after a user authenticates with Windows Hello. At that point, Recall data is passed to another system process, AIXHost.exe, which does not benefit from the same protections. The researcher behind the tool, Alexander Hagenah, summed up the problem with a metaphor: the vault may be strong, but the delivery path is not.

The Weakness Is in the Workflow, Not the Storage Layer

That distinction is important because it changes the debate. Microsoft’s response to the first Recall backlash focused heavily on storage security: encryption, authentication, better exclusion of sensitive material, and default-off behavior. Those measures matter. But the new finding suggests that securing an AI feature cannot stop at how data sits at rest. It also has to cover how data moves through the system during ordinary use.

In other words, Recall may be harder to loot from disk than it was before, yet still vulnerable at the point where protected data becomes usable. That is a familiar problem in security engineering. Systems often look strongest in static architecture diagrams and weakest in operational transitions: authentication, decryption, inter-process communication, and temporary access states. For a feature built around capturing large amounts of personal computing history, those transition points are exactly where the risk profile becomes most sensitive.

The reporting also makes clear why Recall remains uniquely controversial even after Microsoft improved it. This is not just another application cache or browser history. It is a system designed to record broad swaths of PC activity so that the activity can be retrieved later. A partial compromise can therefore expose far more context than users would expect a single weak link to leak.

Why This Matters Beyond Recall

The Recall episode is becoming a case study for a wider industry problem: AI features that feel convenient at the product layer often accumulate risk at the systems layer. The promise is straightforward enough. Local AI, supported by NPUs, should allow sensitive features to stay on-device rather than in a remote cloud. But local processing does not automatically mean local safety. It means the threat model shifts. Attackers no longer need to intercept cloud traffic if they can target the endpoint, the user session, or the software path that transports decrypted information after authentication.

That dynamic is likely to recur as operating systems absorb more model-driven features. Search, summarization, activity history, predictive assistance, and agentic tooling all require some combination of data collection, interpretation, and retrieval. If those systems are going to be trusted, vendors will need to secure the full lifecycle of access, not merely the database at rest.

The updated Recall criticism does not erase Microsoft’s earlier improvements. By the report’s own account, the database protections are much stronger than before. But it does reinforce a harder truth: when a feature is designed to remember nearly everything, the tolerance for implementation gaps is extremely low. Each new workaround or process weakness revives the same underlying concern from a different angle.

- Microsoft previously overhauled Recall after its original storage approach was found insecure.

- The new tool reportedly targets the point after Windows Hello authentication, when Recall data is handed to AIXHost.exe.

- The incident underscores how AI features can remain risky even after stronger encryption and opt-in controls are added.

Recall may yet become a stable part of Windows. But if it does, it will be because Microsoft proves not only that the memory is protected, but that every step required to access that memory is protected too.

This article is based on reporting by Ars Technica.

Originally published on arstechnica.com