What if the heat that electronics usually try to shed could do useful work?

That is the premise behind a new analog computing approach reported by a team led by researchers at MIT’s Institute for Soldier Nanotechnologies. Instead of treating waste heat as an unwanted byproduct, the researchers used it as the information carrier itself.

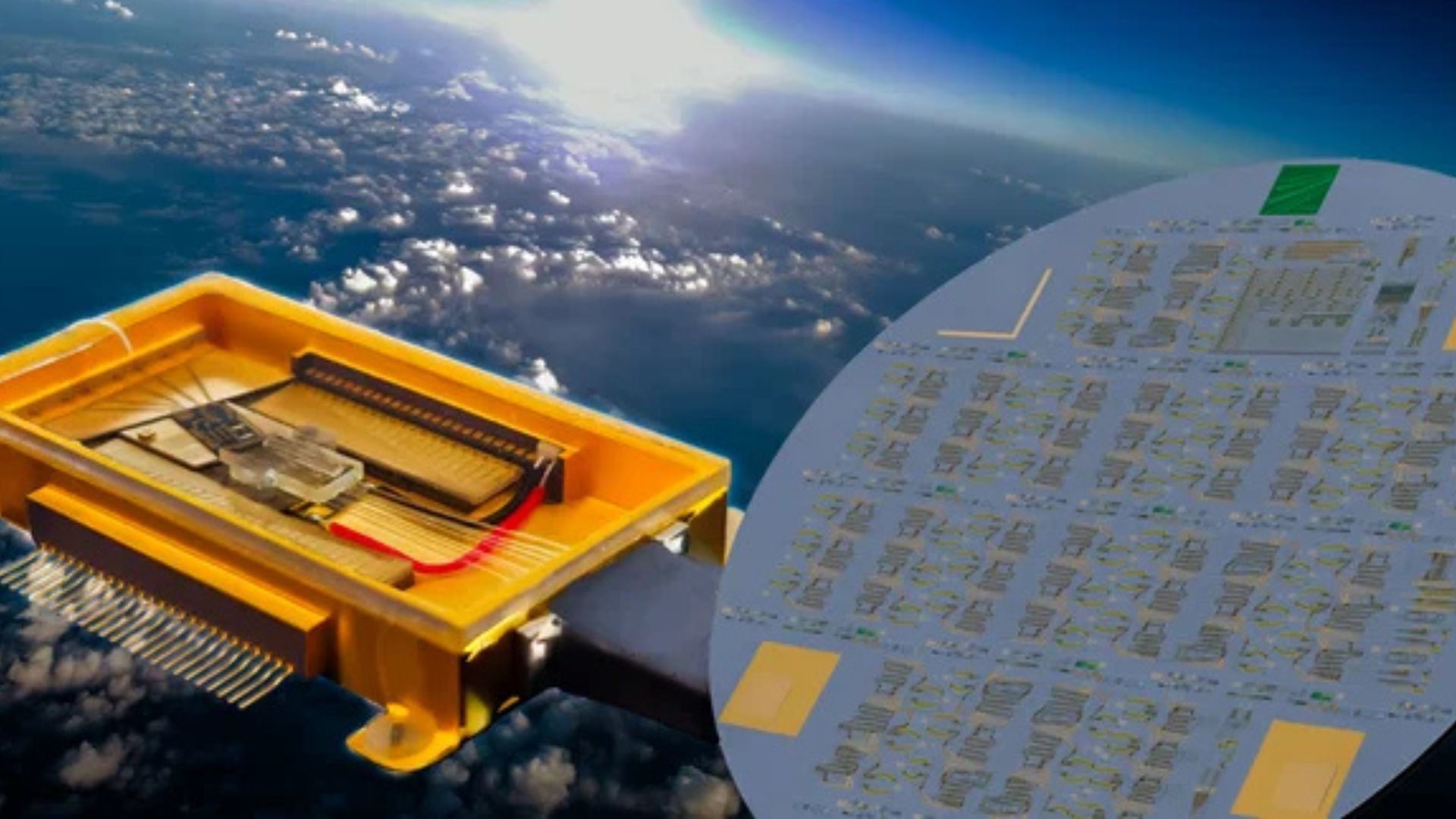

In the system described in the source report, input data is not encoded as electrical binary values. It is represented as a set of temperatures based on heat already present in a device. That thermal information moves through microscopic silicon structures whose geometry is designed by a physics-based optimization algorithm. The resulting distribution and flow of heat performs the calculation, while the output is represented by the power collected at the other end.
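One way to picture why this can amount to a calculation is a simplified steady-state model. It is an assumption made here for illustration, not a description taken from the source report. If heat conduction through the structure is linear and every output terminal is held at a common reference temperature, the power $q_j$ collected at output $j$ is a weighted sum of the input temperatures $T_i$ (measured relative to that reference), with weights $G_{ji}$ fixed by the geometry:

$$
q_j = \sum_{i} G_{ji}\, T_i, \qquad \text{or in vector form} \qquad \mathbf{q} = G\,\mathbf{T}
$$

The symbols $q_j$, $T_i$, and $G_{ji}$ are illustrative names rather than notation from the source. Under these assumptions, designing the geometry amounts to choosing the entries of $G$.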

It is a striking inversion of conventional logic. Most modern computing systems work electrically and then struggle with the heat they produce. This work asks whether some classes of computation might instead piggyback on that heat, potentially reducing the need for extra energy input in specific applications.

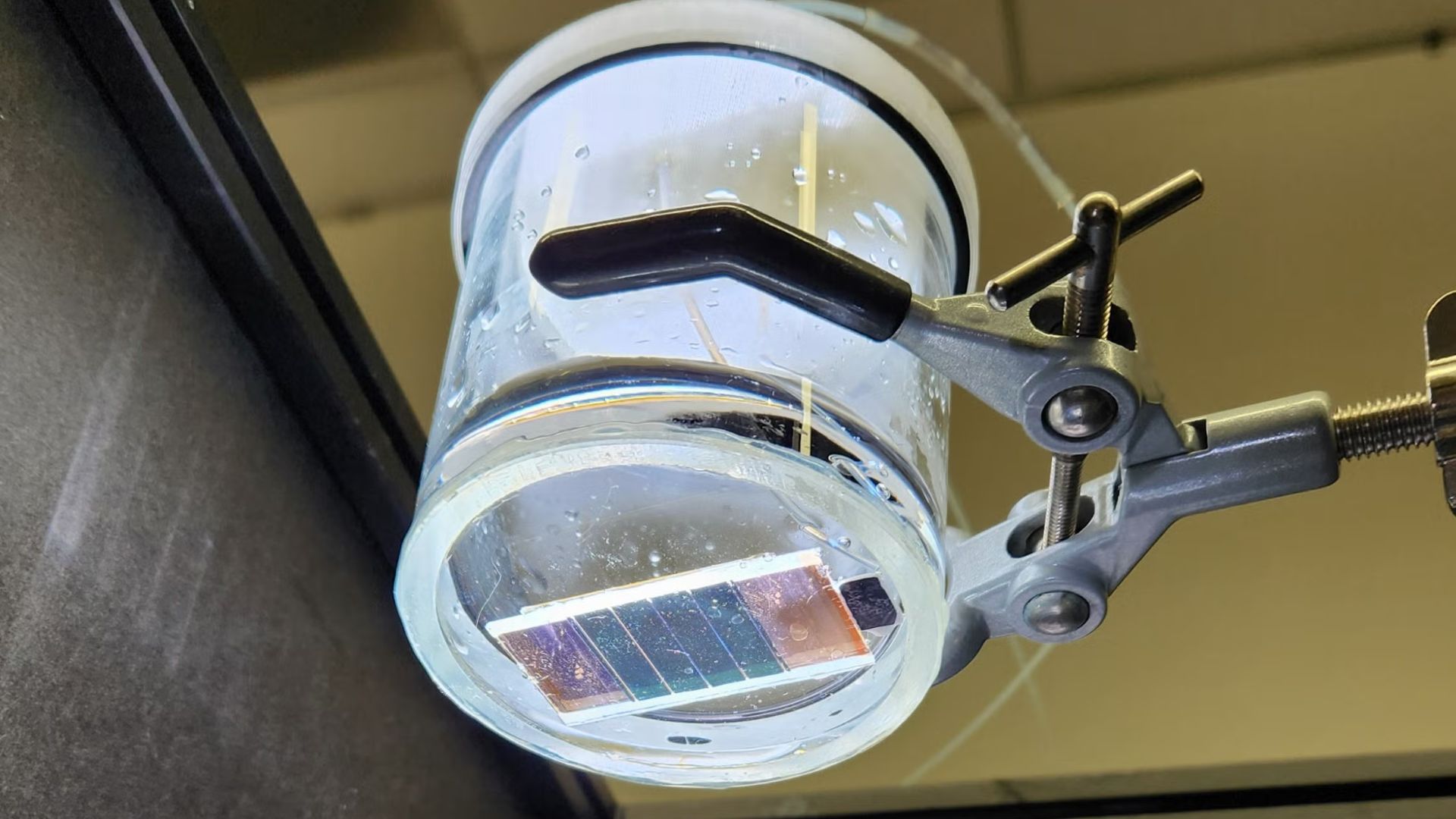

The researchers demonstrated a core operation used in machine learning

The team used the silicon structures to carry out a simple form of matrix-vector multiplication, a mathematical operation that sits at the core of machine-learning systems, including large language models. According to the source text, the results were more than 99 percent accurate in many cases.
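As a rough illustration of the operation itself, the sketch below computes a small matrix-vector product in plain Python and scores a perturbed result against the ideal one. The matrix, the input values, the 0.5 percent perturbation, and the accuracy metric (one minus the relative L2 error) are all assumptions chosen for illustration. The source report does not say how the researchers measured accuracy.

```python
def matvec(matrix, vector):
    """Return the matrix-vector product as a list of floats."""
    if any(len(row) != len(vector) for row in matrix):
        raise ValueError("each row must have as many entries as the vector")
    return [sum(a * x for a, x in zip(row, vector)) for row in matrix]


def relative_accuracy(measured, ideal):
    """Return 1 minus the relative L2 error of measured against ideal.

    This metric is an assumption for illustration, not the source's definition.
    """
    norm = sum(i ** 2 for i in ideal) ** 0.5
    if norm == 0:
        raise ValueError("ideal output must be non-zero")
    err = sum((m - i) ** 2 for m, i in zip(measured, ideal)) ** 0.5
    return 1.0 - err / norm


# Hypothetical 2x3 weight matrix and three input values (arbitrary units).
W = [[0.2, 0.5, 0.3],
     [0.6, 0.1, 0.3]]
temps = [10.0, 20.0, 30.0]

ideal = matvec(W, temps)

# Stand-in for an imperfect analog readout: each output is off by 0.5 percent.
measured = [ideal[0] * 1.005, ideal[1] * 0.995]

acc = relative_accuracy(measured, ideal)
print("ideal output:   ", ideal)
print("measured output:", measured)
print("accuracy:       ", acc)
```

A digital processor evaluates `matvec` with explicit multiplications and additions. In the thermal approach described above, the same weighted sums would be produced by the physics of heat flow, and a score like `acc` is one plausible way to compare the physical result with the ideal one.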

That accuracy is noteworthy because matrix operations are exactly the sort of repetitive linear algebra that dominates many AI workloads. In principle, any new method that can perform them efficiently attracts attention. But the researchers are careful not to overstate what they have built.

The source report makes clear that the technique is far from ready to scale into the kind of enormous systems used in modern deep learning. Tiling millions of these thermal structures together would present substantial engineering challenges. Accuracy also declines as the matrices become more complicated and as the distance between input and output terminals grows.

So this is not a near-term replacement for digital AI accelerators. It is better understood as a demonstration that thermal analog computation can be made real and accurate under constrained conditions.

Why analog heat-based computing is interesting at all

The appeal lies in the energy budget. If a device is already generating heat, and that heat can be harnessed for sensing or signal processing, then part of the computational burden shifts onto an existing physical byproduct instead of requiring additional electrical work.

That could matter in edge devices, embedded electronics, and systems where thermal management is already a major design concern. Instead of adding extra circuitry to measure or process temperature-related information, a chip might use heat flow directly as the operating substrate for certain tasks.

The source report highlights one especially immediate possibility: thermal sensing. The researchers say the technique could help detect problematic heat sources and measure temperature changes in electronics without consuming extra energy. It could also reduce the need for multiple temperature sensors that occupy valuable chip area.

That may prove to be the more realistic first application. Revolutionary computing paradigms often find their earliest value not by replacing mainstream processors, but by solving a narrower and more urgent problem better than existing tools.

A different view of heat inside electronics

Modern chip design usually treats heat as an engineering constraint. Excess heat degrades performance, shortens component life, and imposes cooling costs. The dominant goal is therefore to minimize it, move it, or dissipate it.

This research adopts the opposite stance. Lead author Caio Silva, cited in the source text, notes that heat is normally the waste product of electronic computation. Here, the team uses heat as the information itself.

That shift is conceptually important. It suggests that thermal behavior inside devices is not only a problem to manage but also a resource that can be shaped. The silicon structures are not generic channels. They are optimized geometries designed so that thermal flow implements a desired transformation.

In effect, the material layout becomes part of the computation. Once fabricated, the structure physically constrains how heat spreads, allowing the device to solve a prescribed operation through its own thermodynamics.
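A toy model can make the idea of layout as computation concrete. The sketch below is a hypothetical lumped network, not the optimized silicon geometry from the research: each input terminal connects to one shared internal node through a thermal conductance, and that node connects to each output terminal, which is held at a reference temperature of zero. All names and values are assumptions for illustration. Changing the conductances, which stand in for the physical layout, changes the linear map from input temperatures to output powers.

```python
def effective_matrix(a, b):
    """Linear map from input temperatures to output powers for a star network.

    a[i]: conductance from input terminal i to the shared internal node.
    b[j]: conductance from the internal node to output terminal j.
    Output terminals are assumed to sit at the reference temperature 0.
    """
    total = sum(a) + sum(b)
    # Energy balance at the internal node gives
    #   T_mid = sum(a[i] * T[i]) / total,
    # and the power reaching output j is b[j] * T_mid.
    return [[bj * ai / total for ai in a] for bj in b]


def output_power(a, b, temps):
    """Apply the network's effective matrix to a list of input temperatures."""
    matrix = effective_matrix(a, b)
    return [sum(m * t for m, t in zip(row, temps)) for row in matrix]


# Two hypothetical layouts that differ only in their input conductances.
layout_one = ([1.0, 3.0], [2.0, 2.0])
layout_two = ([3.0, 1.0], [2.0, 2.0])
temps = [8.0, 4.0]  # input temperatures above the reference, arbitrary units

power_one = output_power(*layout_one, temps)
power_two = output_power(*layout_two, temps)
print("layout one:", effective_matrix(*layout_one), "->", power_one)
print("layout two:", effective_matrix(*layout_two), "->", power_two)
```

A single internal node can only produce very simple matrices, since every row of the effective matrix is proportional to the same vector. That limitation is one intuition for why richer operations would call for carefully designed geometries instead of a generic channel.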

The limits are real, but so is the opportunity

Many experimental computing ideas stumble on the gap between a clever proof of concept and a manufacturable, scalable platform. This work plainly faces that gap. The source report notes problems with scaling, complexity, and distance-related loss of accuracy. Those are not peripheral details. They define the difference between a laboratory result and a commercially viable architecture.

Still, the research has several qualities that make it worth watching. First, it demonstrates high accuracy in at least some matrix operations. Second, it relies on microscopic silicon structures, meaning it is grounded in materials and fabrication approaches already familiar to the semiconductor world. Third, it targets a growing bottleneck: how to sense, manage, and possibly exploit the thermal behavior of increasingly dense electronics.

Even if heat-based analog computing never becomes a general-purpose AI engine, it could carve out a role in co-processors, on-chip diagnostics, or specialized low-energy signal-processing functions.

Why this matters in the broader computing landscape

The significance of the work is less about replacing digital computing than about broadening the menu of what counts as computation. As AI and other data-intensive workloads drive up energy demand, researchers are revisiting analog, photonic, neuromorphic, and other unconventional architectures in search of efficiency gains.

This MIT-led effort fits squarely into that trend. It proposes that thermal energy, usually treated as loss, might be partially reclaimed as function. In an era when every watt on a chip matters, that idea has practical as well as philosophical appeal.

The source report does not promise a near-future processor that runs giant language models on waste heat alone, and it should not be read that way. What it does offer is a credible proof that heat can be encoded, directed, and interpreted as information with high accuracy in selected tasks.

That may be enough to open a new line of research. Computing history is full of examples where an initially narrow technique became valuable because it solved one difficult problem unusually well. Waste-heat computing may follow that path. Its first impact could come not from replacing the processor, but from turning the chip’s hottest liability into a new tool.

This article is based on reporting by MIT Technology Review.

Originally published on technologyreview.com