What if the heat that electronics try to get rid of could do useful work instead?

That is the idea behind a new analog computing method presented by a team led by researchers at MIT's Institute for Soldier Nanotechnologies. Instead of treating waste heat as an unwanted byproduct, the researchers used it as the information carrier.

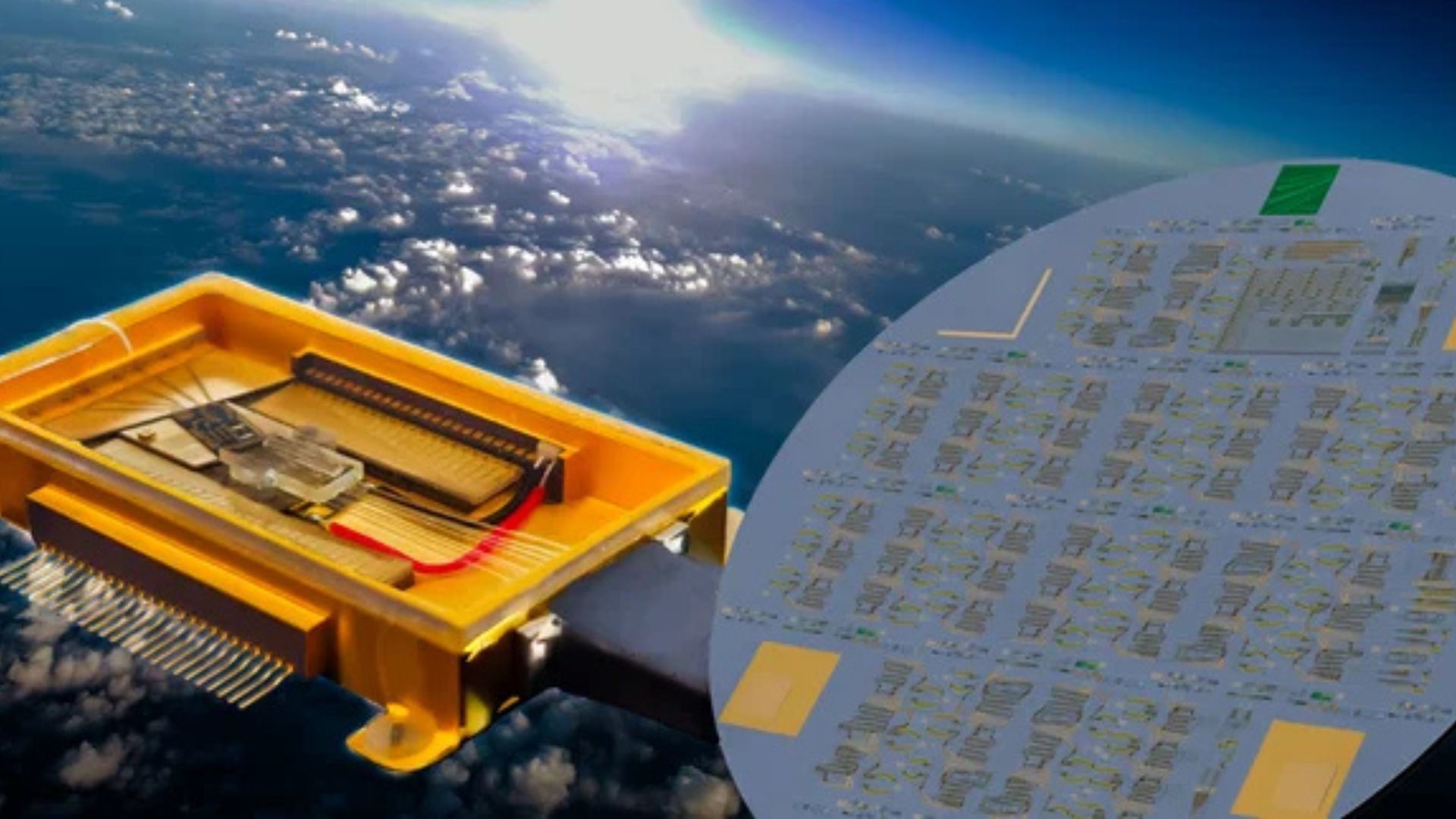

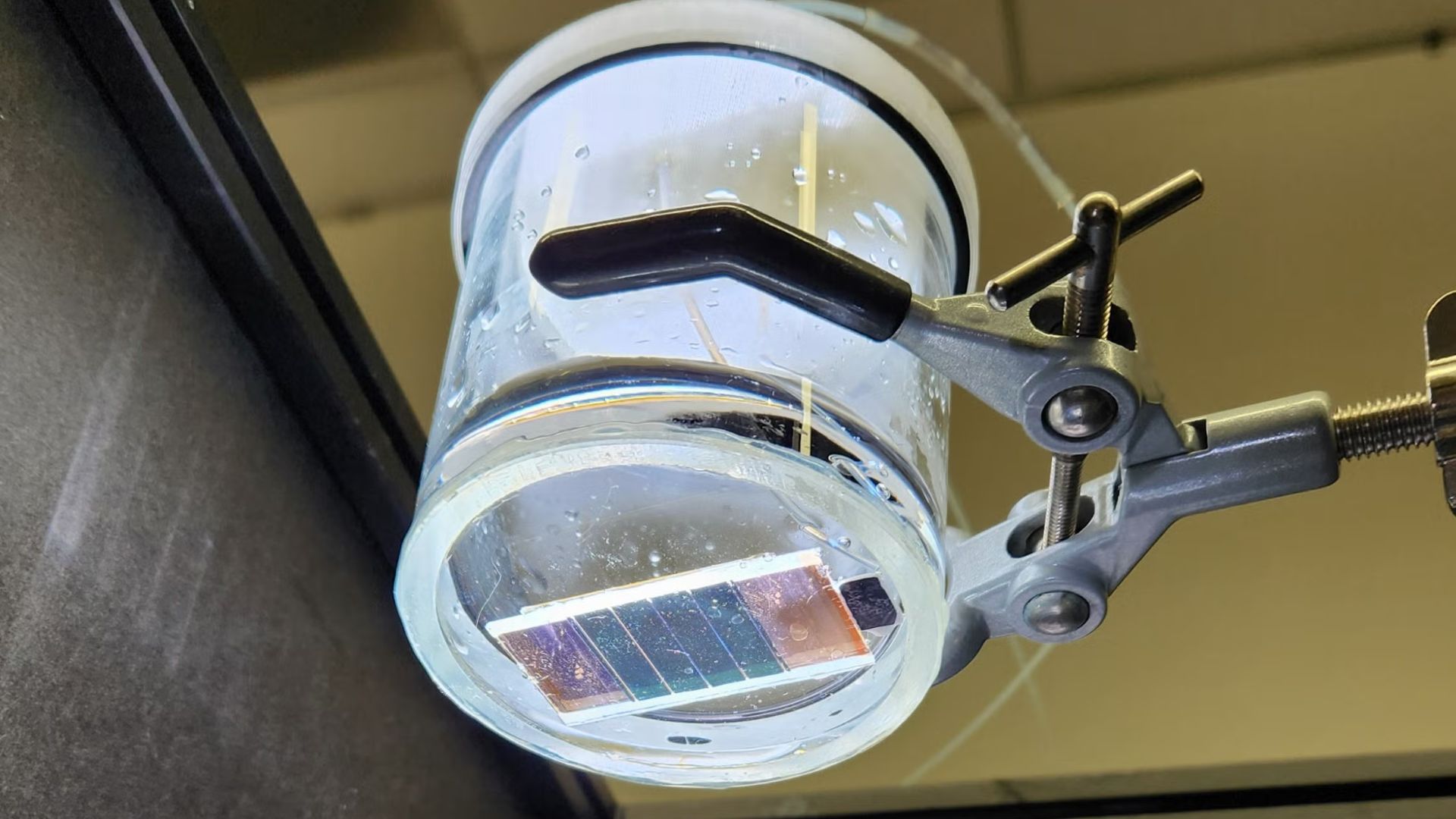

In the system described in the source report, input data is not encoded as electrical binary values. Instead, it is represented as a set of temperatures, drawing on heat already present in the device. This thermal information passes through microscopic silicon structures whose geometry is determined by a physics-based optimization algorithm. The resulting distribution and flow of heat performs the computation, and the output is represented as the power collected at the other end.

This is a notable inversion of conventional logic. Most modern computing systems operate electrically and then contend with the heat they generate. This work asks whether certain kinds of computation can instead ride on that heat, potentially reducing the need for additional energy input in specific applications.

Researchers demonstrated a core operation used in machine learning

The team used the silicon structures to perform a simple form of matrix-vector multiplication, a mathematical operation at the heart of machine-learning systems, including large language models. According to the source text, the results were more than 99 percent accurate in many cases.
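To make the idea concrete, here is a minimal sketch of how heat flow can stand in for a matrix-vector product. It is a toy model and not the researchers' design: it assumes steady-state linear conduction, input terminals held at fixed temperatures, output terminals held at a common reference temperature, and a direct thermal conductance between every input and output. The function name `thermal_matvec`, the parameter `ref_temp`, and the numeric values are all hypothetical.

```python
import numpy as np


def thermal_matvec(conductance, input_temps, ref_temp=0.0):
    """Toy model: heat power collected at each output terminal.

    conductance[j, i] is an assumed thermal conductance (W/K) linking
    input terminal i to output terminal j. The matrix plays the role of
    the fixed geometry; the input temperatures (K) play the role of the data.
    """
    g = np.asarray(conductance, dtype=float)
    t = np.asarray(input_temps, dtype=float)
    if np.any(g < 0):
        # Passive thermal conductances cannot be negative, so this toy
        # model only represents matrices with non-negative entries.
        raise ValueError("thermal conductances must be non-negative")
    # Linear conduction: q_j = sum_i G[j, i] * (T_i - T_ref)
    return g @ (t - ref_temp)


if __name__ == "__main__":
    G = [[0.5, 1.0],
         [2.0, 0.0]]          # hypothetical conductances, W/K
    T_in = [310.0, 300.0]     # hypothetical input temperatures, K
    print(thermal_matvec(G, T_in, ref_temp=300.0))  # collected power, W
```

With these made-up numbers, only the first input sits 10 K above the reference, so the two outputs collect 5 W and 20 W, which is the first column of the matrix scaled by 10. In a real device the matrix would be set by the optimized silicon geometry rather than by discrete links.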

That accuracy is notable because matrix operations are the repeated linear algebra that dominates many AI workloads. In principle, any new method that can perform them efficiently attracts attention. But the researchers are careful in describing what they have built.

The source report makes clear that this technology is still far from scaling to the massive systems used in modern deep learning. Tiling millions of these thermal structures together would pose significant engineering challenges. Accuracy also drops as the matrices become more complex and as the distance between input and output terminals grows.

It is therefore not a near-term alternative to digital AI accelerators. It is better understood as a demonstration that thermal analog computation can be made real and accurate under limited conditions.

Why analog heat-based computing is drawing interest

The appeal lies in the energy argument. If a device is already producing heat, and if that heat can be used for sensing or signal-processing tasks, then part of the computational load can be shifted onto an existing physical byproduct rather than demanding additional electrical work.

This could matter for edge devices, embedded electronics, and systems where thermal management is already a major design concern. Rather than adding extra circuitry to measure or process temperature-related information, a chip could use heat flow directly as the basis of operation for some tasks.

The source report highlights one especially immediate possibility: thermal sensing. The researchers say the technique could help detect problematic heat sources and measure temperature changes in electronics without spending additional energy. It could also reduce the need for the many temperature sensors that occupy valuable chip area.

That may prove to be a more realistic first application. Revolutionary computing paradigms often find their early usefulness not in replacing mainstream processors, but in solving a narrower, more immediate problem better than existing tools do.

A different view of heat inside electronics

Modern chip design typically treats heat as an engineering constraint. Excess heat degrades performance, shortens component life, and imposes cooling costs. The primary goal is therefore to reduce, remove, or dissipate it.

This research takes the opposite view. Lead author Caio Silva, quoted in the source text, says that heat is normally a waste product of electronic computation. Here, the team uses heat itself as information.

The shift is conceptually significant. It suggests that thermal behavior inside devices is not only a problem to be managed but also a resource that can be shaped. The silicon structures are not generic channels. They are optimized geometries designed so that thermal flow applies a desired transformation.

In practice, the layout of the material becomes part of the computation. Once fabricated, the structure physically governs how heat spreads, letting the device solve a fixed operation through its own thermodynamics.

The limits are real, but so is the opportunity

Many experimental computing ideas fail in the gap between a clever proof of concept and a manufacturable, scalable platform. This work clearly faces that same gap. The source report notes problems of scaling, complexity, and distance-induced accuracy loss. These are not minor details. They determine the difference between a laboratory result and a commercially viable architecture.

Even so, the research has several qualities that make it worth watching. First, it shows high accuracy on at least some matrix operations. Second, it is based on microscopic silicon structures, meaning it rests on materials and fabrication approaches already familiar to the semiconductor world. Third, it targets a growing bottleneck: how to sense, manage, and possibly make use of the thermal behavior of increasingly dense electronics.

Even if heat-based analog computing never becomes a general-purpose AI engine, it could play a role in co-processors, on-chip diagnostics, or specialized low-energy signal-processing tasks.

Why it matters in the broader computing landscape

The significance of this work lies less in replacing digital computing and more in expanding what counts as computation. As AI and other data-intensive workloads drive up energy demand, researchers are revisiting analog, photonic, neuromorphic, and other unconventional architectures in search of efficiency gains.

This MIT-led effort fits squarely within that trend. It proposes that thermal energy, usually regarded as a loss, can be partly converted into useful work. In an era when every watt on a chip matters, the idea has philosophical appeal as well as practical value.

The source report does not promise that a processor running massive language models on waste heat alone will arrive anytime soon, and it should not be read that way. What it offers is credible evidence that heat can be encoded, directed, and interpreted as information with high accuracy on selected tasks.

That may be enough to open a new direction of research. Computing history is full of examples in which an initially narrow technology became valuable because it solved one hard problem unusually well. Waste-heat computing could follow the same path. Its first impact may come not from replacing the processor, but from turning the chip's hottest liability into a new tool.

This article is based on reporting by MIT Technology Review.

Originally published on technologyreview.com