The Challenge of Visual Search at Scale

When you point your phone's camera at an object and ask Google what it is, the question looks simple. Behind the scenes, the system faces a genuinely hard problem: visual queries are inherently ambiguous in ways that text queries are not. Someone who photographs a plant could want its species name, care instructions, toxicity information, or a place to buy it, and the image itself provides no explicit signal about which answer the user wants.

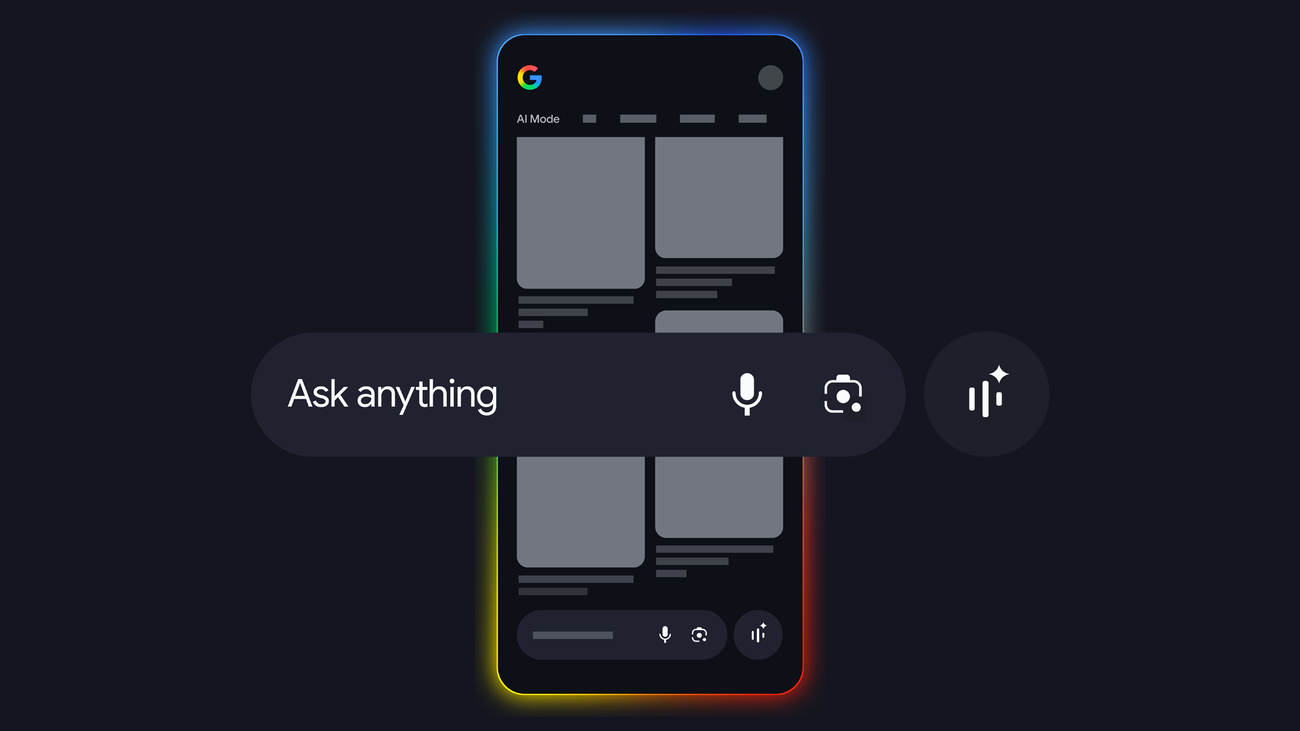

Google's approach to resolving that ambiguity is query fan-out, the technique that lies at the heart of AI Mode's visual search capabilities. Rather than treating a visual query as a single lookup, the system generates a family of related queries derived from the image, runs them simultaneously, and synthesizes the results into a response that anticipates the user's most likely needs.

How Query Fan-Out Works

The fan-out process begins with the AI system analyzing the image to extract salient features: the objects present, their relationships, any visible text, and contextual clues about the setting in which the image was captured. From that analysis, the system generates multiple candidate queries, each representing a plausible interpretation of what the user might want to know.
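The step from extracted features to candidate queries can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `ImageFeatures` structure, the `INTENT_TEMPLATES` table, and the `generate_candidate_queries` function are hypothetical names, and the article does not describe how Google actually derives queries from an image.

```python
from dataclasses import dataclass, field


@dataclass
class ImageFeatures:
    """Hypothetical output of an image-analysis step."""
    objects: list[str]
    visible_text: list[str] = field(default_factory=list)
    setting: str = ""


# Hypothetical intent templates, keyed by a coarse object category.
# A production system would presumably generate these with a model.
INTENT_TEMPLATES = {
    "plant": [
        "what species is {label}",
        "{label} care instructions",
        "is {label} toxic to pets",
    ],
    "default": ["what is {label}", "where to buy {label}"],
}


def generate_candidate_queries(features: ImageFeatures, category: str) -> list[str]:
    """Expand image features into one query per plausible user intent."""
    templates = INTENT_TEMPLATES.get(category, INTENT_TEMPLATES["default"])
    queries = [t.format(label=label) for label in features.objects for t in templates]
    # Visible text (labels, signs, packaging) becomes an exact-phrase query.
    queries.extend(f'"{text}"' for text in features.visible_text)
    # Remove duplicates while preserving the original order.
    return list(dict.fromkeys(queries))
```

Calling `generate_candidate_queries(ImageFeatures(objects=["monstera"]), "plant")` yields one query per template, which is the "family of related queries" the article describes in miniature.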

For a photograph of a plant, the fan-out might generate parallel queries for species identification, common names, growing conditions, toxicity to pets and children, and where to purchase locally. These queries run simultaneously across Google's search index, with results from each stream evaluated for relevance and synthesized into a coherent response that addresses the most likely user intent while surfacing relevant information the user might not have thought to request explicitly.
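The parallel execution and synthesis described above can be sketched with Python's `asyncio`. This is an illustration under stated assumptions, not Google's implementation: `run_query` is a hypothetical stand-in for a search backend, and its relevance score is a placeholder derived from query length so that the example stays deterministic.

```python
import asyncio


async def run_query(query: str) -> dict:
    """Hypothetical stand-in for one search backend call."""
    await asyncio.sleep(0.01)  # simulate network latency
    # Placeholder relevance score; a real system would score the results.
    return {"query": query, "answer": f"results for {query!r}", "score": len(query) % 5}


async def fan_out(queries: list[str], top_k: int = 3) -> list[dict]:
    """Run all candidate queries concurrently and keep the top_k by score."""
    results = await asyncio.gather(*(run_query(q) for q in queries))
    ranked = sorted(results, key=lambda r: r["score"], reverse=True)
    return ranked[:top_k]


def answer_visual_query(queries: list[str], top_k: int = 3) -> list[dict]:
    """Synchronous entry point that drives the async fan-out."""
    return asyncio.run(fan_out(queries, top_k))
```

Because the queries run concurrently, total wall-clock time is close to that of the slowest single query rather than the sum of all of them. The "synthesis" here is reduced to ranking and truncation; the article describes a language model doing that work.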

Why It Matters for Users

The practical effect of query fan-out is that AI Mode's visual search behaves more like a knowledgeable assistant than a traditional search engine. A conventional image search returns visually similar images. AI Mode with query fan-out returns answers to questions the user might ask about the subject of the image, which is a qualitatively different kind of response.

This distinction becomes most significant when users have limited vocabulary for what they're looking at. Someone trying to identify a mushroom, a skin condition, a car part, or a circuit board component may not know the terminology needed to construct an effective text query. Visual query fan-out sidesteps the vocabulary problem by inferring likely queries from image content, delivering useful information even when the user cannot articulate precisely what they're looking for.

Technical Challenges and Broader Applications

Query fan-out at scale introduces significant infrastructure demands. Running multiple parallel queries for every visual search request multiplies computational cost, requiring careful optimization to keep response latency acceptable. There is also a synthesis challenge: when parallel queries return diverse results, the language model must determine which are most relevant, how to weigh conflicting information, and how to present synthesized responses coherently without overwhelming users.
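One common way to keep latency bounded in a fan-out design is a deadline budget: wait a fixed time for the parallel queries, use whatever has finished, and cancel the rest. The sketch below shows that general pattern with `asyncio.wait`. It is an assumption about how such a constraint could be handled, not a description of Google's optimizations, and `slow_backend` and `fan_out_with_deadline` are hypothetical names.

```python
import asyncio


async def slow_backend(query: str, delay: float) -> str:
    """Hypothetical backend whose latency is given explicitly for the demo."""
    await asyncio.sleep(delay)
    return f"results for {query}"


async def fan_out_with_deadline(jobs: dict[str, float], budget_s: float) -> dict[str, str]:
    """Run every query concurrently, keeping only those done within budget_s."""
    tasks = {asyncio.create_task(slow_backend(q, d)): q for q, d in jobs.items()}
    done, pending = await asyncio.wait(tasks.keys(), timeout=budget_s)
    # Cancel stragglers so they stop consuming resources.
    for task in pending:
        task.cancel()
    # Let the cancellations settle before returning.
    await asyncio.gather(*pending, return_exceptions=True)
    return {tasks[t]: t.result() for t in done}
```

The trade-off is completeness against responsiveness: a tighter budget returns sooner but may drop a query stream whose results would have improved the synthesized answer.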

The fan-out architecture is also being applied to text queries in AI Mode, not just visual searches. The same principle — generating multiple related queries from a single user input and synthesizing the results — underpins AI Mode's ability to answer complex multi-part questions that a single search query could not adequately address. As Google continues to refine the system, query fan-out is likely to become more sophisticated, with the system learning from user behavior which fan-out strategies produce the most satisfying responses for different query types and contexts.

This article is based on reporting by Google AI Blog.