The Data Problem in Robotics

Teaching a robot to manipulate objects in the physical world has historically required enormous amounts of human-collected demonstration data. Google DeepMind's RT-1 system required 130,000 episodes of data collected over 17 months by human operators. The DROID dataset includes 76,000 teleoperated trajectories gathered across 13 research institutions — representing approximately 350 hours of human effort. These numbers reflect not just the scale of the challenge but the economic concentration it produces: only a small number of well-resourced laboratories can afford to collect the data needed to train competitive manipulation systems.

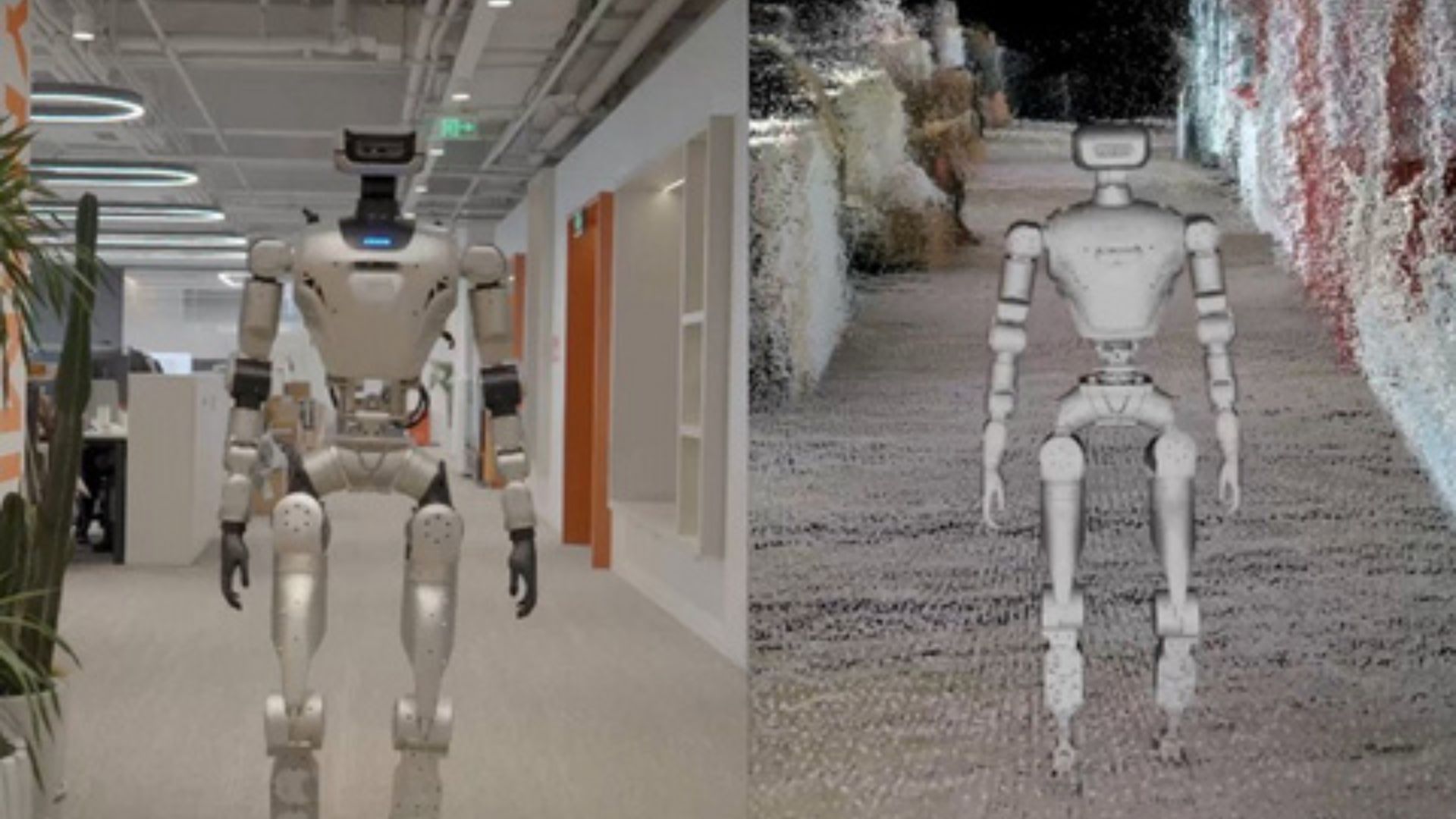

The Allen Institute for AI (Ai2) is proposing a different model with MolmoBot, a robotic manipulation system trained primarily on simulated data rather than physical demonstrations. The research demonstrates that this simulation-trained model can transfer its capabilities to physical robots, a result that could substantially democratize access to capable robotic manipulation AI.

Why Simulation Has Historically Failed to Transfer

The gap between simulation performance and real-world performance — the 'sim-to-real gap' — has been a persistent obstacle. Physical robots encounter a richness of sensory input, environmental variability, and contact dynamics that simulation environments struggle to replicate faithfully. A robot trained entirely in simulation often fails to handle the real-world messiness that its training environment abstracted away.

Previous attempts to bridge this gap have relied on domain randomization — deliberately varying simulation parameters like lighting, object textures, and physics properties to force robots to develop representations that generalize across conditions. This approach has achieved partial success in locomotion but has been less effective for dexterous manipulation tasks requiring fine motor control and precise contact force management.
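
To make the idea of domain randomization concrete, here is a minimal Python sketch of how per-episode simulation parameters might be sampled. It is an illustration only, not MolmoBot's pipeline or any earlier system's code: the `SimParams` fields, the value ranges, and the function names are all hypothetical, and a real setup would pass these values to a physics simulator and renderer.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    """Hypothetical per-episode simulation settings (illustrative fields only)."""
    light_intensity: float  # relative scene brightness
    texture_id: int         # index into an assumed texture library
    friction: float         # surface friction coefficient
    object_mass_kg: float   # mass of the manipulated object


def sample_sim_params(rng: random.Random) -> SimParams:
    """Draw one random configuration; the ranges are assumptions, not measured values."""
    return SimParams(
        light_intensity=rng.uniform(0.3, 1.5),
        texture_id=rng.randrange(100),
        friction=rng.uniform(0.4, 1.2),
        object_mass_kg=rng.uniform(0.05, 1.0),
    )


def randomized_episodes(num_episodes: int, seed: int = 0) -> list[SimParams]:
    """Return one independently sampled configuration per training episode."""
    rng = random.Random(seed)
    return [sample_sim_params(rng) for _ in range(num_episodes)]


if __name__ == "__main__":
    for params in randomized_episodes(3):
        print(params)
```

Because every episode sees different lighting, textures, and physics, a policy trained across them cannot rely on any single simulated appearance, which is the generalization pressure described above. Seeding the generator keeps a given set of episodes reproducible.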

MolmoBot's Approach

MolmoBot builds on Ai2's Molmo vision-language model, which provides the system with a rich understanding of visual scenes and language instructions. The key innovation is how simulation data is generated and curated for manipulation training. Rather than using a single simulation environment, the team developed a pipeline for generating diverse manipulation scenarios with sufficient physical fidelity to train generalizable skills.

The system combines improved simulation fidelity in contact dynamics with a representation learning approach that explicitly builds invariances to the visual differences between simulated and real environments. The robot learns to identify task-relevant visual features — the gripper position, the object being manipulated, the target location — that look similar across simulation and reality, rather than learning representations encoding simulation-specific visual artifacts.

The Democratization Argument

The economic argument for simulation-based training is straightforward. Generating simulation data requires compute infrastructure but not physical robots, not trained human operators, and not the institutional coordination needed to aggregate large demonstration datasets. A research team at a small university with access to a computing cluster can generate millions of simulated manipulation episodes in the time it would take a well-resourced laboratory to collect tens of thousands of physical demonstrations.

If simulation-trained models can match or approach the performance of physically trained systems — which MolmoBot's results suggest is achievable for a meaningful class of manipulation tasks — the capabilities of robotic manipulation AI become accessible to a much broader research community.

Open Release

Consistent with Ai2's research philosophy, the MolmoBot system and its simulation training pipeline are being released openly. The dataset of simulated manipulation trajectories, the trained model weights, and the simulation environment tools are all being made available to the research community. This approach contrasts directly with the proprietary data and model strategies of the commercial robotics AI programs that have led the field. CEO Ali Farhadi stated the goal explicitly: building AI that advances science through tools the global research community can build on together.

This article is based on reporting by AI News.