Claude moves beyond work and school

Anthropic is broadening Claude's role from a productivity-focused assistant to a more personal digital operator. The company has expanded its directory of connected services so that the chatbot can now link to lifestyle and consumer apps such as AllTrails, Audible, Booking.com, Instacart, Intuit Credit Karma, Intuit TurboTax, Resy, Spotify, StubHub, Taskrabbit, Thumbtack, TripAdvisor, Uber, Uber Eats, and Viator.

The move is strategically important because it shifts Claude's integration story away from the workplace and classroom settings that have defined much of the company's connector rollout over the past year. Rather than mainly helping users retrieve information from professional tools, Claude is being positioned to coordinate tasks across everyday consumer services.

Anthropic's pitch is simple: the more systems an assistant can see and interact with, the more useful it becomes. A chatbot that can recommend a hiking trail, estimate how long the trip might take, put together a matching playlist, and then help arrange transportation or food starts to look less like a question-and-answer tool and more like an action layer on top of multiple apps.

The battle over assistant usefulness

Anthropic is not alone in pursuing that outcome. The broader AI industry has spent the past year pushing beyond isolated chat interfaces and toward systems that can call external tools, retrieve account-specific context, and complete multi-step tasks. Third-party integrations are central to that competition because they make assistants harder to compare on model quality alone. An assistant that can act within a user's digital life has far stronger everyday relevance.

Claude's new integrations reflect that shift. They cover travel, food, entertainment, finance, bookings, errands, and local services. That mix matters because it widens the range of practical scenarios in which the assistant can be useful. A user planning a weekend getaway could move from Booking.com and TripAdvisor to Uber and Resy. Someone organizing a day outdoors could use AllTrails, Spotify, and Uber Eats. The value lies less in any single app than in the potential for connected workflows across several of them.

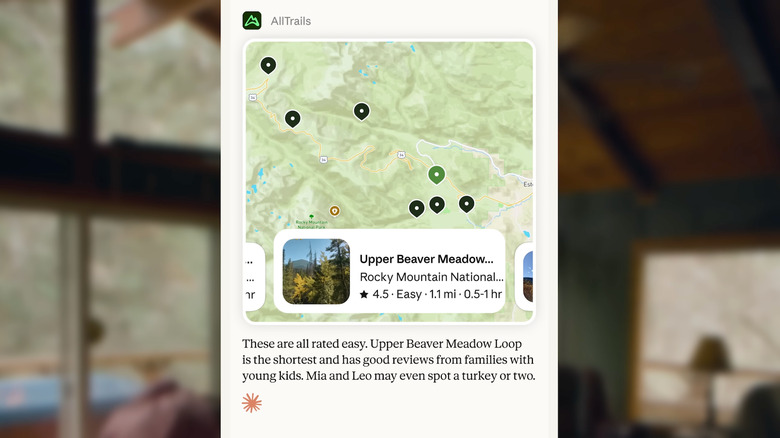

Anthropic offered an example, cited in the original report: Claude could help plan a hike in AllTrails and then pull up a Spotify playlist long enough for the outing. The example is deliberately simple, but it points to the company's larger goal. The assistant is meant to connect services within a single conversation rather than force users to jump manually between separate apps.

A different interface model

A notable part of the announcement is not only which apps are supported, but how they appear. Anthropic says it is rethinking how connected services are presented so that relevant apps are suggested dynamically within the conversation. In other words, Claude should surface the right service for the task at hand, rather than requiring users to browse a static set of integrations or switch between tools themselves.

That interface choice matters. The future of consumer AI may depend less on whether assistants can technically connect to services and more on whether those connections feel intuitive. If users have to micromanage app selection, permissions, and handoffs, the experience can quickly become more cumbersome than opening the app directly. Dynamic suggestions are Anthropic's attempt to reduce that friction and make the assistant seem more context-aware.

At the same time, the company says Claude is expected to check with users before taking actions such as securing a reservation or making a purchase. That approval step is essential because consumer assistants operate much closer to money, identity, and personal preference than enterprise search tools do. An AI that books, orders, or spends without sufficient confirmation would create a trust problem faster than any convenience benefit could offset.

The consumer AI trade-off: convenience versus control

The expansion highlights a central trade-off in the next phase of AI products. Greater usefulness depends on deeper access to accounts, preferences, and transaction paths. But each new connection also raises the stakes around consent, reliability, and error handling. A mistake in a work chat summary is an inconvenience. A mistake in a reservation, a purchase, a tax query, or a ride request can have immediate real-world consequences.

Anthropic's emphasis on user confirmation suggests the company understands that consumer automation cannot simply mimic the speed-first logic of generative chat. It has to be mediated by explicit approval and careful interaction design that makes the assistant's intended action legible before anything happens. That is especially important when the connected apps include financial services, travel bookings, and delivery platforms.

The company's updated set of integrations also shows how AI's center of gravity is moving quickly from raw model performance toward product orchestration. The question is no longer only whether a model can generate a coherent answer. It is whether the assistant can coordinate tools, accounts, and services in a way that is genuinely useful without becoming invasive or unpredictable.

Why this expansion matters

For Anthropic, the consumer push extends Claude's reach at a time when AI companies are competing to define what an assistant really is. If the chatbot remains mostly a text box for drafting and research, it will compete mainly on intelligence benchmarks. If it becomes a system capable of coordinating daily activities across a wide range of apps, it will compete on ecosystem design, trust, and execution.

That is a harder product problem, but also a potentially more defensible one. Users can switch models for writing or brainstorming. They are less likely to switch easily once an assistant is woven into their calendars, bookings, leisure choices, errands, and travel routines. Anthropic's latest rollout is not just an integrations update. It is a bet on embedding Claude more deeply in the ordinary decisions that fill the day.

Whether it works will depend on how well the experience balances initiative with restraint. The appeal of a personal assistant is that it removes friction. The risk is that it adds a new layer of abstraction between users and the apps they already trust. Anthropic is betting that conversational coordination, backed by selective confirmations, can be the bridge between those two realities.

This article is based on reporting by Engadget.

Originally published on engadget.com