Google moves more AI work onto the device itself

Google's latest release, Gemma 4, signals a more ambitious push toward local AI that runs directly on phones and other devices rather than relying on the cloud. According to The Decoder, the new open-source model family can process text, images, and audio entirely on-device, and it can use tools through built-in “agent skills” such as Wikipedia access, interactive maps, and QR code generation.

That combination matters because it changes what AI on a phone means in practice. Many consumer-facing systems present themselves as assistants, yet their core processing still mostly depends on remote servers. Gemma 4 is positioned differently. The appeal is not only speed or convenience; it is the ability to keep data on the device while enabling a broader range of actions at the same time.

The timing fits a broader industry trend. As models become more efficient and phone chips improve, companies are trying to move more intelligence to edge hardware. That can reduce latency, lower server costs, and make privacy claims more convincing. Google is now trying to turn this technical trend into both a developer platform and a consumer-facing distribution channel.

Smaller models target mainstream smartphones

The Decoder says Gemma 4 comes in four variants. Two of them, E2B and E4B, are designed specifically for smartphones. The “E” stands for effective parameters, meaning the parameters active during inference. After quantization, the E2B model takes up about 1.3 GB on the device, while E4B needs about 2.5 GB.

These sizes are notable because they point to a practical deployment strategy rather than a showcase model reserved for premium hardware. The report says E2B and E4B can run on phones with 6 GB and 8 GB of RAM, respectively. If that holds up in everyday use, it would considerably widen the base of targetable devices and make local multimodal AI less dependent on flagship phones.
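To put the reported figures in perspective, the short Python sketch below computes how much of a phone's total RAM the quantized weights alone would occupy. It is a rough illustration, not a measurement: it assumes the whole model is loaded into memory at once and ignores the operating system, other apps, and runtime buffers. The names `MODELS` and `ram_share` are hypothetical.

```python
# Approximate figures as reported in the article (after quantization).
MODELS = {
    "E2B": {"size_gb": 1.3, "phone_ram_gb": 6},
    "E4B": {"size_gb": 2.5, "phone_ram_gb": 8},
}


def ram_share(size_gb: float, ram_gb: float) -> float:
    """Fraction of total RAM taken by the model weights alone.

    Assumption: the weights are fully resident in memory; real runtimes
    may map or stream them differently.
    """
    if ram_gb <= 0:
        raise ValueError("ram_gb must be positive")
    return size_gb / ram_gb


if __name__ == "__main__":
    for name, spec in MODELS.items():
        share = ram_share(spec["size_gb"], spec["phone_ram_gb"])
        print(f"{name}: roughly {share:.0%} of {spec['phone_ram_gb']} GB RAM")
```

Under these assumptions the weights take somewhere between a fifth and a third of total memory, which leaves headroom for the rest of the system but is far from negligible.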

Google also says the phone variants run up to four times faster than the previous generation while cutting battery drain by up to 60 percent. Arm's own benchmarks, as reported by The Decoder, show larger processing gains on newer Arm chips. Real-world results will vary by device, but the message is clear: model architecture and hardware optimization now matter as much as raw size.

The bigger story is agentic capability without the cloud

What separates this release from a mere efficiency update is the focus on tool use. Gemma 4 is not described as just a compact multimodal model. It is pitched as an agentic system that can call specific tools automatically through built-in skills. In practice, that means a locally running model can do more than answer questions from a prompt; it can fetch information, work with maps, or produce useful output without sending the interaction to a remote service.
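The general pattern behind this kind of tool use can be sketched in a few lines of Python. This is not Google's skill format or API, which the article does not describe; every name here (`Skill`, `SkillRegistry`, `choose_skill`) is hypothetical, the tool choice is faked with keyword rules instead of a model, and the handlers are stubs that only format a string.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass
class Skill:
    """A named tool the model may call (hypothetical structure)."""
    name: str
    description: str
    run: Callable[[str], str]


class SkillRegistry:
    """Maps skill names to handlers and dispatches calls to them."""

    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def dispatch(self, name: str, argument: str) -> str:
        skill = self._skills.get(name)
        if skill is None:
            return f"unknown skill: {name}"
        return skill.run(argument)


def choose_skill(prompt: str) -> Tuple[str, str]:
    """Stand-in for the model's tool choice: keyword rules, not a real model."""
    lowered = prompt.lower()
    if "qr" in lowered:
        return "qr_code", prompt
    if "map" in lowered or "route" in lowered:
        return "map", prompt
    return "wikipedia", prompt


def build_registry() -> SkillRegistry:
    registry = SkillRegistry()
    # Stub handlers: they do no real lookup, mapping, or QR rendering.
    registry.register(Skill("wikipedia", "Look up a topic", lambda q: f"[wikipedia lookup] {q}"))
    registry.register(Skill("map", "Show a place on a map", lambda q: f"[map view] {q}"))
    registry.register(Skill("qr_code", "Encode text as a QR code", lambda q: f"[qr code] {q}"))
    return registry


def handle(prompt: str, registry: SkillRegistry) -> str:
    """Pick a skill for the prompt, then run it through the registry."""
    name, argument = choose_skill(prompt)
    return registry.dispatch(name, argument)


if __name__ == "__main__":
    print(handle("Make a QR code for example.org", build_registry()))
```

Keeping the registry separate from the decision step is what would let new skills be added without touching the model-facing logic.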

This architecture has strategic implications. On-device agents promise a different balance between functionality and privacy. If the model, the user's inputs, and tool orchestration all stay on hardware the user controls, companies can offer a more private AI experience while still supporting richer workflows.

It also opens the door to customization. The Decoder reports that developers can create custom skills and share them via GitHub. That suggests Google is not just shipping a model family but also trying to build an ecosystem around local, portable AI behaviors.

Google pairs an open release with broad distribution

Gemma 4 ships under the Apache 2.0 license, which The Decoder describes as commercially friendly. That matters because licensing can determine whether a model family becomes a serious development base or remains largely a research curiosity. A permissive license lowers the barriers to experimentation, adaptation, and commercial deployment.

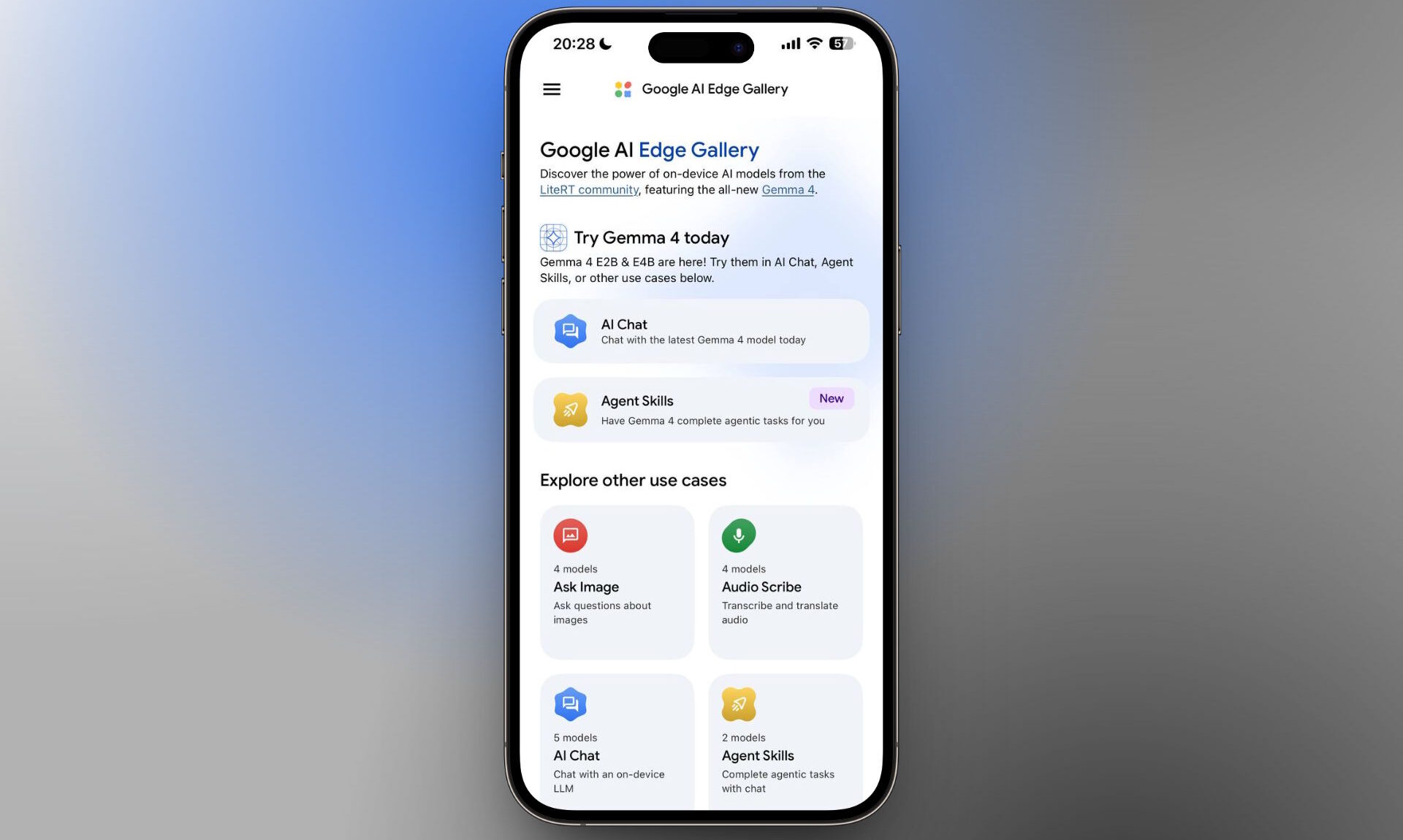

Google is also distributing the experience through the free Google AI Edge Gallery app on Android and iOS. The Decoder says that since the Gemma 4 launch, the app has climbed to fourth place among the most-downloaded free productivity apps in Apple's App Store on iOS, behind Claude, Gemini, and ChatGPT. Even if rankings fluctuate, the signal points to tangible early user curiosity about local AI experiences.

The report adds that Gemma 4 is built on the same research base as Google's proprietary Gemini 3 model, and that the smartphone variants will form the foundation for Gemini Nano 4 on Android. The link matters because it means Google treats its open and proprietary model lines as parts of the same larger stack, with Gemma serving as both a developer platform and a proving ground for mobile deployment.

Why this release matters in the AI platform race

The AI market is increasingly splitting into several overlapping contests: frontier cloud models, enterprise deployment, developer ecosystems, and now device-native intelligence. Gemma 4 gives Google a stronger position in the last two. By combining open weights, mobile optimization, tool use, and a consumer app, the company is trying to make local AI more visible to both developers and end users.

The move also reflects a competitive necessity. If AI is to become a default layer across phones and other personal devices, the companies that control efficient local models and the developer experience around them will gain a significant advantage. Cloud access will remain essential for larger workloads, but not every interaction needs a data-center-scale response.

Gemma 4 therefore points to a more hybrid future. Some AI tasks will stay remote because they require larger models or more compute. Others will increasingly run where the user already is: on the phone, inside the operating system, and close to sensitive personal data.
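One way to picture that hybrid split is a small routing policy that decides, per task, whether to stay on the device or go to the cloud. The Python sketch below is purely illustrative: the `Task` fields, the 4096-token limit, and the three outcomes are assumptions made for this example, not anything Google or The Decoder describes.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Minimal description of a request (hypothetical fields)."""
    prompt_tokens: int
    needs_large_model: bool
    has_sensitive_data: bool


def route(task: Task, local_token_limit: int = 4096) -> str:
    """Return "device", "cloud", or "ask_user" for a task.

    Assumed policy: run locally whenever the task fits; otherwise send it
    to the cloud, unless it carries sensitive data, in which case the user
    is asked first. The default token limit is an arbitrary placeholder.
    """
    fits_locally = (
        not task.needs_large_model and task.prompt_tokens <= local_token_limit
    )
    if fits_locally:
        return "device"
    if task.has_sensitive_data:
        return "ask_user"
    return "cloud"


if __name__ == "__main__":
    print(route(Task(prompt_tokens=300, needs_large_model=False, has_sensitive_data=True)))
```

The point of the sketch is only that the decision can be made per interaction, so sensitive, small tasks never need to leave the phone.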

For Google, this release is an attempt to shape that future early. For developers, it offers a more practical local foundation. For users, it suggests that “AI on your phone” may soon mean something more literal than a branded shortcut to the cloud.

This article is based on reporting by The Decoder.

Originally published on the-decoder.com