The Incident

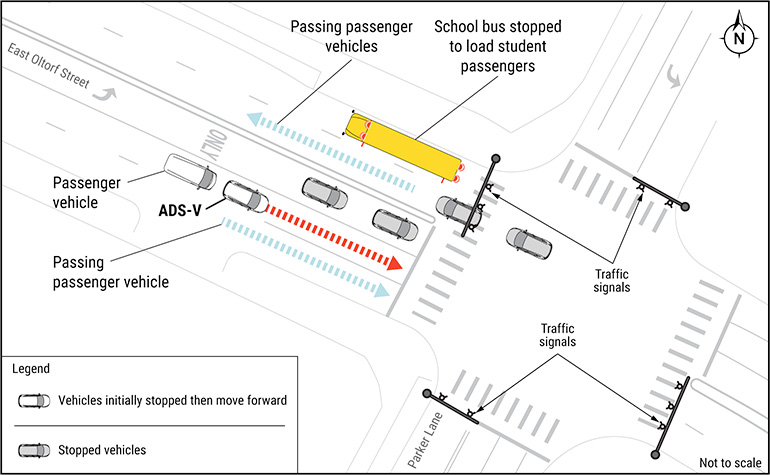

A Waymo robotaxi illegally passed a stopped school bus in Austin, Texas, an incident that has prompted a federal investigation and reignited debate about the safety protocols governing autonomous vehicles operating near schools and children. The self-driving vehicle failed to stop for the bus's extended stop sign and flashing red lights, which is illegal in every US state and carries serious safety implications.

According to Waymo and investigators, the failure was not caused by a malfunction in the vehicle's autonomous driving system but rather by an error made by a remote human operator. Waymo's vehicles are monitored by remote operators who can intervene in the car's decision-making when the autonomous system encounters situations it cannot resolve on its own. In this case, the remote operator made an incorrect judgment that allowed the vehicle to proceed past the stopped bus.

Remote Operator Error Under Scrutiny

The revelation that human error, rather than a software bug, caused the violation raises important questions about the role of remote operators in autonomous vehicle systems. These operators serve as a safety net for situations where AI decision-making falls short, but the Austin incident demonstrates that human intervention can also introduce new failure modes into the system.

Remote operators typically monitor multiple vehicles simultaneously, making split-second decisions based on video feeds and sensor data transmitted from the vehicles. The cognitive demands of this work are significant, and the potential for errors grows as the number of vehicles under supervision increases. Critics have long warned that remote operation at scale could prove challenging, particularly in complex urban environments where the stakes of a wrong decision are high.

Waymo has acknowledged the incident and stated that it has implemented changes to prevent similar errors in the future. The company says it has updated its remote operator protocols and added specific safeguards related to school bus scenarios. However, the details of these changes have not been made fully public.

Federal Investigation Launched

The National Highway Traffic Safety Administration has opened an investigation into the incident, examining both the specific circumstances of the Austin violation and the broader question of how autonomous vehicle companies manage remote operator oversight. The probe could result in new regulatory requirements for how remote operators are trained, supervised, and deployed.

NHTSA has become increasingly active in regulating the autonomous vehicle industry, opening multiple investigations into companies' self-driving systems over the past several years. The agency has the authority to issue recalls, mandate safety improvements, and establish new federal motor vehicle safety standards if it determines that existing practices are inadequate.

The Austin incident is particularly sensitive because it involves the safety of children. School bus stop laws exist specifically to protect students who are boarding or exiting buses and may need to cross the street. Violations of these laws by human drivers already result in thousands of dangerous incidents annually, and autonomous vehicles are expected to perform better than human drivers in these scenarios.

Waymo's Safety Record

Waymo has emphasized that its overall safety record in Austin and other operating cities remains strong: its autonomous vehicles have completed millions of miles of driverless operation with a lower rate of reportable incidents than human drivers. The company points to peer-reviewed research showing that its vehicles are involved in fewer crashes per mile than the average human-driven vehicle.

However, critics argue that aggregate safety statistics do not address the specific risks posed by failure modes unique to autonomous systems, such as remote operator errors or sensor limitations in unusual conditions. A single high-profile incident involving a child's safety can damage public trust in autonomous vehicles more than favorable statistical comparisons can restore it.

Implications for the Industry

The incident comes at a sensitive time for the autonomous vehicle industry, which has been expanding rapidly into new cities while seeking to maintain public and regulatory confidence. Waymo currently operates commercial robotaxi services in multiple US cities and has been aggressively pursuing expansion into additional markets.

Other autonomous vehicle companies are likely watching the investigation closely, as its outcomes could set precedents that affect the entire industry. The question of how to properly staff, train, and oversee remote operators is one that every company deploying autonomous vehicles at scale must answer, and the Austin incident has brought that challenge into sharp focus for regulators and the public alike.

This article is based on reporting by The Robot Report.

Originally published on therobotreport.com