The Number Format Revolution

Artificial intelligence has driven an unprecedented explosion in the design of new number formats — the ways in which numbers are digitally represented in computer hardware. The push to train and run ever-larger neural networks at lower cost has led engineers to explore every possible way to reduce the number of bits used to represent data, shaving computation time and energy consumption in the process. Formats like Google's bfloat16, NVIDIA's TensorFloat-32, and various 8-bit and even 4-bit representations have become standard tools in the AI engineer's arsenal.
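
To make the bit-shaving concrete: bfloat16 keeps float32's sign bit and 8-bit exponent but only 7 stored fraction bits, so a value can be converted by rounding away the low 16 bits of its float32 encoding. The sketch below does this in pure Python with the standard `struct` module. The helper names `to_bfloat16` and `to_float16` are hypothetical. The conversion assumes finite inputs within float32 range and ignores NaN payloads. Because it rounds through float32 first, it can differ from direct rounding in rare double-rounding cases.

```python
import struct


def to_float16(x: float) -> float:
    """Round x to the nearest IEEE 754 binary16 value (struct's 'e' format)."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_bfloat16(x: float) -> float:
    """Round x to bfloat16 (sign, 8 exponent bits, 7 fraction bits).

    Assumes x is finite and within float32 range; NaN payloads are not handled.
    """
    # Reinterpret the float32 encoding of x as an unsigned 32-bit integer.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Round to nearest, ties to even, based on the 16 bits about to be dropped.
    bits += 0x7FFF + ((bits >> 16) & 1)
    # Keep the top 16 bits and zero the rest: the result is a float32
    # that is exactly representable in bfloat16.
    bits = ((bits >> 16) << 16) & 0xFFFFFFFF
    return struct.unpack("<f", struct.pack("<I", bits))[0]


x = 1 / 3
print(f"float64:  {x!r}")
print(f"float16:  {to_float16(x)!r}")   # 0.333251953125 (10 fraction bits)
print(f"bfloat16: {to_bfloat16(x)!r}")  # 0.333984375 (7 fraction bits)
```

float16 keeps more fraction bits, but its 5-bit exponent caps it at magnitudes below 65,504. bfloat16 gives up precision in exchange for float32's range.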

These reduced-precision formats work for AI because neural networks are remarkably tolerant of numerical imprecision. A slight rounding error in one neuron's activation value is absorbed by the statistical averaging that occurs across millions of parameters. Training might take marginally more iterations to converge, but the speed gained from processing smaller numbers vastly outweighs the cost of slightly noisier computations.
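
The following is a toy illustration of the averaging argument, assuming unbiased round-to-nearest errors. It rounds many random values to float16, then compares the typical error on a single value with the error left in their mean. This is not a model of a neural network. The function name `rounding_vs_averaging`, the sample size, and the input range [1, 2) are arbitrary choices for the sketch, and the sums are accumulated in float64.

```python
import random
import struct


def to_float16(x: float) -> float:
    """Round x to the nearest IEEE 754 binary16 value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def rounding_vs_averaging(n: int = 10_000, seed: int = 0) -> tuple[float, float]:
    rng = random.Random(seed)
    exact = [rng.uniform(1.0, 2.0) for _ in range(n)]
    rounded = [to_float16(v) for v in exact]
    # Typical error introduced into any single value by rounding.
    per_value = sum(abs(r - e) for r, e in zip(rounded, exact)) / n
    # Error left in the mean: positive and negative roundings largely cancel.
    in_mean = abs(sum(rounded) / n - sum(exact) / n)
    return per_value, in_mean


per_value, in_mean = rounding_vs_averaging()
print(f"mean |error| per value: {per_value:.2e}")
print(f"|error| of the mean:    {in_mean:.2e}")
```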

The success of these AI-optimized formats has created a natural temptation: if reduced precision works for neural networks, why not apply the same approach to scientific computing? The answer, as Laslo Hunhold explains in a detailed IEEE Spectrum interview, is that the math does not transfer.

Why Scientific Computing Is Different

Scientific computing encompasses computational physics, fluid dynamics, structural engineering simulations, climate modeling, molecular dynamics, and dozens of other fields where computers solve systems of equations that describe physical phenomena. These simulations differ from neural network computations in a fundamental way: each number must be accurate in its own right, not merely correct on average across many parameters.

When a physicist simulates the turbulent flow of air over a wing, each computational cell must accurately represent pressure, velocity, and temperature values that interact with neighboring cells through well-defined physical laws. A small numerical error in one cell does not average out. It is passed to neighboring cells at every time step. If the numerical method amplifies the error instead of damping it, a phenomenon called numerical instability, the error can grow exponentially. What starts as an imperceptible rounding error can cascade into a simulation that produces physically meaningless results.
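
The mechanism can be seen in a toy version of this neighbor-to-neighbor coupling. The sketch below is an illustration chosen for this article, not something from the interview. It advances the 1D heat equation u_t = u_xx with the textbook explicit (FTCS) scheme and seeds one cell with a 1e-10 perturbation. When the step ratio r = dt/dx^2 is at or below 1/2, the perturbation decays. Above 1/2, the scheme is unstable: the perturbation's highest-frequency component is multiplied by roughly |1 - 4r| every step, so it grows exponentially. The function name `max_after` and all parameter values are illustrative.

```python
import math


def max_after(r: float, steps: int = 300, n: int = 50) -> float:
    """Run explicit FTCS steps for u_t = u_xx and return max |u| at the end.

    r is dt / dx**2. Both boundaries are held at zero (Dirichlet).
    """
    # Smooth initial profile sin(pi * x) on n interior points...
    u = [math.sin(math.pi * (i + 1) / (n + 1)) for i in range(n)]
    # ...plus a tiny perturbation in one cell, standing in for a rounding error.
    u[n // 2] += 1e-10
    for _ in range(steps):
        padded = [0.0] + u + [0.0]
        # Each cell is updated from itself and its two neighbors.
        u = [
            padded[i] + r * (padded[i - 1] - 2.0 * padded[i] + padded[i + 1])
            for i in range(1, n + 1)
        ]
    return max(abs(v) for v in u)


print(f"r = 0.4 (stable):   max |u| = {max_after(0.4):.3g}")
print(f"r = 0.6 (unstable): max |u| = {max_after(0.6):.3g}")
```

With r = 0.4, each update is a weighted average with nonnegative weights, so the solution can never exceed its initial maximum. With r = 0.6, the seeded perturbation alone swamps the physical solution within a few hundred steps.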

This sensitivity to precision is not a failure of the simulation software. It reflects the mathematical nature of the partial differential equations being solved. Some of the systems these equations describe, such as turbulent flows and the atmosphere, are chaotic: small perturbations in initial conditions or intermediate calculations lead to dramatically different outcomes. The entire discipline of numerical analysis exists to understand and control these errors, and decades of research have established minimum precision requirements that simulations must meet to produce trustworthy results.
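
This kind of sensitivity is easy to reproduce with the logistic map x_{n+1} = 4 x_n (1 - x_n), a standard one-line chaotic system used here as a stand-in for a chaotic simulation. The sketch iterates the map twice from the same starting point: once in Python's native float64, and once rounding every intermediate result to float32 via `struct`. The helper names and the starting value 0.1 are illustrative assumptions.

```python
import struct


def to_float32(x: float) -> float:
    """Round x to the nearest IEEE 754 binary32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def logistic_orbits(x0: float, steps: int, r: float = 4.0) -> list[tuple[float, float]]:
    """Iterate x -> r*x*(1-x) in float64 and, in parallel, rounded to float32."""
    hi, lo = x0, to_float32(x0)
    orbit = []
    for _ in range(steps):
        hi = r * hi * (1.0 - hi)
        # Compute in float64, then round the stored state to float32.
        lo = to_float32(r * lo * (1.0 - lo))
        orbit.append((hi, lo))
    return orbit


orbit = logistic_orbits(0.1, 60)
for step in (5, 20, 40, 60):
    hi, lo = orbit[step - 1]
    print(f"step {step:2d}: float64={hi:.6f}  float32={lo:.6f}  gap={abs(hi - lo):.1e}")
```

The two trajectories agree to several digits for the first few steps. Because the map roughly doubles any discrepancy at each step, the float32 rounding error reaches order 1 within a few dozen steps, after which the two runs bear no resemblance to each other.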

The Bespoke Format Challenge

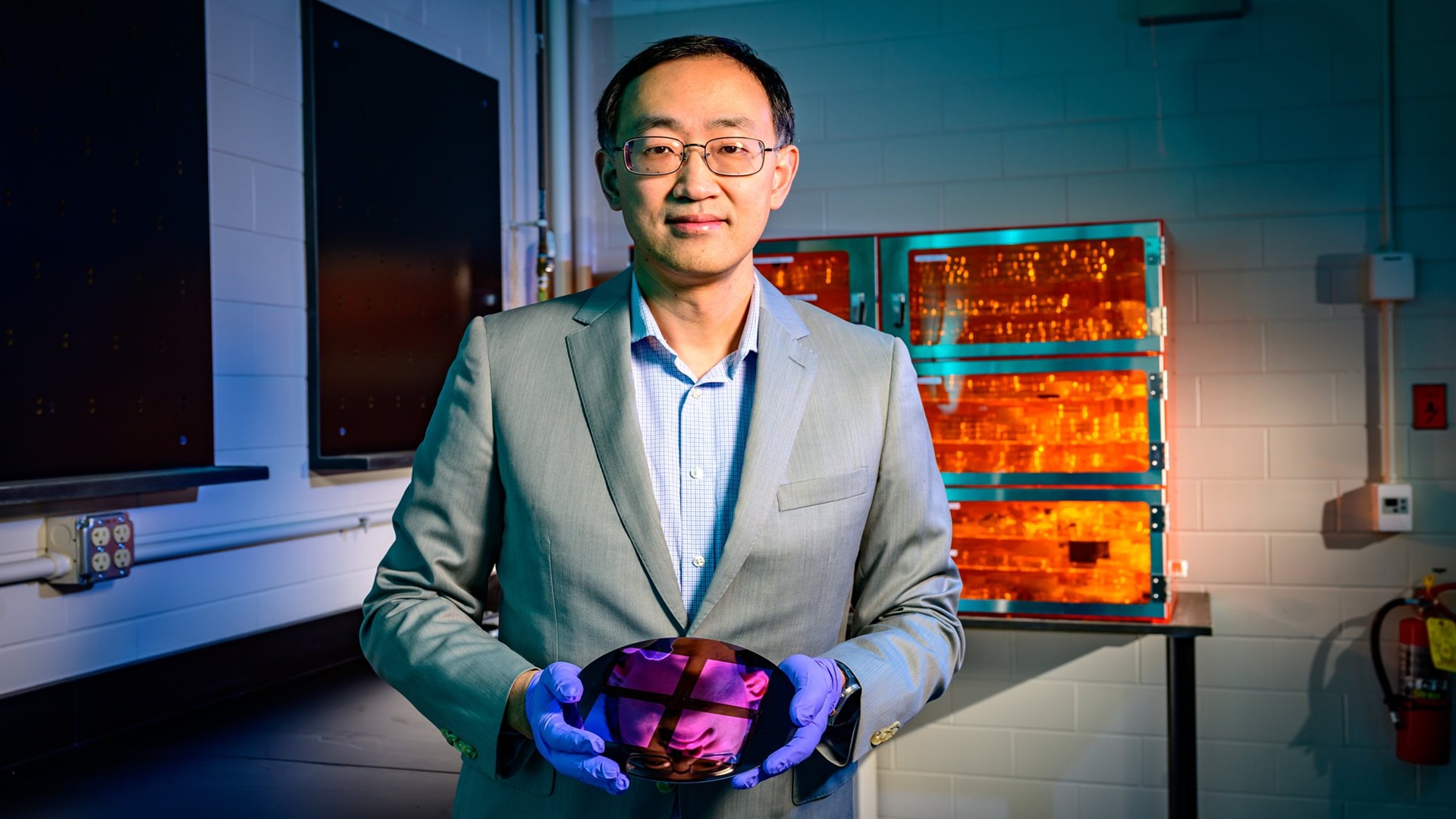

Hunhold, who recently joined Barcelona-based Openchip as an AI engineer, has been working to develop number formats specifically designed for scientific computing — not borrowed from AI. His approach recognizes that the precision requirements of scientific simulations are qualitatively different from those of neural networks, and that simply applying AI formats to scientific problems is not a viable shortcut.

The challenge is multifaceted. Scientific computing requires higher precision in certain parts of the number range and can tolerate lower precision in others. The distribution of values in a physics simulation looks nothing like the distribution of activations in a neural network. A format optimized for one application may be actively harmful for the other.
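
The following is a small illustration of why the range of values matters. Physical quantities expressed in SI units can differ by dozens of orders of magnitude within a single problem. The sketch rounds two such quantities, standard atmospheric pressure (101,325 Pa) and the Boltzmann constant (1.380649e-23 J/K), into float16 and bfloat16. The helper names are hypothetical, and float16 overflow is reported as infinity rather than raised as an error.

```python
import math
import struct


def to_float16(x: float) -> float:
    """Round x to binary16; values beyond its range become +/-inf."""
    try:
        return struct.unpack("<e", struct.pack("<e", x))[0]
    except OverflowError:  # struct refuses to pack out-of-range 'e' values
        return math.copysign(math.inf, x)


def to_bfloat16(x: float) -> float:
    """Round x (via float32) to bfloat16, ties to even; finite inputs only."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    bits += 0x7FFF + ((bits >> 16) & 1)
    bits = ((bits >> 16) << 16) & 0xFFFFFFFF
    return struct.unpack("<f", struct.pack("<I", bits))[0]


quantities = {
    "atmospheric pressure [Pa]": 101325.0,
    "Boltzmann constant [J/K]": 1.380649e-23,
}
for name, value in quantities.items():
    print(f"{name:28s} exact={value:.6e}  "
          f"float16={to_float16(value):.6e}  bfloat16={to_bfloat16(value):.6e}")
```

float16 overflows on the pressure and flushes the Boltzmann constant to zero. bfloat16 keeps both in range, but it stores the pressure as 101,376 Pa, an error of about 51 Pa.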

- AI number formats reduce precision to speed computation, relying on neural networks' tolerance for rounding errors

- Scientific simulations require numerical accuracy — small errors can cascade catastrophically

- Reduced-precision AI formats can produce physically meaningless results in engineering simulations

- Researchers are developing bespoke number formats designed specifically for scientific computing

- The value distributions in physics simulations differ fundamentally from neural network activations

The Hardware Dimension

The issue extends beyond software into hardware design. Modern AI accelerators — GPUs and custom chips from NVIDIA, Google, AMD, and startups — are increasingly optimized for the specific number formats used in machine learning. Their arithmetic units are designed to process bfloat16, FP8, and other AI-native formats at maximum throughput, while traditional double-precision floating-point performance has stagnated or even declined in relative terms.
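
The range and precision of the formats named here follow directly from how many bits each spends on the exponent and on the fraction. For an IEEE 754-style layout with e exponent bits and f fraction bits, the sketch below computes three quantities: the largest finite value (2 - 2^-f) * 2^(2^(e-1) - 1), the smallest normal value 2^(2 - 2^(e-1)), and the machine epsilon 2^-f. TensorFloat-32 is listed with its 8-bit exponent and 10-bit fraction. For FP8, only the E5M2 variant is included, because the common E4M3 variant reassigns some encodings and does not follow this formula. The `Layout` class is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layout:
    name: str
    exponent_bits: int
    fraction_bits: int

    @property
    def bias(self) -> int:
        return 2 ** (self.exponent_bits - 1) - 1

    @property
    def max_finite(self) -> float:
        # The all-ones exponent field is reserved for inf/NaN in IEEE-style layouts.
        return (2.0 - 2.0 ** -self.fraction_bits) * 2.0 ** self.bias

    @property
    def min_normal(self) -> float:
        return 2.0 ** (1 - self.bias)

    @property
    def epsilon(self) -> float:
        # Gap between 1.0 and the next representable value.
        return 2.0 ** -self.fraction_bits


LAYOUTS = [
    Layout("FP8 E5M2", 5, 2),
    Layout("float16", 5, 10),
    Layout("bfloat16", 8, 7),
    Layout("TF32", 8, 10),
    Layout("float32", 8, 23),
    Layout("float64", 11, 52),
]

for fmt in LAYOUTS:
    total = 1 + fmt.exponent_bits + fmt.fraction_bits  # sign + exponent + fraction
    print(f"{fmt.name:9s} bits={total:2d} max={fmt.max_finite:.3e} "
          f"min_normal={fmt.min_normal:.3e} eps={fmt.epsilon:.1e}")
```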

This hardware trend creates a practical problem for scientific computing. If chip manufacturers continue to prioritize AI-specific formats, scientists and engineers may find that the latest and most powerful computing hardware is poorly suited to their workloads. A chip that can perform trillions of low-precision AI operations per second may struggle with the double-precision arithmetic that a climate model or structural analysis requires.

Hunhold's work on bespoke scientific formats is partly motivated by this hardware reality. If scientific computing can identify number formats that achieve acceptable accuracy with fewer bits than traditional double-precision, those formats could be implemented in future hardware alongside AI formats, ensuring that scientific workloads benefit from the same manufacturing advances that are driving AI chip performance.
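
One way to frame this question is to ask how many significand bits a given set of values actually needs to meet an accuracy target. The sketch below is a hypothetical helper, not Hunhold's method. `round_to_bits` rounds a nonzero float to p significant bits (ties to even, ignoring exponent-range limits), and `min_bits_for` searches for the smallest p whose worst-case relative error stays within a tolerance.

```python
import math


def round_to_bits(x: float, p: int) -> float:
    """Round x to p significant bits (ties to even); exponent range is unbounded."""
    mantissa, exponent = math.frexp(x)  # x == mantissa * 2**exponent, 0.5 <= |mantissa| < 1
    # Scale so the p kept bits form an integer, then round; round() ties to even.
    return math.ldexp(round(mantissa * 2**p), exponent - p)


def min_bits_for(values: list[float], rel_tol: float) -> int:
    """Smallest significand width meeting rel_tol for every (nonzero) value."""
    for p in range(1, 54):  # float64 itself carries 53 significant bits
        worst = max(abs(round_to_bits(v, p) - v) / abs(v) for v in values)
        if worst <= rel_tol:
            return p
    raise ValueError("tolerance not reachable within float64 precision")


print(round_to_bits(1 / 3, 8))
print(min_bits_for([1 / 3, 101325.0, 1.380649e-23], 1e-3))
```

Rounding to p significant bits has a relative error of at most 2^-p, so p = 10 always meets a 1e-3 tolerance. The search returns a smaller p only when the particular values happen to round more favorably. A real format would also need enough exponent bits to cover the values' range, which this helper deliberately ignores.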

The Stakes of Getting It Wrong

The consequences of applying inadequate numerical precision to scientific computing are not abstract. Engineering simulations inform the design of aircraft structures, nuclear reactor containment systems, bridges, and pharmaceutical compounds. A simulation that returns a plausible-looking but numerically incorrect result could lead to designs that fail catastrophically in the real world.

The AI industry's remarkable success with reduced-precision computing is a genuine achievement, but it comes with a domain-specific caveat that the scientific computing community is keen to emphasize: what works for pattern recognition does not automatically work for physics. The numbers have to be right, and right means something very different when lives depend on the simulation's accuracy.

This article is based on reporting by IEEE Spectrum.

Originally published on spectrum.ieee.org