Android is becoming a more active AI platform

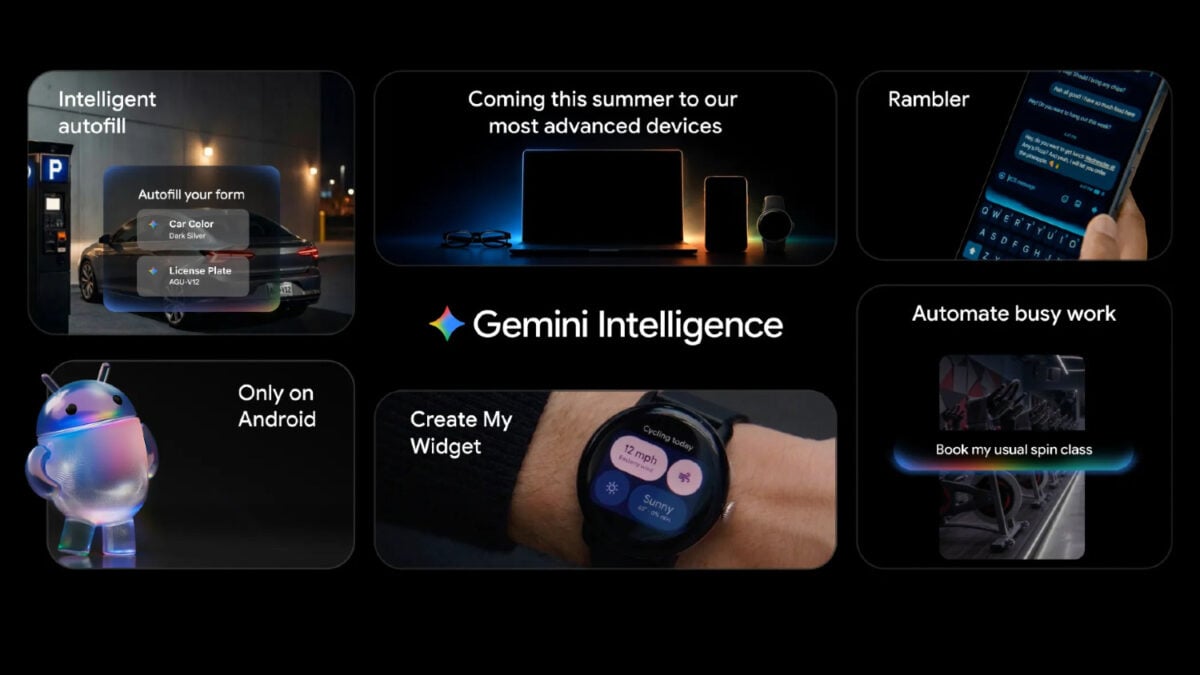

Google is preparing a wider Android push for Gemini, moving beyond a standalone chatbot and deeper into the operating system itself. Ahead of its Google I/O developer conference, the company announced Gemini Intelligence, a suite of AI-powered features designed to bring Gemini more directly into day-to-day mobile use.

Google’s framing is explicit: the goal is not just to answer prompts, but to help users get things done proactively across apps and services. The company says Gemini Intelligence will bring what it calls the best of Gemini to its most advanced devices through a mix of premium hardware and software.

That language matters because it points to a familiar trend in consumer AI. The competition is shifting from who has a chatbot to who can embed AI into routine actions. Android, in Google’s telling, is becoming a staging ground for that shift.

From assistant to operator

The most consequential feature set is task automation. Google described Gemini Intelligence as capable of handling activities that span multiple apps, a step toward the agentic model many large platform companies have been signaling for months.

The examples Google chose are practical rather than flashy. The system could help users snag a front-row bike for a spin class, or find a class syllabus in Gmail and then add the required books to a shopping cart. It can also take an image of a grocery list and populate an Instacart cart with the corresponding items.

Those examples suggest Google is betting that convenience, not conversation, will be the most persuasive consumer argument for AI on phones. If the feature works reliably, it reduces the number of taps and app switches needed to move from intention to action. If it works inconsistently, it risks turning ordinary errands into opaque automation failures.

The strategic point is clear either way. Google wants Gemini to act within software, not just talk about it.

Rambler and the next phase of voice input

Another highlighted feature is Rambler, a speech-to-text tool designed to account for natural speech patterns, including filler words and repetition. Rather than requiring users to dictate cleanly, Google says Rambler can identify the important parts of what was said and shape them into a concise message.

That may sound incremental, but it addresses a real weakness in voice interfaces. Many people do not speak in neat, punctuation-ready sentences. They revise themselves in real time, repeat phrases, and wander before landing on the point. A system that can cleanly distill that kind of speech could make voice input more useful in messaging and note-taking contexts.

Google also says Rambler can switch between different languages within the same message. That feature reflects how multilingual communication often works in practice, especially in regions where code-switching is ordinary rather than exceptional.

The company added a privacy signal as well, saying Rambler clearly indicates when it is enabled and that audio is used only for real-time transcription and is not stored or saved.

Personal data, opt-in controls, and browser reach

Google is also extending AI-powered autofill through Gemini Intelligence. The system can fill forms and text fields on a user’s behalf by drawing on Personal Intelligence, an opt-in layer that gives Gemini access to information such as YouTube history and Google search records.

This is likely to become one of the most contested parts of the rollout. On one hand, autofill becomes more useful when it knows context about the user. On the other hand, the same contextual depth raises predictable concerns about how much a personal assistant should know and how comfortably users will hand over that data. Google’s answer is to emphasize that the feature is strictly opt-in.

Gemini is also heading deeper into Chrome for Android. Google says the assistant will be able to summarize and compare content across the web, similar to desktop experiences, and will gain access to an auto browse capability that can automate browser-based tasks such as booking an appointment.

That extends the same operating logic from apps into the web: AI is being positioned as an action layer between users and interfaces that were originally built for manual navigation.

A staged rollout with broader implications

Google says the new features will arrive in waves, starting with the latest Samsung Galaxy and Google Pixel phones. It also says some Chrome-related capabilities are expected in June.

The staged rollout underscores an important reality in the current AI market. Even when companies describe software advances in universal terms, those advances often launch first as premium-device features. That can help control performance expectations and hardware demands, but it also means the next phase of smartphone AI may initially be available to a narrower slice of users.

Still, the announcement marks a clear directional change. Gemini is no longer being presented primarily as an optional assistant sitting beside Android. It is being woven into core mobile behaviors: typing, browsing, shopping, scheduling, and app-to-app coordination.

Whether users embrace that shift depends on two unresolved questions. The first is reliability. Agentic claims are compelling only when the system can complete tasks accurately and predictably. The second is trust. The more capable these systems become, the more they depend on access to personal context, and the more users must decide whether the trade is worth it.

Google has chosen to push forward on both fronts at once. That makes Gemini Intelligence one of the more important Android changes to watch, not because it proves mobile AI is finished, but because it shows how aggressively platform companies now want AI to disappear into the operating system and start acting on behalf of the user.

This article is based on reporting by Gizmodo, originally published on gizmodo.com.