A Line That Has Long Been Held

Among the many debates about where artificial intelligence should and should not be deployed, few carry higher stakes than the one over nuclear weapons. For decades, the question of who has authority over nuclear launch decisions has been governed by strict protocols designed to ensure human judgment remains central to the most consequential choices in human history. Now, a faction within defense and technology circles is arguing that AI should play a more direct role in nuclear command and control.

The argument for AI integration in nuclear systems typically takes several forms. Proponents claim that AI could help with threat detection, reducing the risk of false alarms caused by sensor errors or adversary deception. They argue that in a compressed decision timeline — modern hypersonic missiles can reach targets in minutes — AI might be necessary to process information faster than human operators. Some go further, suggesting AI could help manage escalation dynamics more rationally than human decision-makers under extreme stress.

The Case Against

Critics are numerous and include some of the most respected voices in arms control, AI safety, and military ethics. Their objections fall into several categories.

First, reliability. AI systems make errors in ways that are often unpredictable and difficult to detect in advance. A false positive, in which an AI system incorrectly identifies an incoming attack, could be catastrophic in a nuclear context. In most domains AI errors are recoverable; a nuclear launch based on faulty AI reasoning is not.

Second, adversarial vulnerability. AI systems can be deceived or exploited through adversarial inputs in ways that human operators are less susceptible to. An adversary that understood the architecture of a nuclear-adjacent AI system could potentially craft situations designed to trigger automated responses, bypassing human judgment at the critical moment.

Third, accountability. Nuclear weapons exist within a framework of legal and political accountability. When a human commander makes a launch decision, there is a chain of responsibility. An AI system that participates in that decision creates profound ambiguities that existing international law and arms control treaties are not equipped to handle.

The Current State of AI in Military Systems

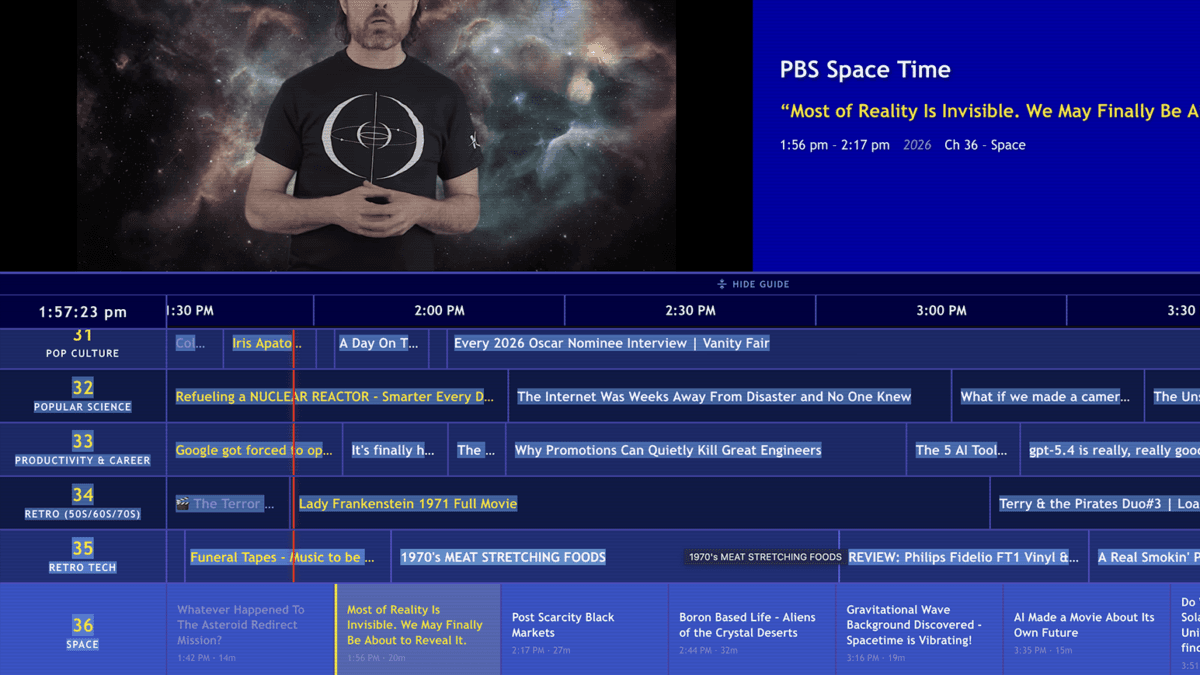

The United States' Project Maven uses computer vision AI to analyze drone footage and identify targets, a capability that has been in operational use for years. More recently, generative AI, including OpenAI's models and Anthropic's Claude, has been integrated into classified military settings. But there is, at least officially, a firm line between AI as an analytical assistant and AI as a decision-maker in lethal systems.

The question of where that line sits, how it is enforced, and whether it is being eroded in practice is one of the most consequential technology policy questions of the current decade.

International Dimensions

The United States is not the only country navigating these questions. Russia, China, and other nuclear-armed states are all investing in AI for military applications. There are legitimate concerns about a race dynamic: if one state believes another is moving toward AI-assisted nuclear decision-making, the pressure to do the same increases regardless of the risks involved. Efforts to establish international norms around AI in nuclear systems have made limited progress, with the Cold War arms control architecture largely eroded and no new frameworks to replace it.

This article is based on reporting by Gizmodo.