A network view of language is gaining ground

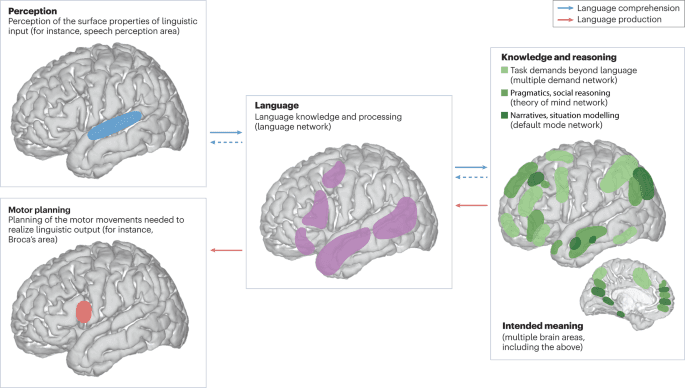

New research from UTHealth Houston argues that language processing is not the work of one isolated brain region. Instead, understanding language appears to depend on rapid cross-talk among multiple regions that exchange information in real time. The finding challenges a longstanding simplified picture in which one dominant area handled the core work of comprehension.

That does not mean classic language regions suddenly stop mattering. It means the brain may be better understood as a distributed system in which several areas help make sense of words, phrases, and meaning. According to the report, the study found that these regions engage in fast-moving conversations while language is being processed.

Why this matters

For decades, popular explanations of language in the brain often centered on a small number of named regions and treated them as primary command centers. That framework helped organize teaching and clinical thinking, but it also risked oversimplifying how dynamic cognition really is. The new work points in another direction: language may emerge from coordinated activity rather than from a single anatomical hub acting alone.

This distinction matters because language is one of the brain's most complex functions. It requires recognizing sounds or symbols, parsing structure, retrieving meaning, and integrating context, often in fractions of a second. A network model fits that level of complexity more naturally, because it lets different regions handle different pieces of the task both in parallel and in sequence.

From localization to interaction

The broader significance of the research is that it shifts attention from where language lives to how brain regions interact. A localization model asks which part is responsible. An interaction model asks how information moves, how quickly regions coordinate, and how understanding emerges from those exchanges.

The report specifically describes language understanding as involving "fast-moving conversations" across regions. That phrasing captures the core implication of the study. The brain may not process language in a simple one-way chain. Instead, interpretation may depend on rapid back-and-forth signaling among multiple areas, with meaning assembled through constant updating.

If that is right, it could influence how neuroscientists frame future experiments. Rather than focusing only on whether one site lights up during a task, researchers may increasingly examine timing, connectivity, and the sequence of interactions that occur as language unfolds.

Potential clinical implications

A more distributed view of language could also matter in medicine. Understanding which regions communicate, and how disruptions in those links affect comprehension, may help refine how clinicians think about injury, disease, and recovery. Conditions that disturb communication between regions might produce language problems even when no single classic language center is completely disabled.

The report does not describe a new treatment or diagnostic method, so any direct clinical application is still to come. Even so, the conceptual shift is important. If language depends on coordinated networks, then preserving or restoring communication across those networks could become as important as protecting any one brain area.

This may be especially relevant in settings where surgeons, neurologists, or rehabilitation teams are trying to predict which deficits are likely after injury and which functions might be recoverable. A network model allows for the possibility that outcomes depend not just on the location of damage, but on how broadly that damage disrupts communication across the system.

A more realistic picture of cognition

The new findings also fit a larger trend in neuroscience. Many higher-order functions once described in terms of neat compartmentalization are now being revisited as emergent properties of distributed circuits. Memory, attention, decision-making, and now language are increasingly framed as processes that depend on coordinated activity across multiple regions.

That does not make the brain easier to understand. In some ways it makes the challenge harder, because interaction is more complicated than simple localization. But it may also be more accurate. Human cognition is fast, adaptive, and context-sensitive. Those qualities are easier to explain when the brain is treated as a communication system rather than a collection of isolated modules.

The UTHealth Houston work adds to that shift by directly challenging the idea that one region alone can explain language comprehension. Instead, it suggests the brain accomplishes the task through rapid coordination among several regions, each contributing to the larger act of understanding.

What changes next

The immediate value of the study is conceptual. It offers a clearer framework for thinking about language as a network phenomenon. Over time, that framework may shape new experiments, better brain-mapping strategies, and more refined clinical models.

For now, the headline conclusion is straightforward: language appears to rely on rapid cross-talk across the brain. That is a more dynamic and more demanding picture than the old one-region story, but it may also be closer to how the human brain actually works.

This article is based on reporting by Medical Xpress.

Originally published on medicalxpress.com