Lidar is being asked to do more than map shapes

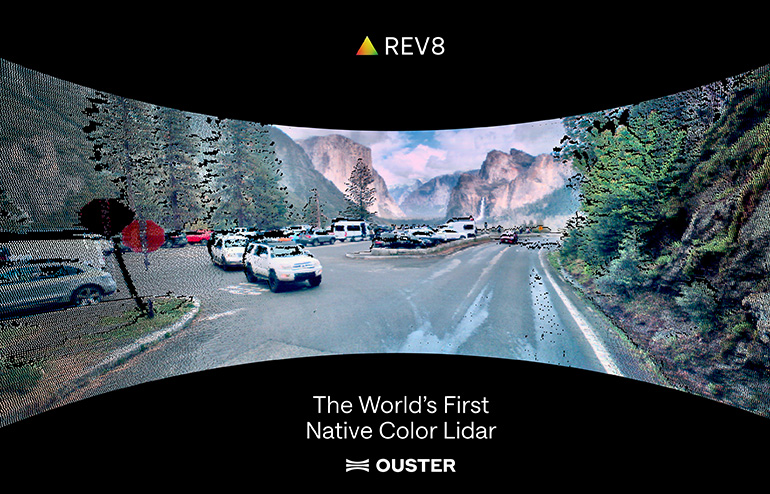

Ouster has launched its Rev8 family of OS digital lidar sensors, introducing what the company says is the world’s first native-color lidar and a substantial jump in core sensing performance. According to Ouster, Rev8 uses the company’s next-generation L4 silicon and delivers up to double the range and resolution of the previous generation. That combination makes the release notable not just as a component refresh, but as a signal of how robotic perception is evolving.

The bigger idea behind the launch is straightforward: machines increasingly need to understand the world with more context, not just more points. Traditional lidar has been prized for structure, distance, and geometry. Cameras provide color and texture. Ouster’s pitch with Rev8 is that bringing native color directly into lidar changes the perception stack itself, giving autonomous systems a richer account of what they are seeing without forcing developers to fuse as many separate sensor streams downstream.

What Rev8 changes on paper

Ouster makes a detailed technical case for why it sees Rev8 as a foundational release. The new family is built on L4 Ouster Silicon and includes both the 128-channel L4 and the 256-channel L4 Max architectures. Ouster says the platform is designed for functional safety, reliability, affordability, and scale. The company also says the new architecture can process color data, provides hardware-enabled HDR, and supports up to 10.4 million points per second with 22.4 gigabits per second of off-chip bandwidth.

Those specifications matter because perception systems have become bottlenecks in many autonomy programs. Better range helps with earlier detection. Higher resolution improves scene detail. Greater processing capability allows more information to be used without unacceptable latency. If those gains arrive together, developers can do more with each sensor position and potentially reduce some of the tradeoffs that have long defined robotics sensing.

Ouster CEO Angus Pacala framed the release in expansive terms, calling Rev8 the most advanced lidar sensor family the company has released and arguing that native-color lidar will give machines “3D human-like sight” for the next era of physical AI. That language is ambitious, but it aligns with the company’s broader strategy of moving beyond being only a lidar component vendor.

From sensor vendor to platform supplier

One of the more revealing parts of the announcement is not the color claim but the business context around it. Ouster recently acquired StereoLabs for $38 million, a move Pacala described as part of building a systems or platform business. That signals a larger shift in the robotics market. Companies are increasingly looking for perception solutions that reduce integration burden rather than just selling discrete hardware blocks.

That is especially relevant in what many companies now describe as physical AI: systems that must perceive, reason, and act in real environments at commercial scale. In that context, customers are less interested in arguing about whether cameras or lidar are philosophically superior. They want perception stacks that work. Ouster’s own framing reflects that pragmatism. Pacala said cameras and lidars should not be seen as being at odds and that the right question is which sensor is right for the job.

Rev8 therefore looks like both a product launch and a positioning statement. Ouster is arguing that lidar can become more context-aware while still preserving the structural advantages that made it valuable in autonomy in the first place. If that claim holds up in deployment, the company strengthens its case that future robotic systems will need integrated sensing platforms rather than narrowly defined components.

Why native color could matter in physical AI

The phrase “native color lidar” will attract attention because it speaks directly to one of the core problems in robotics perception: how to combine geometry with semantic context. Structure tells a robot where objects are and how far away they are. Color can help distinguish materials, markings, signals, and scene elements that may matter for navigation or manipulation. Ouster’s argument is that perception improves when those forms of information are unified rather than loosely fused later.

The company says Rev8 represents a paradigm shift in AI perception because full context requires both structure and color. That is a strong claim, but it is strategically coherent. Robots operating in warehouses, streets, industrial sites, or mixed human environments increasingly need more than obstacle detection. They need better world models. Sensors that can feed those models with richer and more tightly aligned data may offer a meaningful advantage.

The market will now judge Rev8 on execution. The critical questions are whether native color materially improves real-world autonomy, whether the performance gains hold under deployment conditions, and whether customers view the system as reliable and affordable enough to support production at scale. Ouster has clearly designed the announcement to answer those concerns in advance, emphasizing safety, reliability, and manufacturability rather than only headline specifications.

That focus is well chosen. In robotics, the distance between an impressive demo and a viable product is usually measured in reliability, cost, and integration effort. Rev8 is important because it addresses all three, at least on paper. If the product performs as described, it could help push lidar from a specialized perception input toward a more central role in the broader physical AI stack.

- Ouster launched its Rev8 OS lidar family using next-generation L4 silicon.

- The company says Rev8 offers native-color lidar and up to double the range and resolution of the prior generation.

- The platform is positioned for autonomy, functional safety, and production-scale deployment.

- The launch supports Ouster’s broader shift from component supplier toward perception platform business.

This article is based on reporting by The Robot Report.

Originally published on therobotreport.com